Random Variables III: Continuous Distributions & CLT

Megan Ayers

Math 141 | Spring 2026

Friday, Week 10

HW Announcement

- HW 8 is posted

- It is due in 2 weeks, on Friday, April 17th, at 11:59pm.

- After today we will have covered all relevant lecture material

- In the next lab we’ll practice related R functions more

- My suggestion: start on non-R problems now, save 2c-f, 4, 5d-e for after the next lab

Goals for Today

- Answers from last class activity

- Discuss continuous random variables

- Introduce the normal distribution

- Discuss the Central Limit Theorem!

Activity: Survey of Portlanders

Suppose you believe 40% of Portlanders think the city should install more bike lanes. You will survey a simple random sample of 50 Portlanders to test your belief.

Assume your belief is true. Let \(X\) be the number of survey respondents who think the city should install more bike lanes. Does \(X\) follow a Binomial distribution? If so, what are \(n\) and \(p\)?

Calculate the expected value and standard deviation of \(X\) (still assuming your belief is true)

Suppose you conducted the survey and found 30 respondents wanted more bike lanes. What’s the probability that \(X\geq 30\)? (use R!)

Draw the connection between the probability you calculated and a hypothesis test.

Activity: Survey of Portlanders (Answers)

Suppose you believe 40% of Portlanders think the city should install more bike lanes. You will survey a simple random sample of 50 Portlanders to test your belief.

- Assume your belief is true. Let \(X\) be the number of survey respondents who think the city should install more bike lanes. Does \(X\) follow a Binomial distribution? If so, what are \(n\) and \(p\)?

- \(X\) follows a Binomial(\(n=50,p=0.4\)) distribution.

- Calculate the expected value and standard deviation of \(X\) (assuming your belief)

\[E[X] = np = (50)(0.4) = 20\] \[SD[X] = \sqrt{np(1-p)} = \sqrt{(50)(0.4)(1-0.4)} \approx 3.46\]

- Suppose you conducted the survey and found 30 respondents wanted more bike lanes. What’s the probability that \(X\geq 30\)? (use R!)

- \(P[X\geq30] = 1 - P[X\leq 29] = 1 - \texttt{pbinom}(29,~ 50,~ 0.4) \approx 0.0033\)

- Draw the connection between the probability you calculated and a hypothesis test.

- We are testing \(H_0: p=0.4\) vs. \(H_a: p>0.4\)

- Test statistic: \(\hat{p}=30/50\)

- p-value = 0.0033
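The answers above can be reproduced in R. This is a minimal sketch; the variable names (`expected_value`, `std_dev`, `p_value`, `p_value_sum`) are just illustrative choices.

```r
# Survey activity: X ~ Binomial(n = 50, p = 0.4), assuming the belief (H0) is true.
n <- 50
p <- 0.4

expected_value <- n * p              # E[X] = np
std_dev <- sqrt(n * p * (1 - p))     # SD[X] = sqrt(np(1 - p))

# pbinom(q, size, prob) returns P(X <= q), so P(X >= 30) = 1 - P(X <= 29).
p_value <- 1 - pbinom(29, size = n, prob = p)

# Equivalent: add up the probabilities of the outcomes 30, 31, ..., 50.
p_value_sum <- sum(dbinom(30:50, size = n, prob = p))

expected_value
round(std_dev, 2)
round(p_value, 4)
```

Note the `29`: since `pbinom()` gives \(P(X \leq q)\), using `30` would wrongly leave the outcome \(X = 30\) out of the p-value.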

Continuous Random Variables

The Distribution of a Continuous Variable

If \(X\) is a continuous random variable, it can take on any value in an interval.

- e.g., \(0\leq X\leq 10\) or \(-\infty < X < \infty\)

Recall: For discrete random variables, we could list the probability of each possible outcome.

- For continuous random variables, this won’t work. There are an uncountable number of outcomes!

Instead, we represent relative chances of different possible outcomes using a density function \(f(x)\)

- \(f(x) \geq 0\) for all possible values \(x\)

- The total area under the function is \(1\)

- \(P(a\leq X\leq b)\) is the area under \(f\) between \(a\) and \(b\)

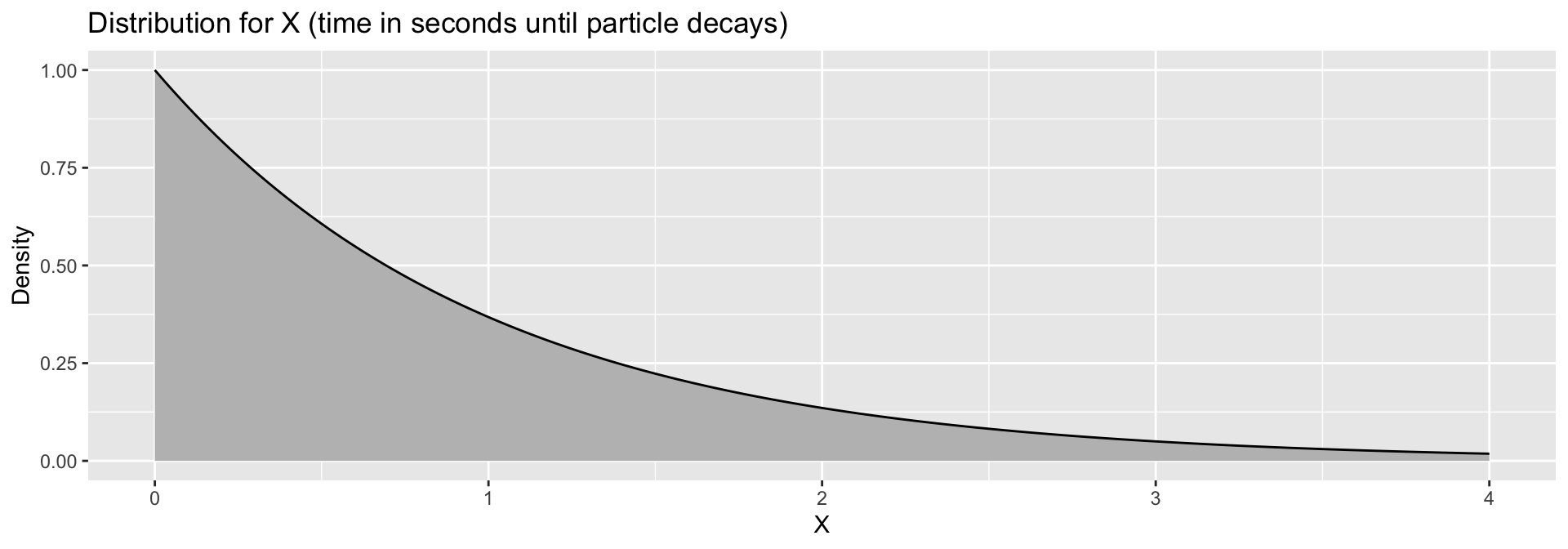

Density Curve

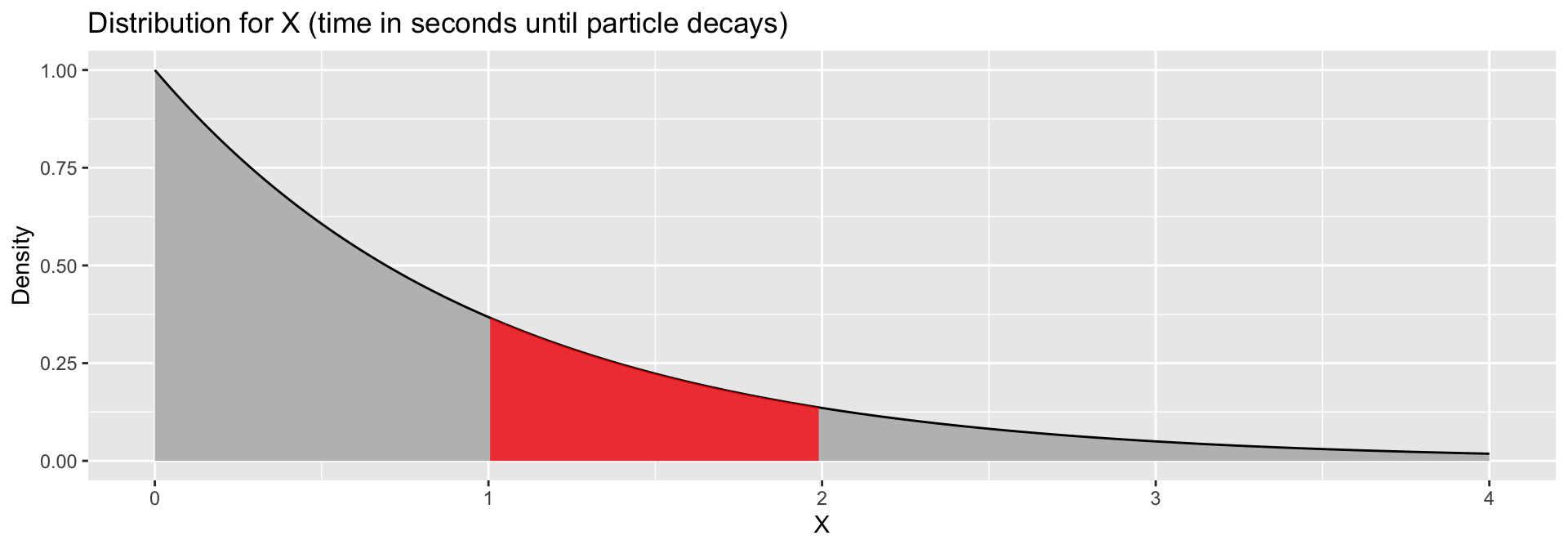

Suppose \(X\) is a random variable representing the time (in seconds) it takes for a particle to experience radioactive decay, with density function \[ f(x) = e^{-x} \qquad \textrm{for } x\geq 0 \]

- The probability that it takes between \(1\) and \(2\) seconds to decay is the area under the curve between \(1\) and \(2\). \(P(1 < X < 2) =\)

- The probability that it takes between \(1\) and \(2\) seconds to decay is the area under the curve between \(1\) and \(2\). \(P(1 < X < 2) \approx \color{red}{0.233}\)

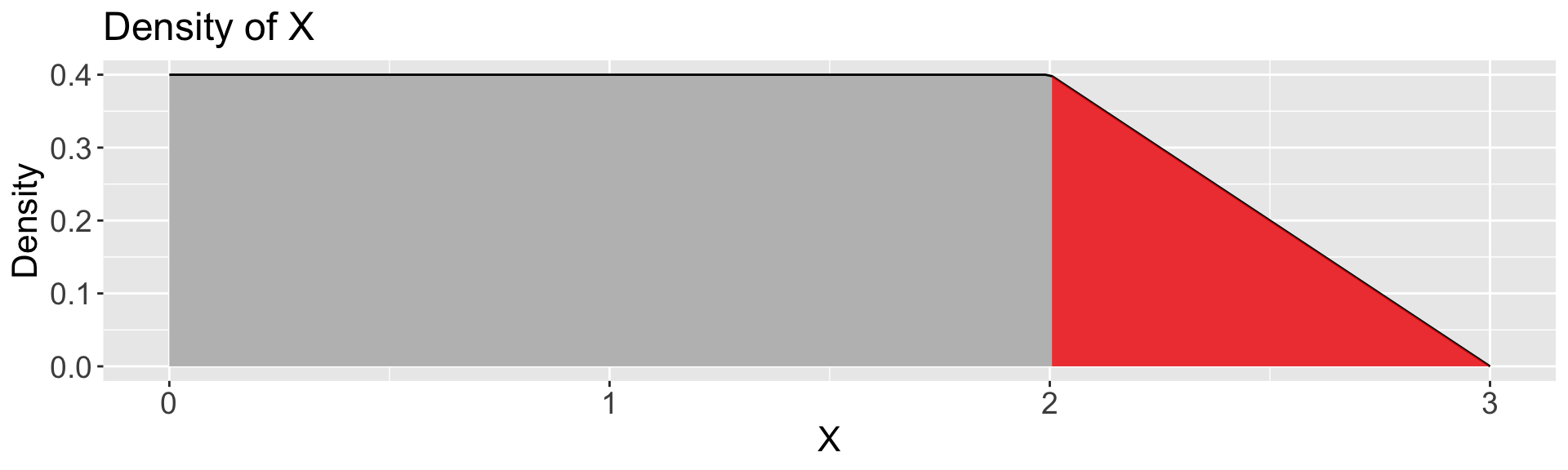
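As a rough check, R can approximate this area numerically. The sketch below uses `integrate()` on the density from the slide, and also uses the fact that \(f(x) = e^{-x}\) is the Exponential distribution with rate 1, so `pexp()` gives the same area. The names `f`, `area`, `area_cdf`, and `total_area` are illustrative.

```r
# Density of the decay time from the slide: f(x) = e^(-x) for x >= 0.
f <- function(x) exp(-x)

# Area under f between 1 and 2, by numerical integration.
area <- integrate(f, lower = 1, upper = 2)$value

# The same probability from the Exponential(rate = 1) CDF: P(X <= 2) - P(X <= 1).
area_cdf <- pexp(2, rate = 1) - pexp(1, rate = 1)

# The total area under any density should be 1.
total_area <- integrate(f, lower = 0, upper = Inf)$value

round(c(area, area_cdf, total_area), 3)
```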

Practice with Continuous Random Variables

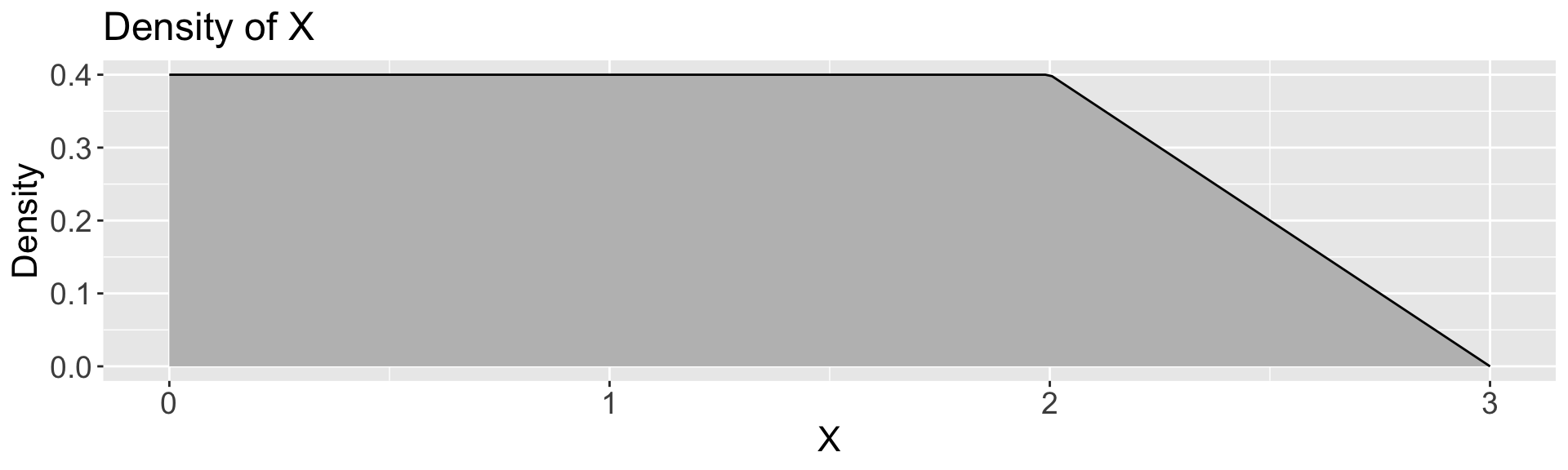

Let \(X\) be a continuous random variable with the density function shown in the figure.

Calculate:

\(P(X \leq 1)\)

\(P(X \leq 2)\)

\(P(2 \leq X \leq 3)\)

Practice with Continuous Random Variables

Let \(X\) be a continuous random variable with the density function shown in the figure.

Calculate:

\(P(X \leq 1) = 1 * 0.4 = \boxed{0.4}\)

\(P(X \leq 2)\)

\(P(2 \leq X \leq 3)\)

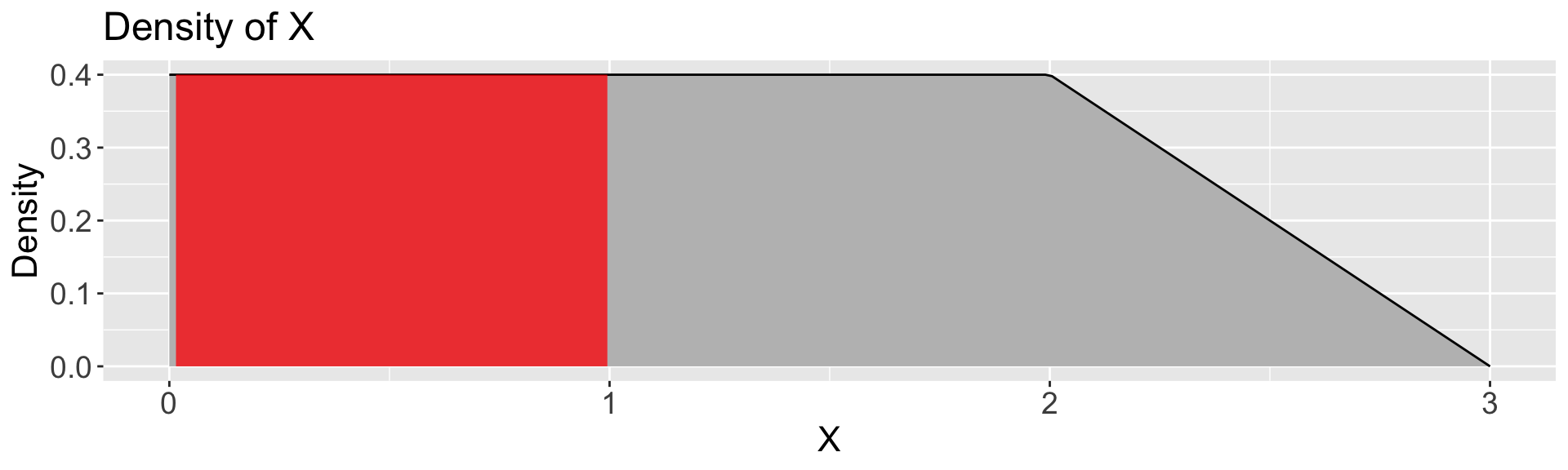

Practice with Continuous Random Variables

Let \(X\) be a continuous random variable with the density function shown in the figure.

Calculate:

\(P(X \leq 1) = 1 * 0.4 = \boxed{0.4}\)

\(P(X \leq 2) = 2 * 0.4 = \boxed{0.8}\)

\(P(2 \leq X \leq 3)\)

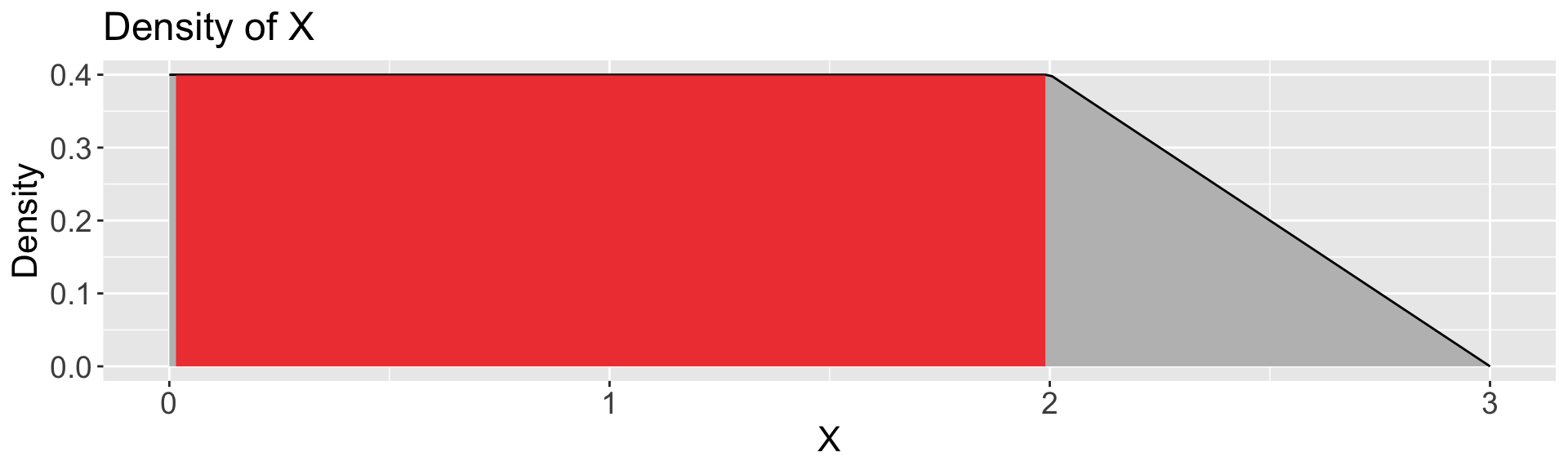

Practice with Continuous Random Variables

Let \(X\) be a continuous random variable with the density function shown in the figure.

Calculate:

\(P(X \leq 1) = 1 * 0.4 = \boxed{0.4}\)

\(P(X \leq 2) = 2 * 0.4 = \boxed{0.8}\)

\(P(2 \leq X \leq 3) = \frac{1}{2} * 1 * 0.4 = \boxed{0.2}\)
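These areas can also be checked numerically. The density itself appears only in the figure, so the sketch below assumes a shape inferred from the worked answers: constant height 0.4 on \([0, 2]\), then a straight line down to 0 at \(x = 3\). Both `f` and the helper `prob_between()` are hypothetical names.

```r
# ASSUMED density, inferred from the worked answers (the figure is not reproduced here):
# constant height 0.4 on [0, 2], then a straight line down to 0 at x = 3.
f <- function(x) {
  ifelse(x < 0 | x > 3, 0,
         ifelse(x <= 2, 0.4, 0.4 * (3 - x)))
}

# Hypothetical helper: P(a <= X <= b) as the area under f between a and b.
prob_between <- function(a, b) integrate(f, lower = a, upper = b)$value

prob_between(0, 1)   # rectangle: 1 * 0.4
prob_between(0, 2)   # rectangle: 2 * 0.4
prob_between(2, 3)   # triangle: 1/2 * 1 * 0.4
prob_between(0, 3)   # total area under the density
```

If the assumed shape is right, the last line should come out to 1, as required of any density.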

Mean and Variance

Continuous variables have a mean, variance, and standard deviation too!

- We can’t use the same definition as for discrete random variables: \[E[X] = \sum_x xP[X=x]\]

- There are uncountably many possible values, so we can’t list them in a sum

- Each specific value has probability 0 of occurring

Instead, we have to use calculus to define the mean and variance (here \(\mu = E[X]\)): \[ \begin{align} E[X] &= \int x f(x) \, dx\\ \mathrm{Var}(X) &= \int (x - \mu)^2 f(x) \, dx \end{align} \]

Mean and Variance

\[ \begin{align} E[X] &= \int x f(x) \, dx\\ \mathrm{Var}(X) &= \int (x - \mu)^2 f(x) \, dx\\ \mathrm{SD}(X) &=\sqrt{\mathrm{Var}(X)} \end{align} \]

- These integrals are tools to meaningfully average infinitely many values

- (We won’t compute any integrals in this class!)

As always…

- the mean of a random variable represents a typical value.

- the standard deviation represents the typical size of deviations from the mean.
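We won't compute these integrals by hand, but R can approximate them. As an illustration only, the sketch below reuses the decay-time density \(f(x) = e^{-x}\) from earlier and applies the two definitions directly with `integrate()`. The names `mu`, `variance`, and `std_dev` are illustrative.

```r
# Example density from earlier in the lecture: f(x) = e^(-x) for x >= 0.
f <- function(x) exp(-x)

# E[X] = integral of x * f(x) over all possible values
mu <- integrate(function(x) x * f(x), lower = 0, upper = Inf)$value

# Var(X) = integral of (x - mu)^2 * f(x) over all possible values
variance <- integrate(function(x) (x - mu)^2 * f(x), lower = 0, upper = Inf)$value

# SD(X) = square root of the variance
std_dev <- sqrt(variance)

round(c(mean = mu, variance = variance, sd = std_dev), 3)
```

For this particular density, the mean, variance, and standard deviation all come out to 1 (up to numerical error).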

The Normal Distribution

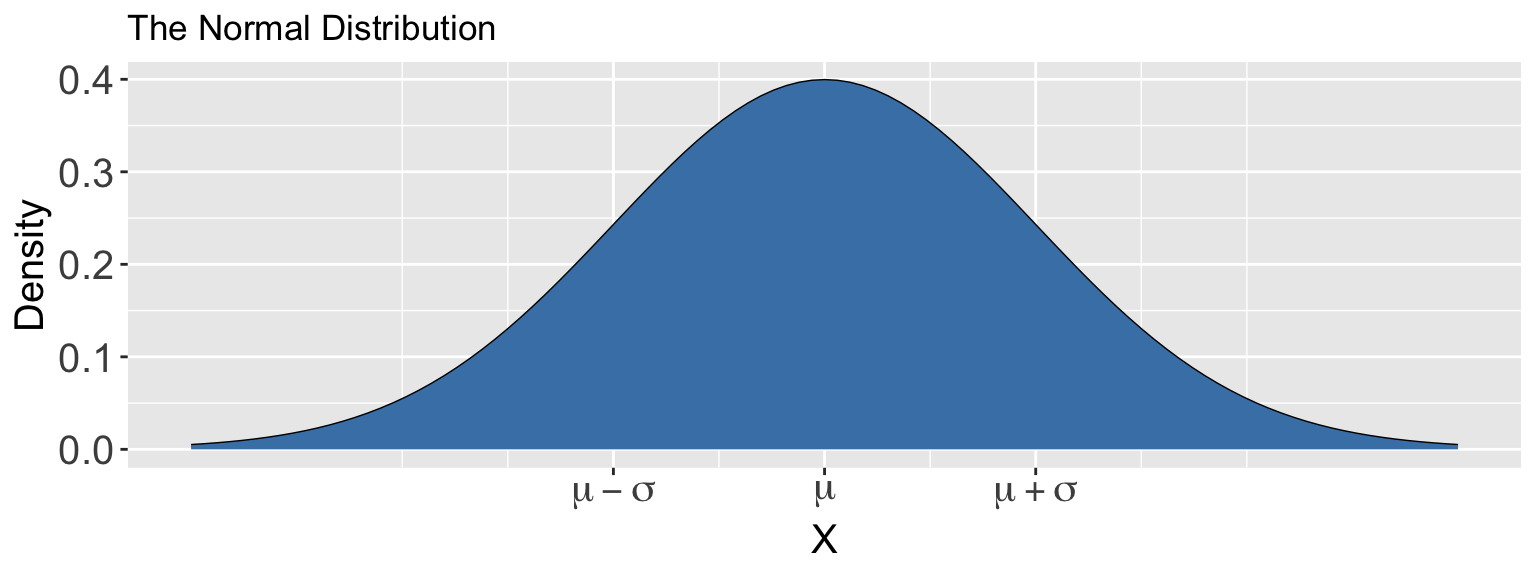

The Normal Distribution

The Normal distribution is defined by two parameters:

- Mean, \(\mu\)

- Standard deviation, \(\sigma\)

Suppose \(X\) follows a Normal(\(\mu\),\(\sigma\)) distribution. The density function is

\[f(x) = \frac{1}{\sqrt{2 \pi \sigma^2}} \cdot\exp \left(\frac{-(x-\mu)^2}{2\sigma^2}\right)\]

(Don’t memorize this!)

This is the official name for “bell curves”!

Calculating Probabilities

R has built-in functions for calculating probabilities from a normal distribution.

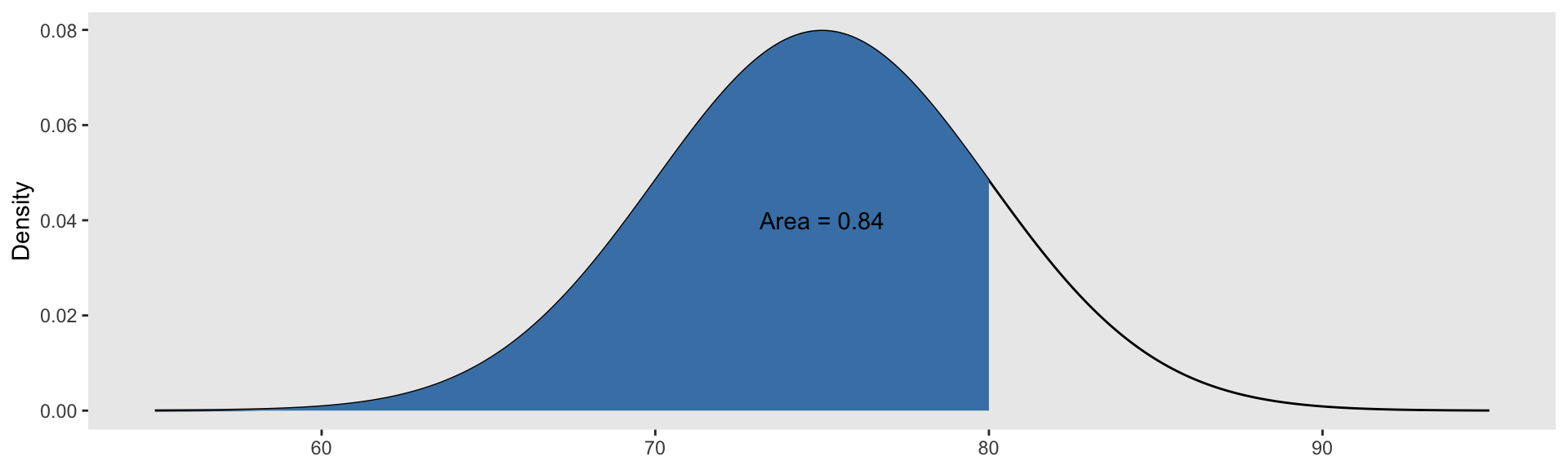

Suppose \(X\sim \text{Normal}(\mu=75, \sigma=5)\). Then:

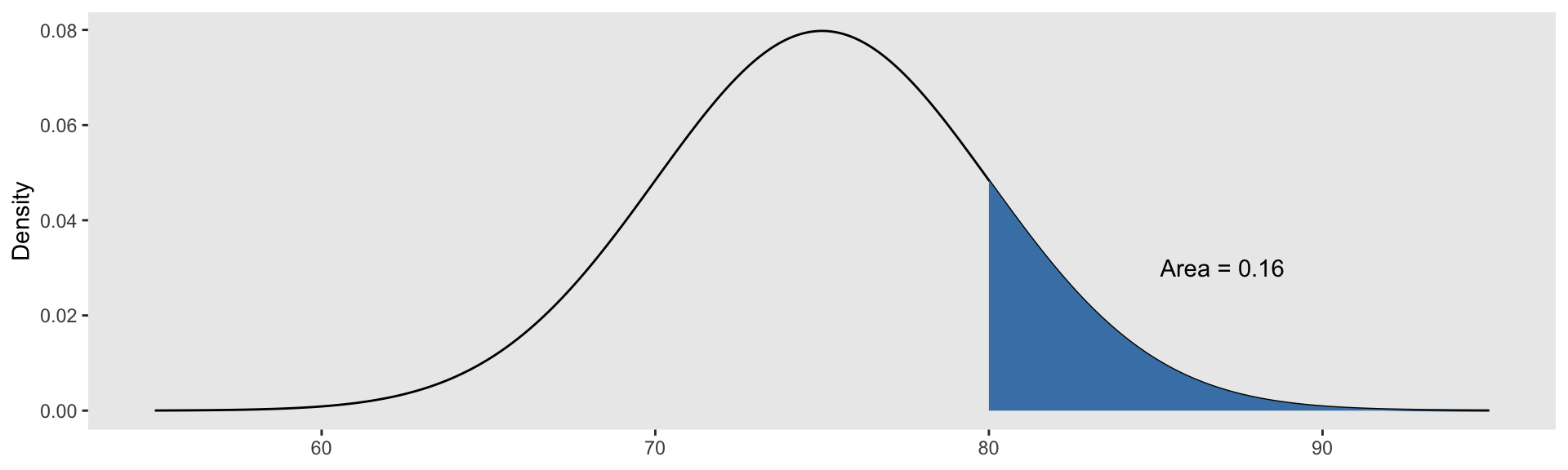

Calculating Probabilities

R has built-in functions for calculating probabilities from a normal distribution.

Suppose \(X\sim \text{Normal}(\mu=75, \sigma=5)\). Then:

- \(P(X \geq 80) = P(X>80)=\)

Calculating Probabilities

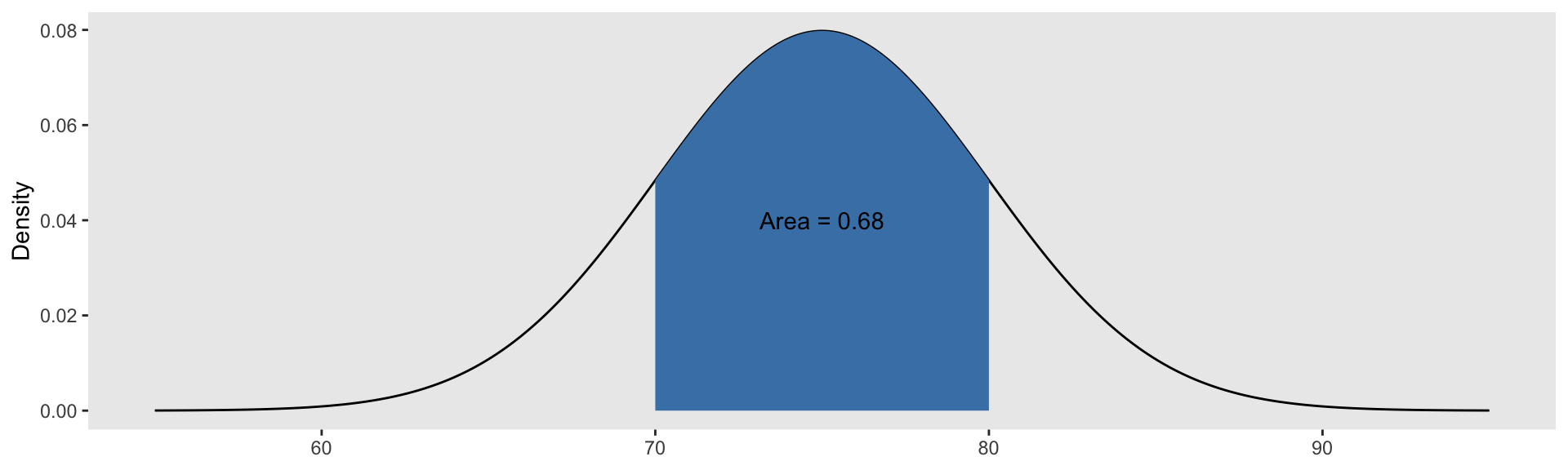

R has built-in functions for calculating probabilities from a normal distribution.

Suppose \(X\sim \text{Normal}(\mu=75, \sigma=5)\). Then:

- \(P(70 \leq X \leq 80) = P(X\leq 80) - P(X\leq 70) =\)

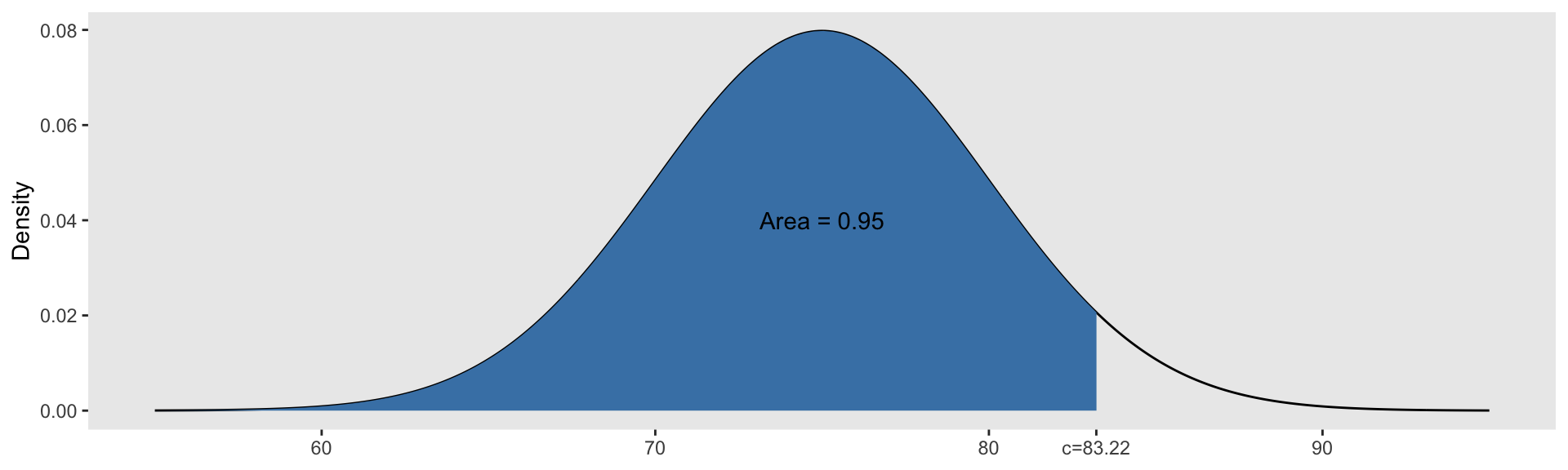
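One way to compute both probabilities in R is with `pnorm()`, which returns \(P(X \leq q)\). This is a sketch; the variable names are illustrative.

```r
# X ~ Normal(mean = 75, sd = 5). pnorm(q, mean, sd) returns P(X <= q).
mu <- 75
sigma <- 5

# P(X >= 80): the upper tail, so subtract the lower tail from 1.
p_upper <- 1 - pnorm(80, mean = mu, sd = sigma)

# P(70 <= X <= 80) = P(X <= 80) - P(X <= 70)
p_middle <- pnorm(80, mean = mu, sd = sigma) - pnorm(70, mean = mu, sd = sigma)

round(p_upper, 4)    # about 0.1587
round(p_middle, 4)   # about 0.6827
```

Because \(X\) is continuous, \(P(X = 80) = 0\), so it makes no difference whether the inequalities are strict.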

Finding Quantiles

We can also use R to find quantiles of a Normal distribution.

Suppose \(X\sim \text{Normal}(\mu=75, \sigma=5)\). Then:
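For example, `qnorm()` goes in the opposite direction from `pnorm()`: given a probability \(p\), it returns the value \(x\) with \(P(X \leq x) = p\). The 90th percentile below is just an illustrative choice.

```r
# X ~ Normal(mean = 75, sd = 5). qnorm(p, mean, sd) returns x with P(X <= x) = p.
q90 <- qnorm(0.90, mean = 75, sd = 5)   # 90th percentile
q50 <- qnorm(0.50, mean = 75, sd = 5)   # median, which equals the mean for a Normal

round(q90, 2)   # about 81.41
q50

# Round trip: plugging the quantile back into pnorm() recovers the probability.
pnorm(q90, mean = 75, sd = 5)
```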

Scale and Translation Invariance

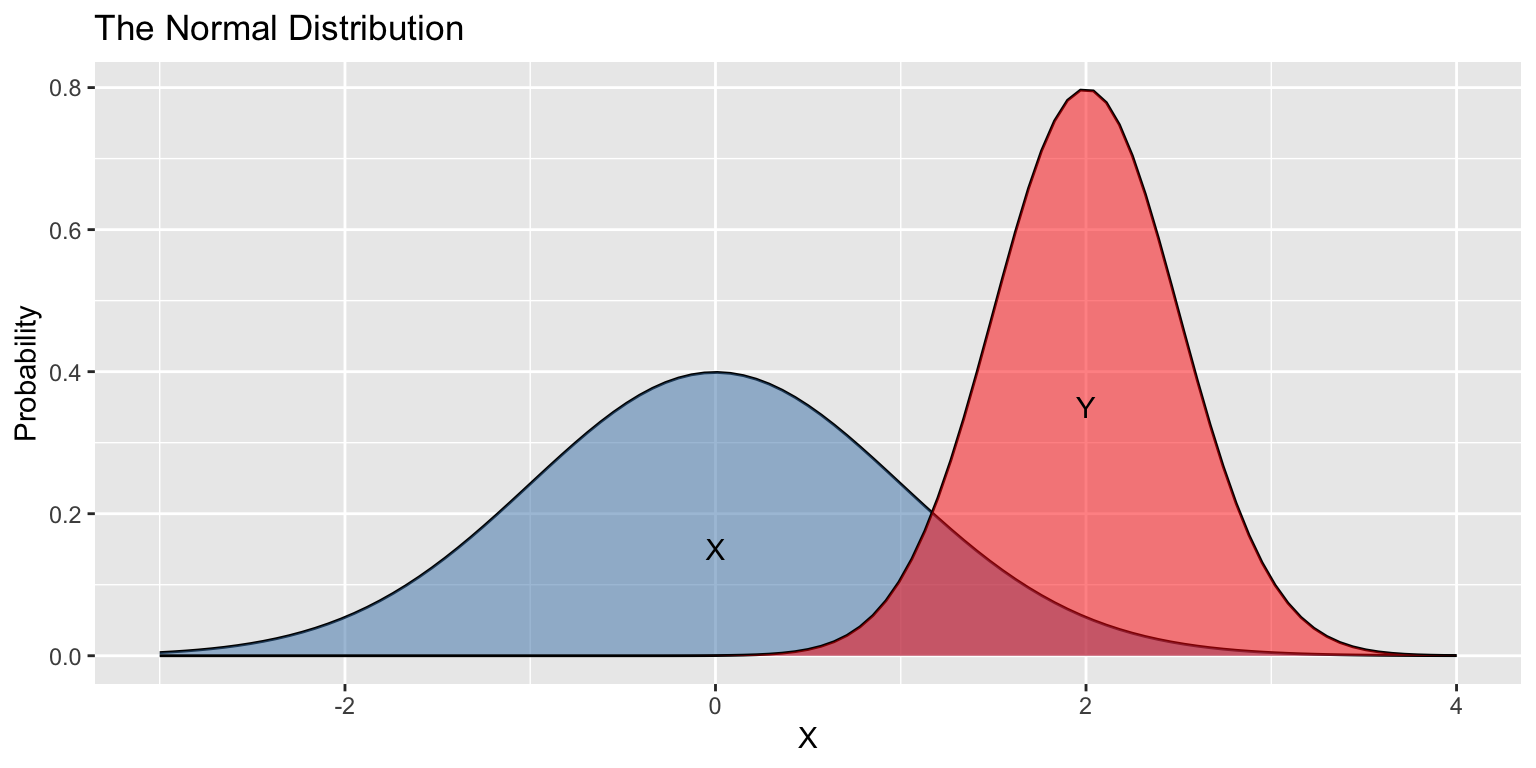

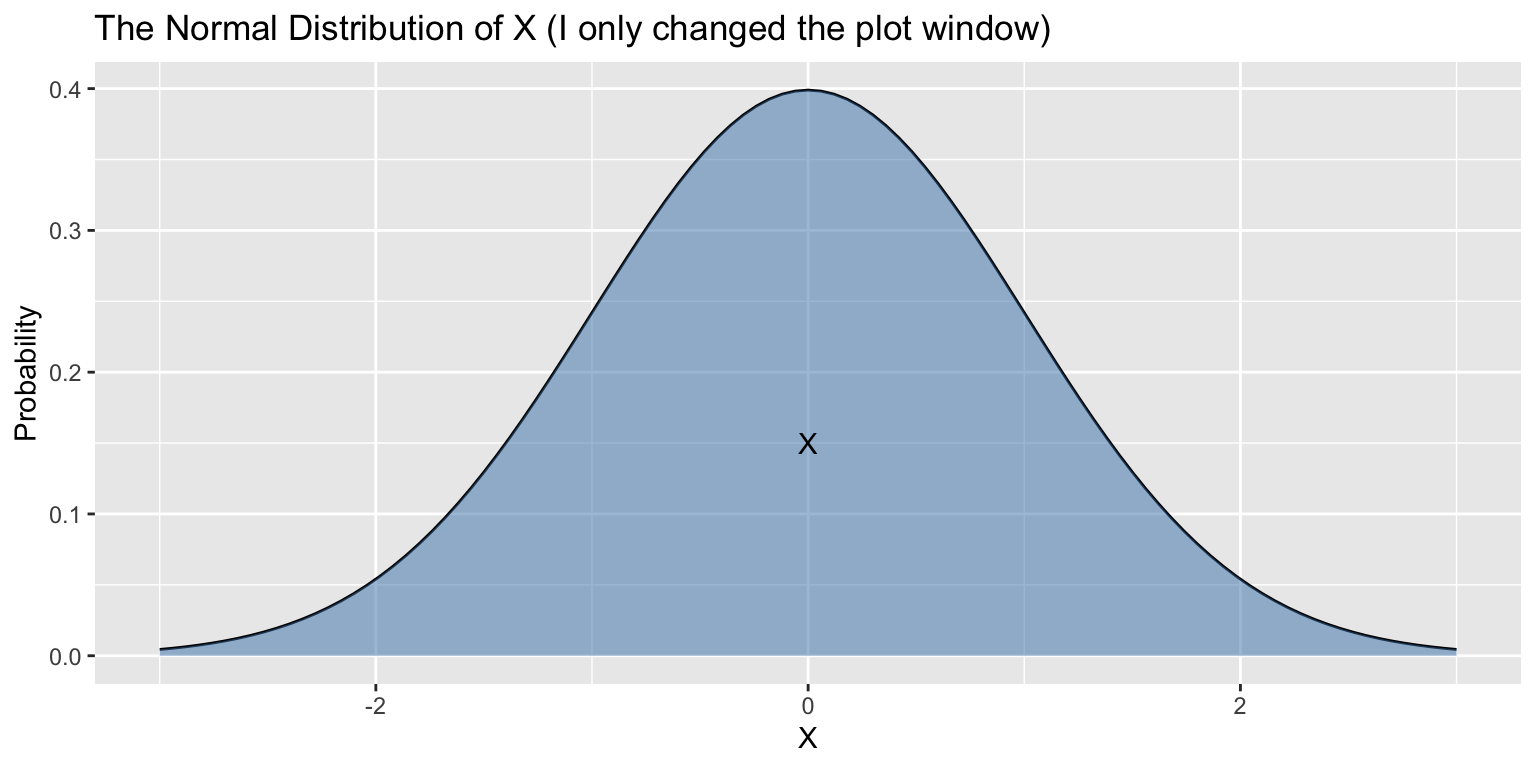

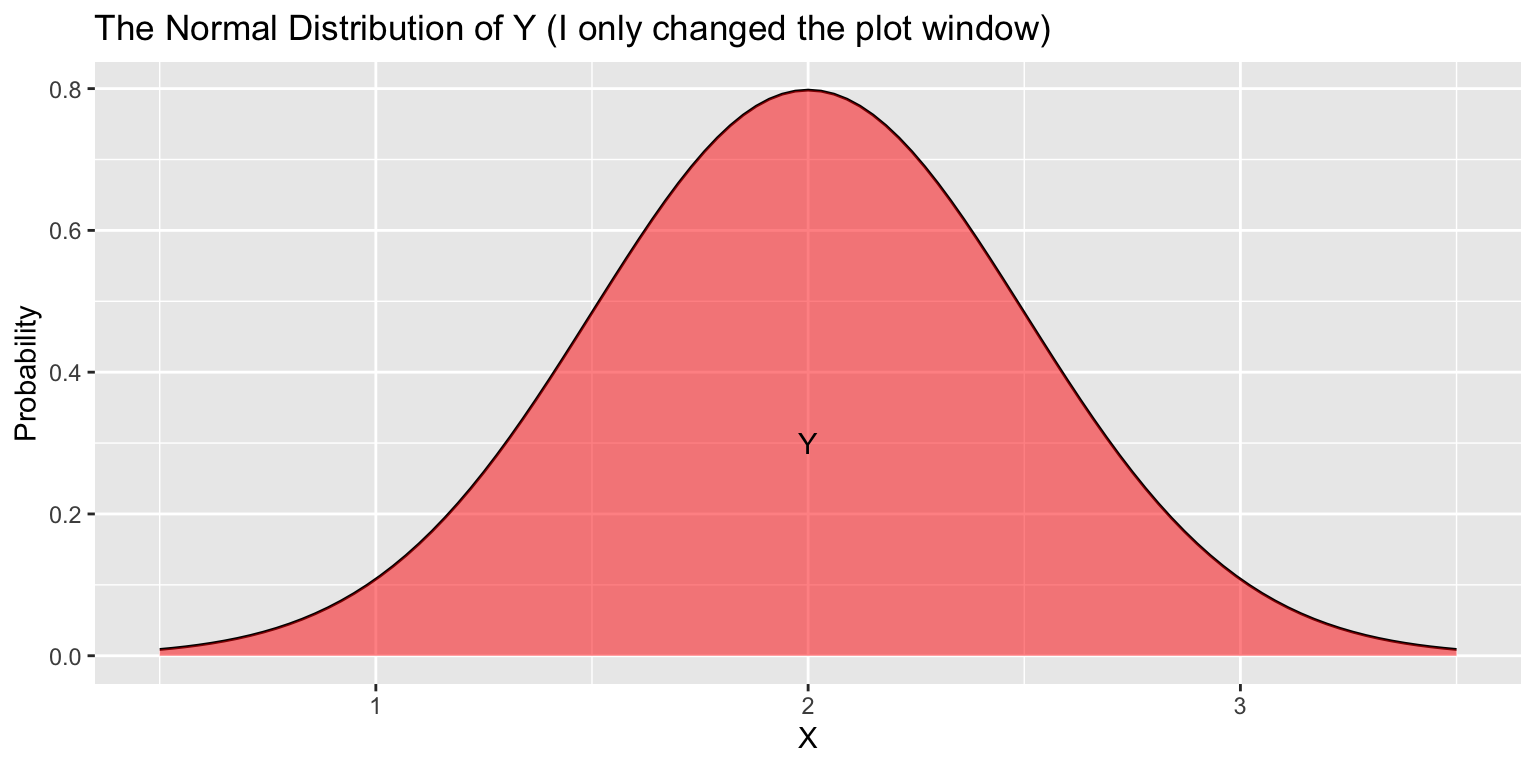

Suppose \(X\sim\text{Normal}(\mu=0,\sigma=1)\) and \(Y\sim\text{Normal}(\mu=2,\sigma=0.25)\).

\(X\) and \(Y\) have different means, heights, and widths…

- But the same shapes!
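"Same shape" can be made concrete with `dnorm()`, which evaluates the Normal density. In this sketch, each point on \(Y\)'s scale is matched to the point on \(X\)'s scale that is the same number of standard deviations from the mean. The grid `z` is an arbitrary illustrative choice.

```r
# Densities of X ~ Normal(0, 1) and Y ~ Normal(2, 0.25).
z <- seq(-3, 3, by = 0.5)   # points measured in SDs from the mean
y <- 2 + 0.25 * z           # the matching points on Y's scale

dens_x <- dnorm(z, mean = 0, sd = 1)
dens_y <- dnorm(y, mean = 2, sd = 0.25)

# Y's curve is X's curve shifted to 2, squeezed to 1/4 the width,
# and stretched to 4 times the height (so the area stays 1).
all.equal(dens_y, dens_x / 0.25)
```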

Standardization

Theorem: Standardization

Suppose \(X\sim\text{Normal}(\mu,\sigma)\). Then, \(Z = \frac{X - \mu}{\sigma}\) is a Normal random variable with mean 0 and standard deviation 1.

Standard Normal: a Normal random variable with mean \(0\) and standard deviation \(1\).

- The process of subtracting \(\mu\) and then dividing by \(\sigma\) is called standardizing.

- Useful Fact: If \(X\sim\text{Normal}(\mu=100,\sigma=10)\) and \(Z\sim\text{Normal}(\mu=0,\sigma=1)\), then,

\[P\Big[X < 90\Big] = P\Big[X<\text{ 1 SD below }\mu\Big] = P\Big[Z<-1\Big]\]

More Generally: If \(X\sim\text{Normal}(\mu,\sigma)\) and \(Z\sim\text{Normal}(0,1)\), then \[ P(X \leq x) = P\left(Z \leq \frac{x-\mu}{\sigma}\right) \]

Practice with Standard Normals

More Generally: If \(X\sim\text{Normal}(\mu,\sigma)\) and \(Z\sim\text{Normal}(0,1)\), then

\[ P(X \leq x) = P\left(Z \leq \frac{x-\mu}{\sigma}\right) \]

Practice: For each of the following, rewrite \(P(X \leq x)\) as \(P(Z \leq z)\) for some \(z\).

\(P(X \leq 10)\) where \(X\sim\text{Normal}(\mu=5,\sigma=5)\)

\(P(X \leq 15)\) where \(X\sim\text{Normal}(\mu=20,\sigma=10)\)

Practice with Standard Normals

More Generally: If \(X\sim\text{Normal}(\mu,\sigma)\) and \(Z\sim\text{Normal}(0,1)\), then

\[ P(X \leq x) = P\left(Z \leq \frac{x-\mu}{\sigma}\right) \]

Practice: For each of the following, rewrite \(P(X \leq x)\) as \(P(Z \leq z)\) for some \(z\).

- \(P(X \leq 10)\) where \(X\sim\text{Normal}(\mu=5,\sigma=5)\)

- \(\frac{10 - \mu}{\sigma} = \frac{10 - 5}{5} = 1 = z\), so \(\color{red}{P(X \leq 10) = P(Z \leq 1)}\)

- \(P(X \leq 15)\) where \(X\sim\text{Normal}(\mu=20,\sigma=10)\)

- \(\frac{15 - \mu}{\sigma} = \frac{15 - 20}{10} = -0.5 = z\), so \(\color{red}{P(X \leq 15) = P(Z \leq -0.5)}\)
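The standardization rule can be verified in R, since `pnorm()` with no `mean` or `sd` arguments uses the standard Normal. The helper `standardize()` below is a hypothetical name, not a built-in function.

```r
# Useful fact from the slide: for X ~ Normal(100, 10), 90 is 1 SD below the mean.
p_x <- pnorm(90, mean = 100, sd = 10)   # P(X < 90)
p_z <- pnorm(-1)                        # P(Z < -1); defaults are mean = 0, sd = 1

# Hypothetical helper: convert a value x to its z-score.
standardize <- function(x, mu, sigma) (x - mu) / sigma

z1 <- standardize(10, mu = 5, sigma = 5)     # 1
z2 <- standardize(15, mu = 20, sigma = 10)   # -0.5

# Each pair of probabilities should agree.
all.equal(p_x, p_z)
all.equal(pnorm(10, mean = 5, sd = 5), pnorm(z1))
all.equal(pnorm(15, mean = 20, sd = 10), pnorm(z2))
```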

The Central Limit Theorem

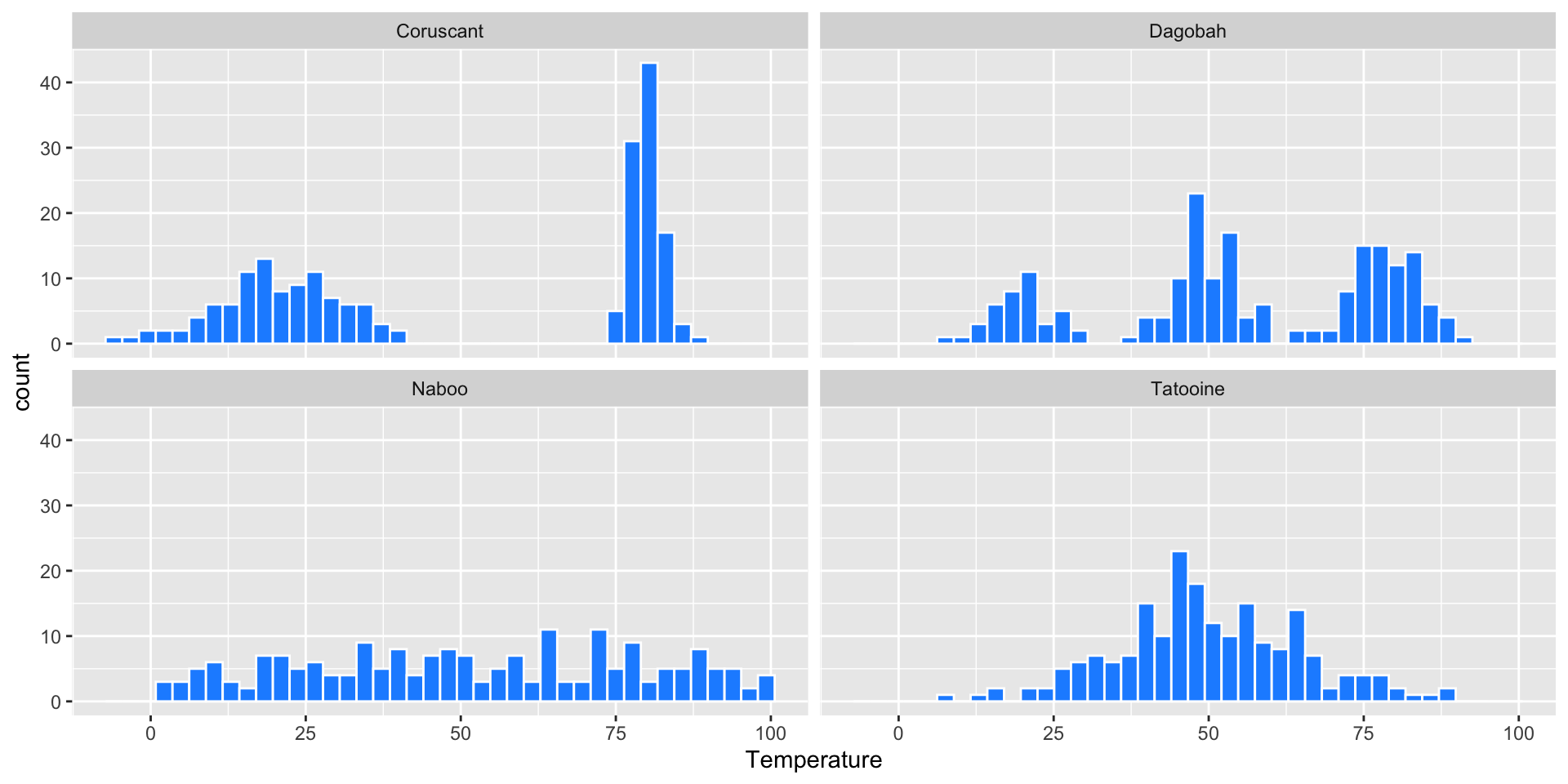

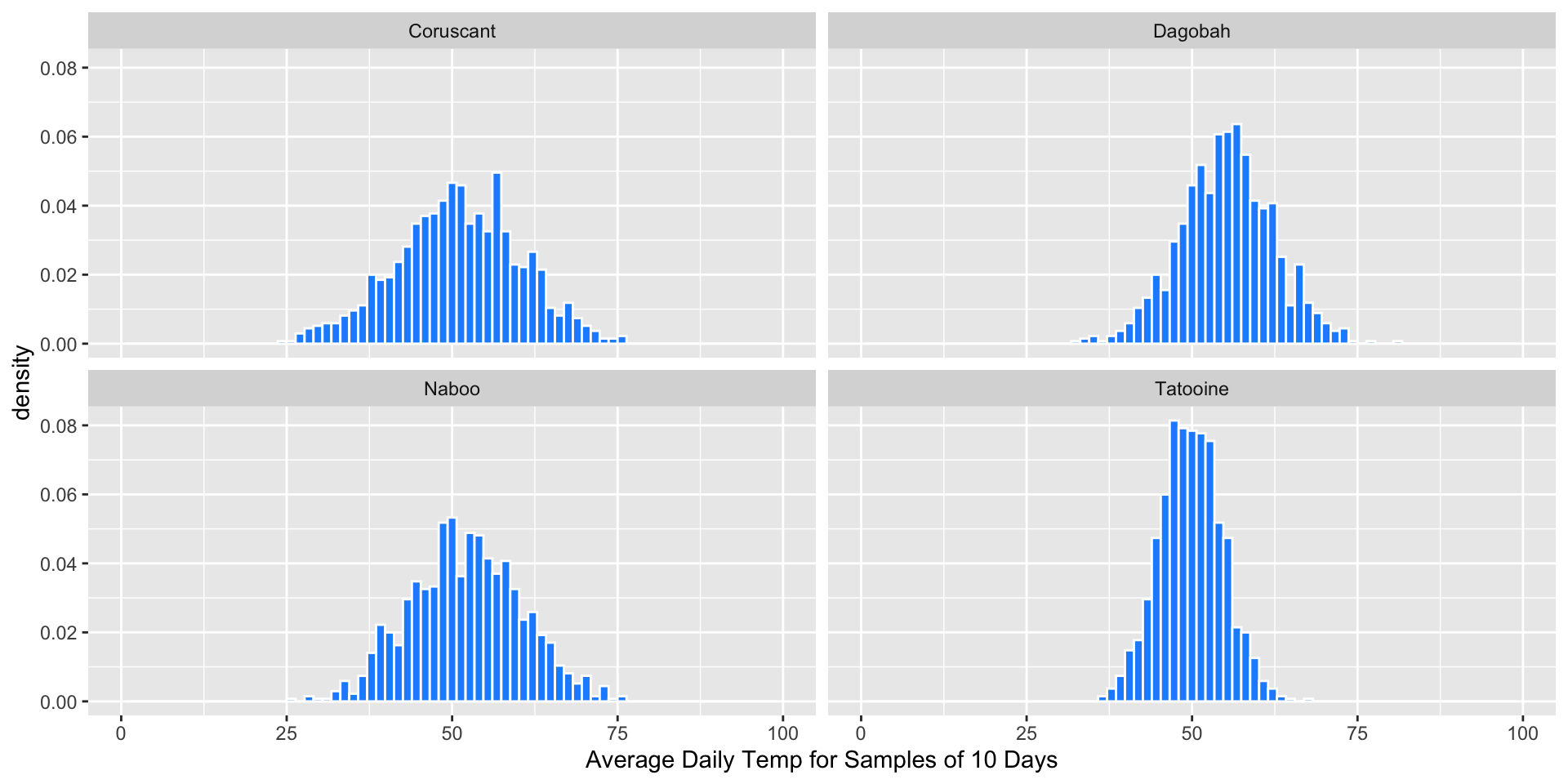

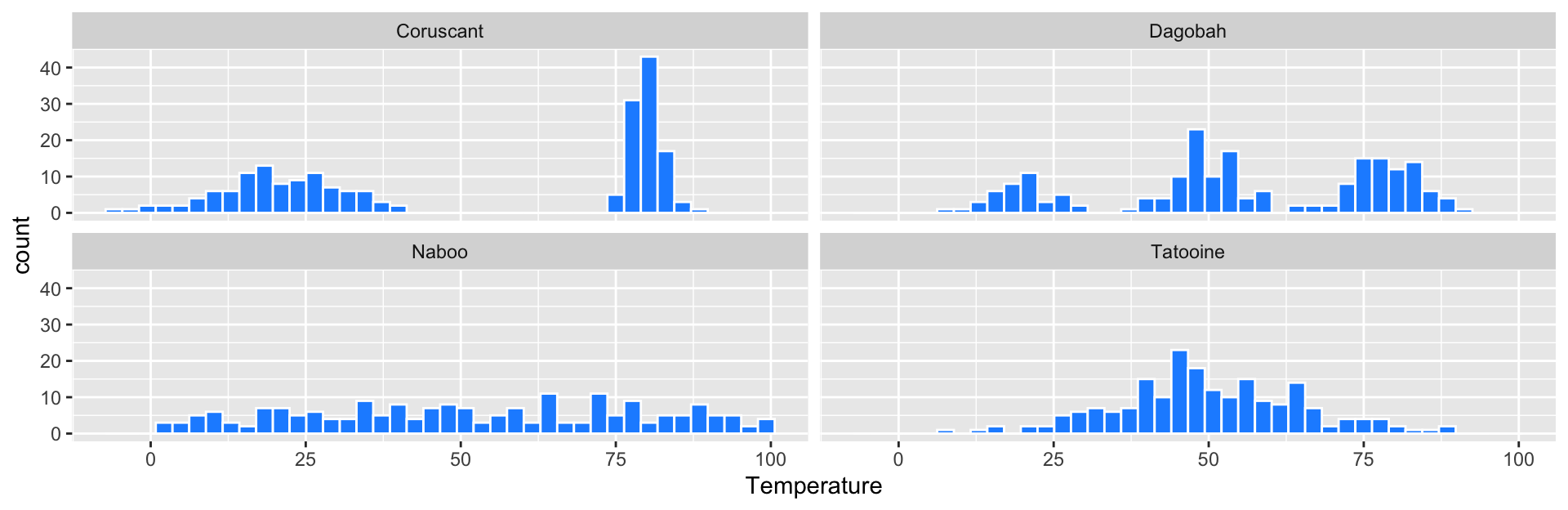

Daily Temperatures

Suppose we’re astronomers studying four faraway planets: Naboo, Tatooine, Coruscant, and Dagobah. We have the daily temperature on each of these planets over 200 days:

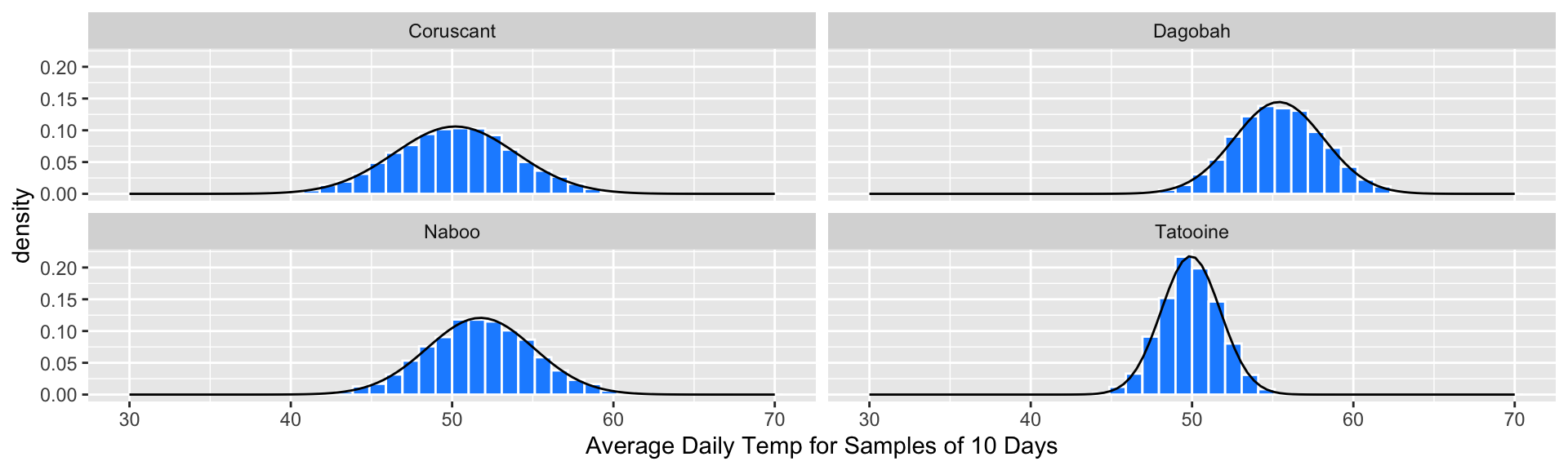

Random Sample Means (n=10)

Suppose we repeatedly take samples of 10 days from each planet and compute the average temperature \(\bar{x}\) for each sample. The distribution of these sample means is the sampling distribution of \(\bar{x}\):

- Q: What does the distribution of sample means look like?

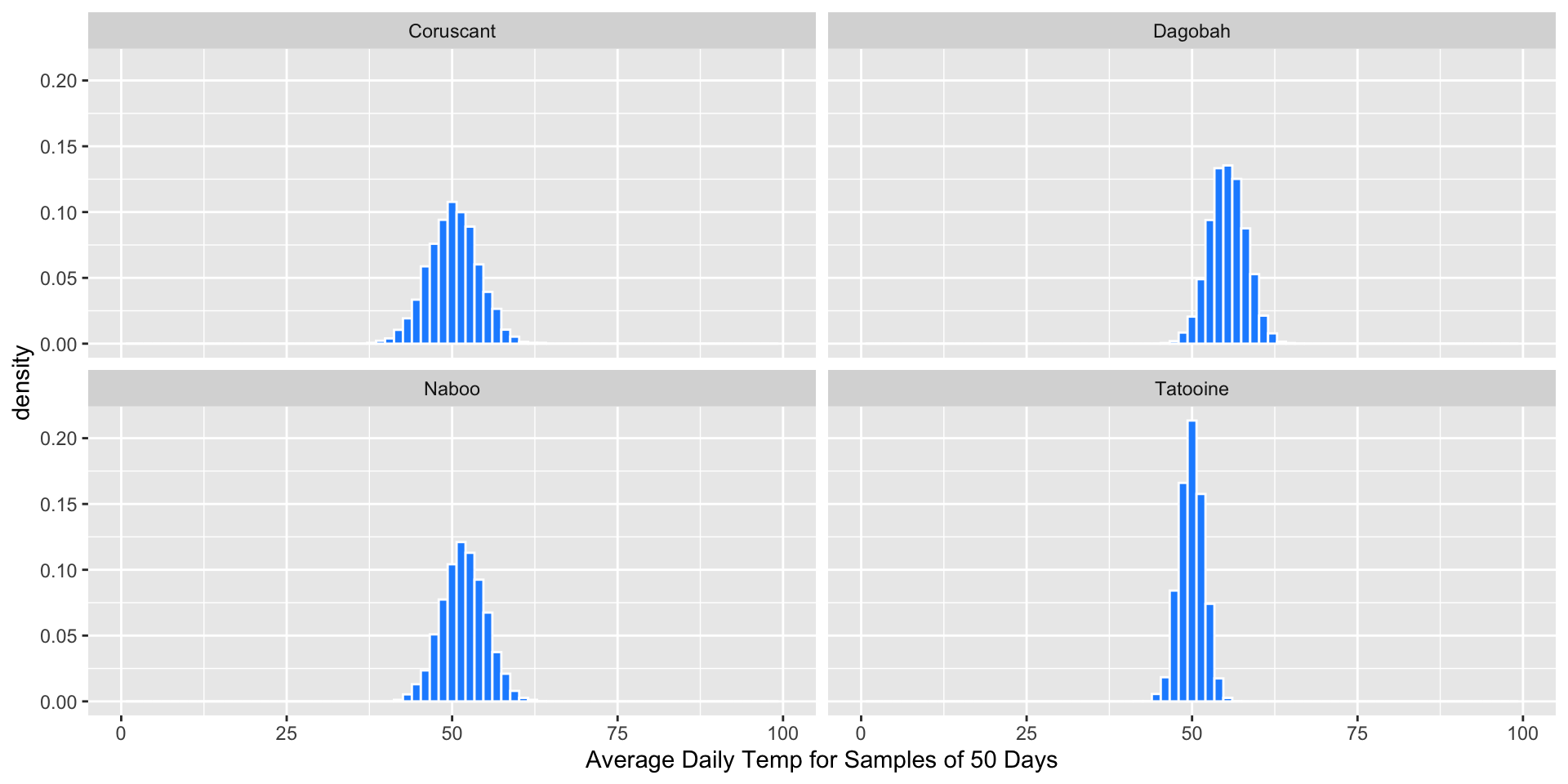

Random Sample Means (n=50)

Suppose we repeatedly take samples of 50 days from each planet and compute the average temperature \(\bar{x}\) for each sample. The distribution of these sample means is the sampling distribution of \(\bar{x}\):

Q: What does the distribution of sample means look like?

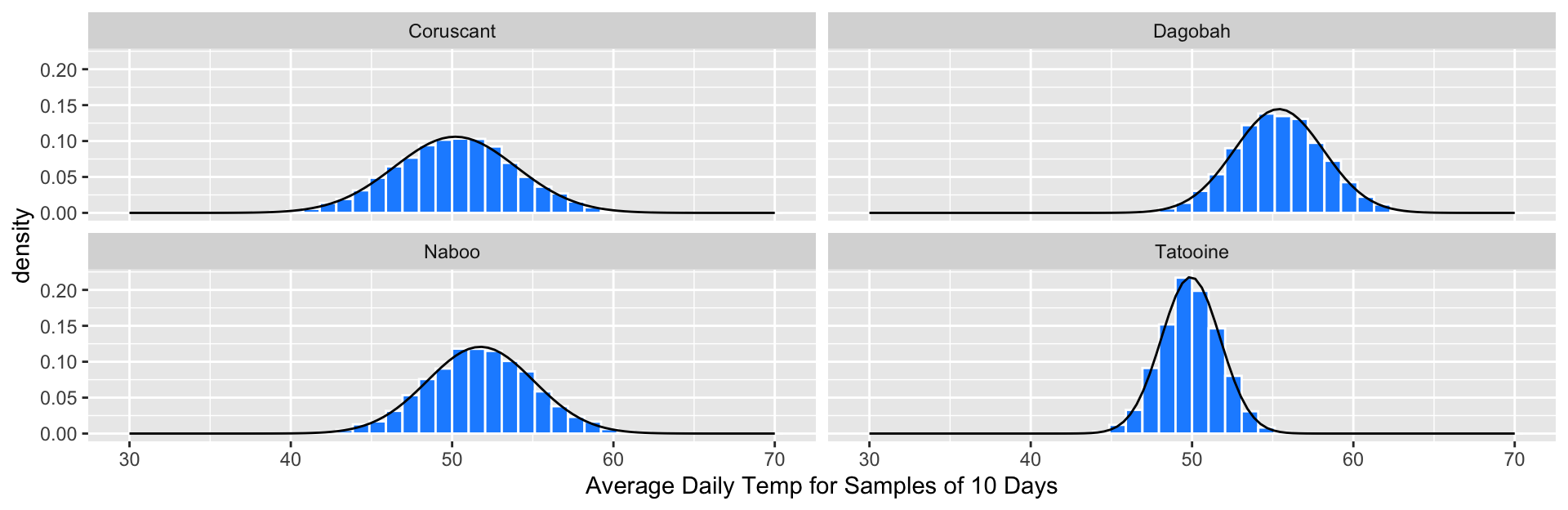
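The resampling procedure can be sketched in R. The planet data is not included here, so the code below uses a made-up, right-skewed stand-in (`temps`) for one planet's 200 daily temperatures. The helper `sample_means()` is also a hypothetical name.

```r
set.seed(141)

# HYPOTHETICAL stand-in for one planet's 200 daily temperatures (right-skewed).
temps <- rexp(200, rate = 1 / 20)

# Hypothetical helper: draw `reps` random samples of `n` days
# and return the mean temperature of each sample.
sample_means <- function(data, n, reps = 5000) {
  replicate(reps, mean(sample(data, size = n)))
}

means_10 <- sample_means(temps, n = 10)
means_50 <- sample_means(temps, n = 50)

# Both sets of sample means are centered near the mean of all 200 days...
c(all_days = mean(temps), n10 = mean(means_10), n50 = mean(means_50))

# ...but the means of the larger samples vary less.
c(n10 = sd(means_10), n50 = sd(means_50))
```

Calling `hist(means_10)` and `hist(means_50)` afterwards is one way to compare the shapes of the two sampling distributions.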

Sampling Distributions are Approximately Normal

The sampling distribution for each planet appeared approximately Normal with \(n=50\), regardless of the shape of the population distribution.

We’ve seen this before! As \(n\) increases,

- The sampling distribution becomes closer to Normal

- The variance of the sampling distribution decreases

- The mean of the sampling distribution doesn’t change; it equals the population parameter.

The Central Limit Theorem (CLT)

Theorem: Central Limit Theorem

Suppose a simple random sample of size \(n\) is drawn from a population with finite mean \(\mu\) and finite standard deviation \(\sigma\). Let \(\bar{x}\) be the sample mean. When \(n\) is large, \(\bar{x}\) is approximately Normal with mean \(\mu\) and standard deviation \(\sigma/\sqrt{n}\): \[\bar{x} \sim \text{Normal}\Big(\mu, \frac{\sigma}{\sqrt{n}}\Big)\]

A proof of the CLT requires more advanced techniques in probability (See Math 391).

We have gained intuition already for the CLT by examining sampling distributions!

We will use the CLT to conduct hypothesis tests and construct confidence intervals without simulation.

- Even when the world is strange and chaotic at the individual level, averages follow clear, predictable rules that we can use to draw conclusions!
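A quick simulation can make the theorem's claim concrete. The sketch below assumes a made-up, strongly right-skewed population (Exponential with mean 20, so \(\mu = 20\) and \(\sigma = 20\)) and compares simulated sample means against the CLT's prediction. The population and all variable names are illustrative assumptions.

```r
set.seed(2026)

# HYPOTHETICAL population: Exponential with mean 20, so mu = 20 and sigma = 20.
mu <- 20
sigma <- 20
n <- 50

# 10,000 sample means, each computed from a fresh sample of size n.
xbar <- replicate(10000, mean(rexp(n, rate = 1 / mu)))

# CLT prediction: xbar is approximately Normal(mu, sigma / sqrt(n)).
clt_sd <- sigma / sqrt(n)

c(simulated_mean = mean(xbar), clt_mean = mu)
c(simulated_sd = sd(xbar), clt_sd = clt_sd)

# Compare a tail probability: simulated share of sample means above 25
# versus the Normal approximation from the CLT.
mean(xbar > 25)
1 - pnorm(25, mean = mu, sd = clt_sd)
```

The two tail probabilities should be close but not identical: the CLT is an approximation, and it improves as \(n\) grows.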

The CLT is a BIG deal!

- When conducting inference, our statistic is often a sample mean

- A proportion is a sample mean of sorts too!

- For nearly any population distribution, the sample mean is approximately Normal when \(n\) is large

- The CLT gives formulas for the mean and standard deviation of a sample mean: \(\mu\) and \(\sigma/\sqrt{n}\).

- We can estimate these using our data

- We can quickly approximate what the sampling distribution looks like!
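To see why a proportion counts as a sample mean, code each survey response as 1 ("wants more bike lanes") or 0 (does not). The sketch below reuses the numbers from the Portland survey activity. It uses the fact that a 0/1 variable with success probability \(p\) has standard deviation \(\sqrt{p(1-p)}\). The variable names are illustrative.

```r
set.seed(10)
p <- 0.4
n <- 50

# One simulated survey: 1 = wants more bike lanes, 0 = does not.
responses <- rbinom(n, size = 1, prob = p)

# The sample proportion is just the mean of the 0/1 responses.
p_hat <- mean(responses)

# A 0/1 variable has mean p and SD sqrt(p(1 - p)), so the CLT says
# p_hat is approximately Normal(p, sqrt(p(1 - p) / n)).
clt_sd <- sqrt(p * (1 - p) / n)

# P(p_hat >= 0.6): Normal approximation versus the exact Binomial answer.
approx_prob <- 1 - pnorm(0.6, mean = p, sd = clt_sd)
exact_prob <- 1 - pbinom(29, size = n, prob = p)

c(normal_approx = approx_prob, exact_binomial = exact_prob)
```

Both probabilities are small, though they do not match exactly. The Normal approximation tends to be rougher far out in the tails.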

Next week:

- We’ll use the approximate distribution of the sample statistic (given by the CLT) to construct confidence intervals and to conduct hypothesis tests!