P-Value Pitfalls

Megan Ayers

Math 141 | Spring 2026

Friday, Week 8

Reminders

- Assignment deadlines will resume per usual after the break

- Lab from yesterday: Due Tuesday March 31st

- Midterm revisions: Due Wednesday April 1st

- HW 7: Due Friday April 3rd

Goals for today

A hearty p-values discussion

Zoom out on statistical inference so far

Motivate theory-based inference

Let’s Talk About P-values

The original intention of the p-value was as an informal measure to judge whether or not a researcher should take a second look.

But to create simple statistical manuals for practitioners, this informal measure quickly became a rule: “p-value < 0.05” = “statistically significant”.

What were/are the consequences of the “p-value < 0.05” = “statistically significant” rule?

Let’s Talk About P-values

- A consequence: The p-value is often misinterpreted as the probability that the null hypothesis is true.

- A p-value of 0.003 does not mean there’s a 0.3% chance that ESP doesn’t exist!

- Because people were handed a simple rule, many never learned what the p-value actually measures.

Let’s Talk About P-values

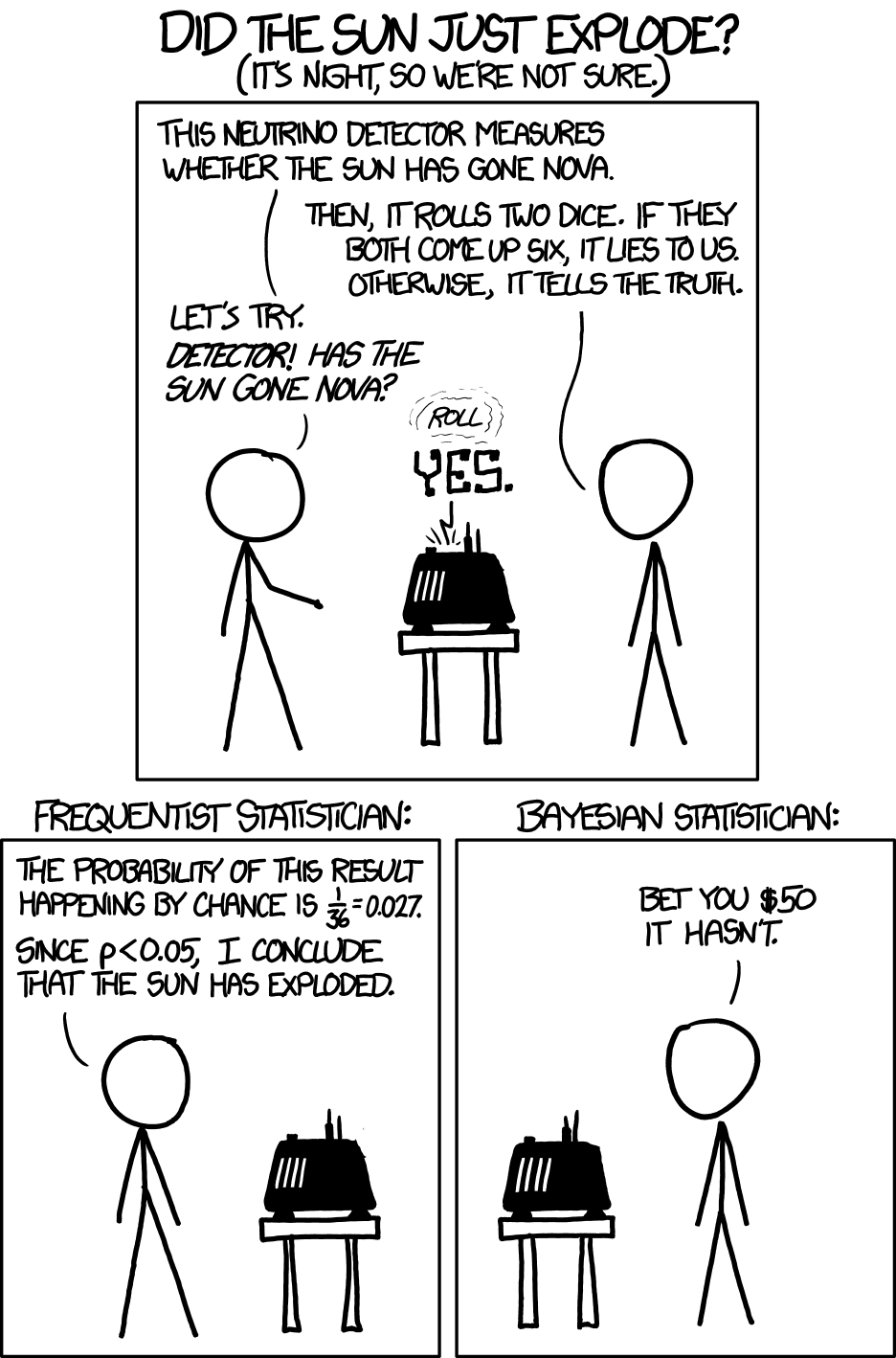

A consequence: Researchers often put too much weight on the p-value and not enough weight on their domain knowledge/the plausibility of their conjecture.

Read and discuss this xkcd comic with your neighbors

Let’s Talk About P-values

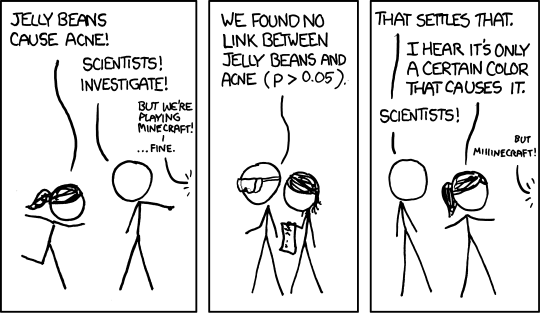

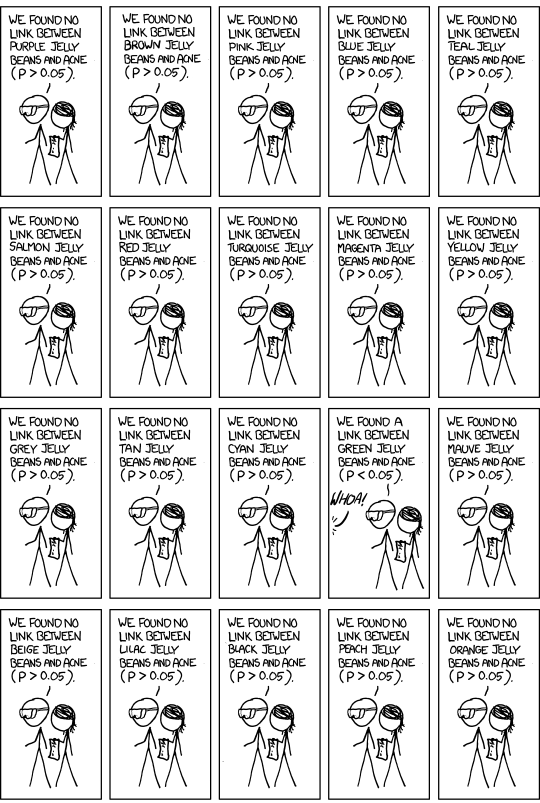

A consequence: P-hacking, that is, cherry-picking promising findings whose p-values fall below this arbitrary threshold.

- Q: If we run 100 hypothesis tests where all null hypotheses are true, how many do we expect to have a P-value less than 0.05 due to chance?

- This is also known as the multiple comparisons problem
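A minimal simulation sketch of the question above, using only the Python standard library. It assumes each test is a two-sided z-test of \(H_0: \mu = 0\) on 30 draws from a standard normal distribution, so every null hypothesis is true by construction. The function names, sample size, and seeds are illustrative choices, not part of the course materials.

```python
import random
from statistics import NormalDist


def null_p_value(rng, n=30):
    """Two-sided z-test p-value for H0: mu = 0 when H0 is actually true."""
    sample_mean = sum(rng.gauss(0, 1) for _ in range(n)) / n
    z = sample_mean * n ** 0.5  # known sd = 1, so the standard error is 1 / sqrt(n)
    return 2 * (1 - NormalDist().cdf(abs(z)))


def count_false_positives(num_tests=100, alpha=0.05, seed=141):
    """Run num_tests tests under true nulls and count p-values below alpha."""
    rng = random.Random(seed)
    return sum(null_p_value(rng) < alpha for _ in range(num_tests))


def average_false_positives(num_studies=200, num_tests=100, alpha=0.05):
    """Average the false-positive count over many simulated 100-test studies."""
    counts = [count_false_positives(num_tests, alpha, seed=s) for s in range(num_studies)]
    return sum(counts) / num_studies


expected = 100 * 0.05                 # expected number of false positives
at_least_one = 1 - (1 - 0.05) ** 100  # chance of at least one, if the tests are independent

print("Expected false positives:", expected)
print("Simulated average:", average_false_positives())
print("P(at least one false positive):", round(at_least_one, 3))
```

Under these assumptions we expect about 5 of the 100 tests to come out "significant" by chance alone, and with independent tests at least one false positive is nearly guaranteed.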

Where does p-hacking come from?

- Can be an unintentional consequence of data analysis’s “garden of forking paths”

- Also partly a byproduct of publication bias/incentives in academia

Moving forward from p-hacking

Distinguish the purpose of your analysis: confirmatory vs exploratory

- Confirmatory analyses: Pre-register your hypotheses and planned analysis before conducting your study

- Exploratory analyses: Less pressure on “statistical significance”, more focus on preliminary evidence that requires a confirmatory follow-up

Always present statistical context behind your conclusions: confidence intervals, p-values, analysis decisions, and assumptions

Multiple testing corrections

- Informally, these inflate your p-values to account for performing many tests

- Ex. If I perform \(n\) hypothesis tests, I change my rejection threshold to \(\alpha / n\) (Bonferroni correction)
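The Bonferroni rule above can be sketched in a few lines of Python. The p-values below are made up for illustration, and the helper names are hypothetical. The sketch shows both views of the correction: shrinking the threshold to \(\alpha / n\), or equivalently inflating each p-value by a factor of \(n\) (capped at 1) and comparing it to the original \(\alpha\).

```python
def bonferroni_threshold(alpha, num_tests):
    """Rejection threshold after a Bonferroni correction."""
    return alpha / num_tests


def bonferroni_reject(p_values, alpha=0.05):
    """Reject each null whose p-value falls below alpha / n."""
    threshold = bonferroni_threshold(alpha, len(p_values))
    return [p < threshold for p in p_values]


def bonferroni_adjust(p_values):
    """Equivalent view: inflate each p-value by n, capped at 1."""
    n = len(p_values)
    return [min(1.0, p * n) for p in p_values]


# Made-up p-values from five hypothetical tests
p_values = [0.001, 0.012, 0.030, 0.047, 0.200]

print("Uncorrected rejections:", [p < 0.05 for p in p_values])
print("Bonferroni rejections: ", bonferroni_reject(p_values))
print("Adjusted p-values:     ", bonferroni_adjust(p_values))
```

With these illustrative numbers, four tests pass the uncorrected 0.05 threshold, but only the first survives the corrected threshold of 0.01.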

Let’s Talk About P-values

- Another consequence of p-values: People conflate statistical significance with practical significance.

Example: A Nature study of 19,000+ recently married people found that those who meet their spouses online…

- Are less likely to divorce (p-value < 0.002)

- Are more likely to have high marital satisfaction (p-value < 0.001)

BUT the estimated effect sizes were tiny.

- Divorce rate of 5.96% for those who met online versus 7.67% for those who met in-person.

- On a 7 point scale, happiness value of 5.64 for those who met online versus 5.48 for those who met in-person.

Q: Do these results provide compelling evidence that one should change their dating behavior?
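One way to see why a tiny effect can still earn a tiny p-value is to hold the effect fixed and vary the sample size. The sketch below applies a standard two-proportion z-test to the two divorce rates quoted above. The group sizes (200 and 9,500 per group) are hypothetical and chosen only for illustration, since the slides do not give the study's group sizes or which test it used.

```python
import math
from statistics import NormalDist


def two_proportion_p_value(p1, n1, p2, n2):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


online, in_person = 0.0596, 0.0767  # divorce rates from the slide
difference = in_person - online     # the effect size: 1.71 percentage points

# Hypothetical group sizes: same effect, very different p-values
p_small_study = two_proportion_p_value(online, 200, in_person, 200)
p_large_study = two_proportion_p_value(online, 9500, in_person, 9500)

print("Difference in divorce rates:", round(difference, 4))
print("p-value with 200 per group: ", round(p_small_study, 3))
print("p-value with 9500 per group:", p_large_study)
```

The difference in rates is identical in both runs. Only the sample size changes, which is why the p-value by itself says little about practical significance.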

Let’s Talk About P-values

The American Statistical Association created a set of principles to address misconceptions and misuse of p-values:

P-values can indicate how incompatible the data are with a specified statistical model.

P-values do not measure the probability that the studied hypothesis is true.

Scientific conclusions and business or policy decisions should not be based only on whether or not a p-value passes a specific threshold (e.g., 0.05).

Proper inference requires full reporting and transparency.

A p-value, or statistical significance, does not measure the size of an effect or the importance of a result.

By itself, a p-value does not provide a good measure of evidence regarding a model or hypothesis.

Let’s Talk About P-values

Despite their issues, p-values are still quite popular and can be a useful tool when used properly.

In 2014, George Cobb, a professor at Mount Holyoke College, posed the following questions (and answers):

- We can break the cycle!

Math 141 & P-Values

Understanding p-values and being able to interpret a p-value in context is a learning objective of Math 141.

- Ex: If ESP doesn’t exist, the probability of guessing correctly on at least 106 out of 329 trials is 0.003.

Understanding that a small p-value means we have some evidence for \(H_a\) is important.

- Ex: Because the p-value is small, we have evidence for ESP.

Understanding that a small p-value alone does not imply practical significance.

- Create a confidence interval to contextualize the effect size!

Understanding that what you mean by small should depend on your field and whether a Type I Error or Type II Error is worse for your particular research question.

Your ability to tell whether a number is less than 0.05 is not a learning objective for Math 141.

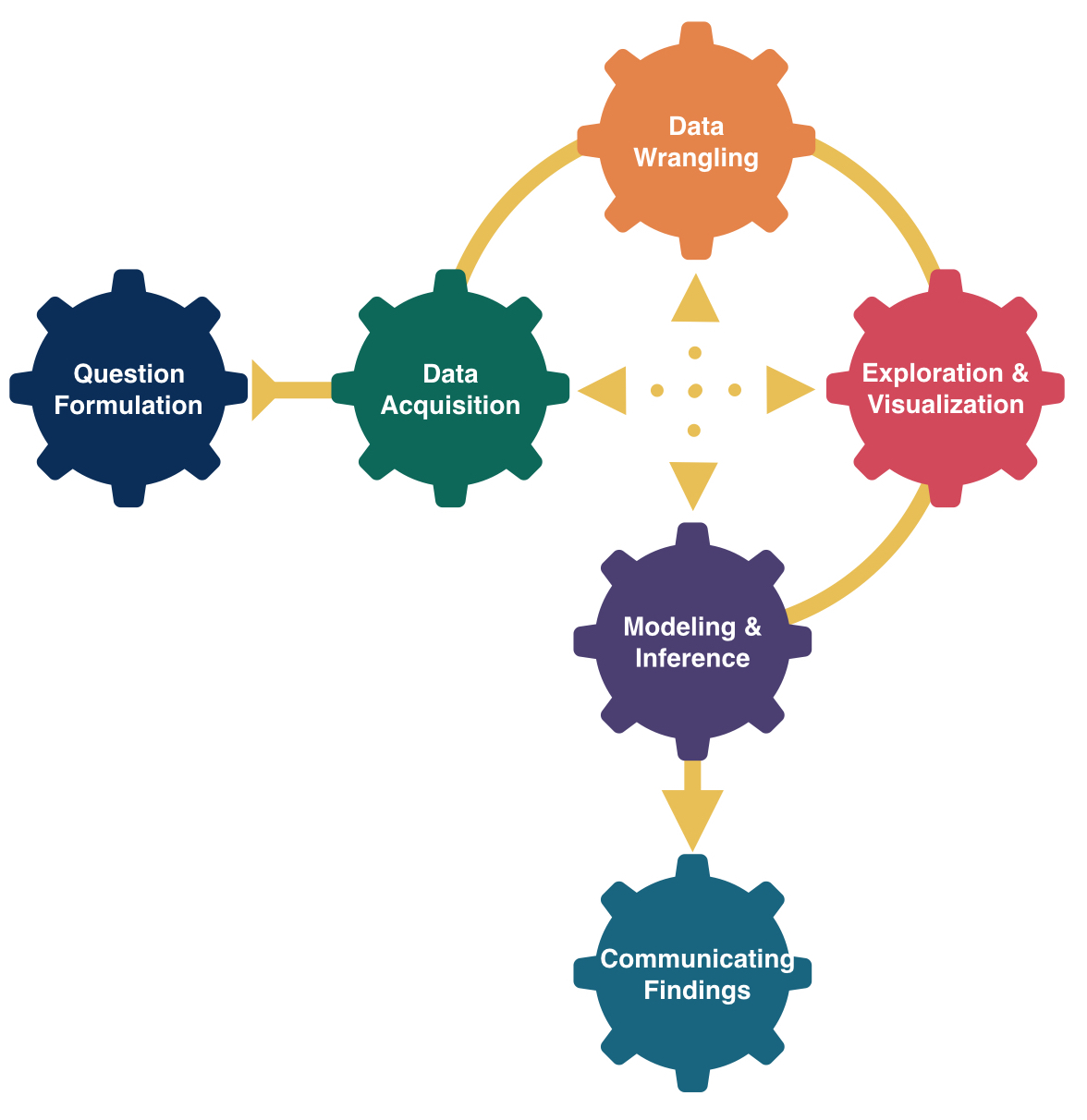

Inference: The Big Picture

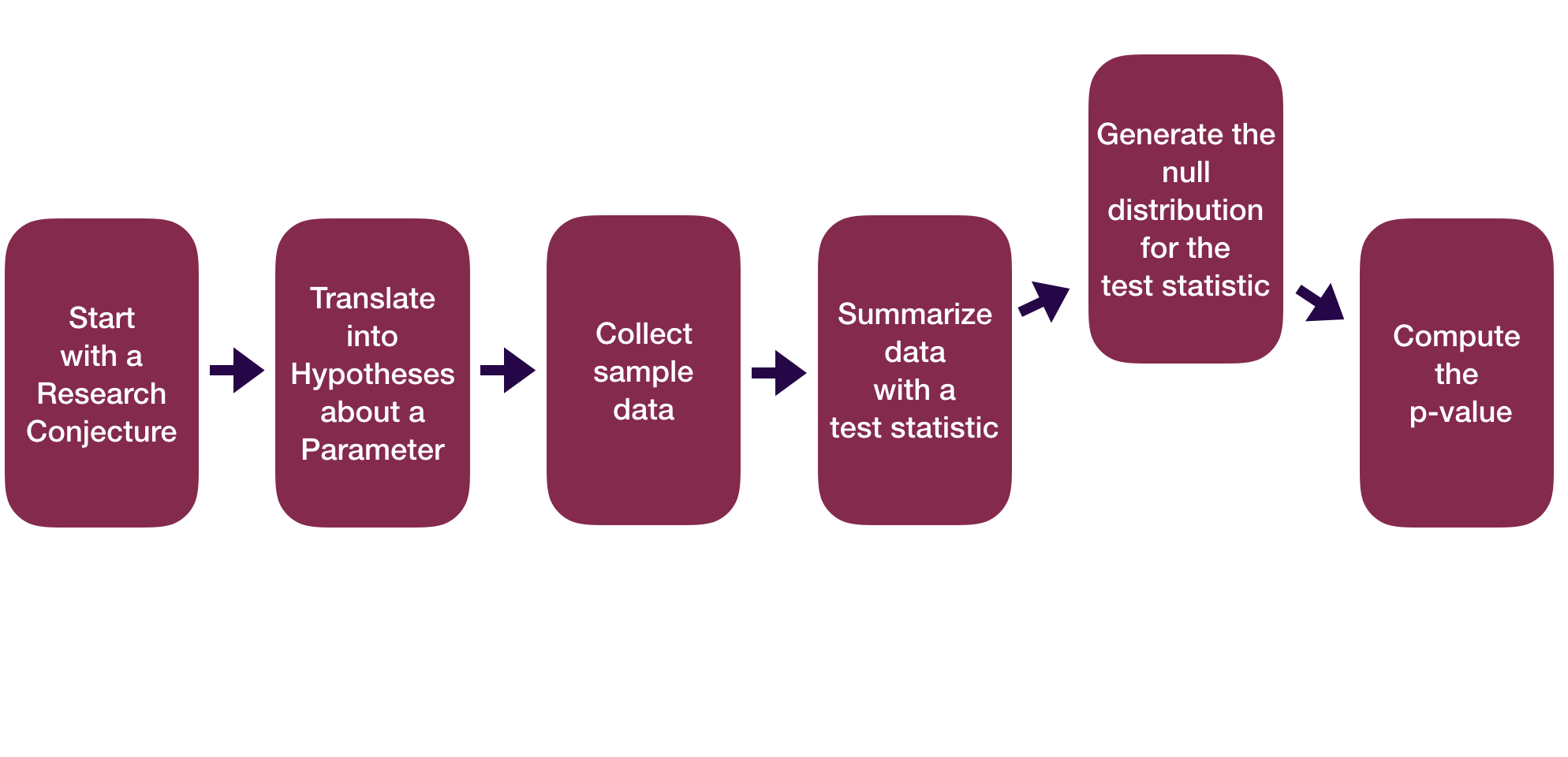

Inference: The Big Picture

Statistical inference is the process of drawing conclusions about a population based on sample data.

Q: How do point estimates and confidence intervals help us make inferences?

Q: How does the hypothesis testing framework help us make inferences?

Inference: The Big Picture

Working with samples provides a window into the population, but we can’t see it all

Overarching goal: distinguish between results that arise due to chance vs results that reflect a real, systematic pattern in the population

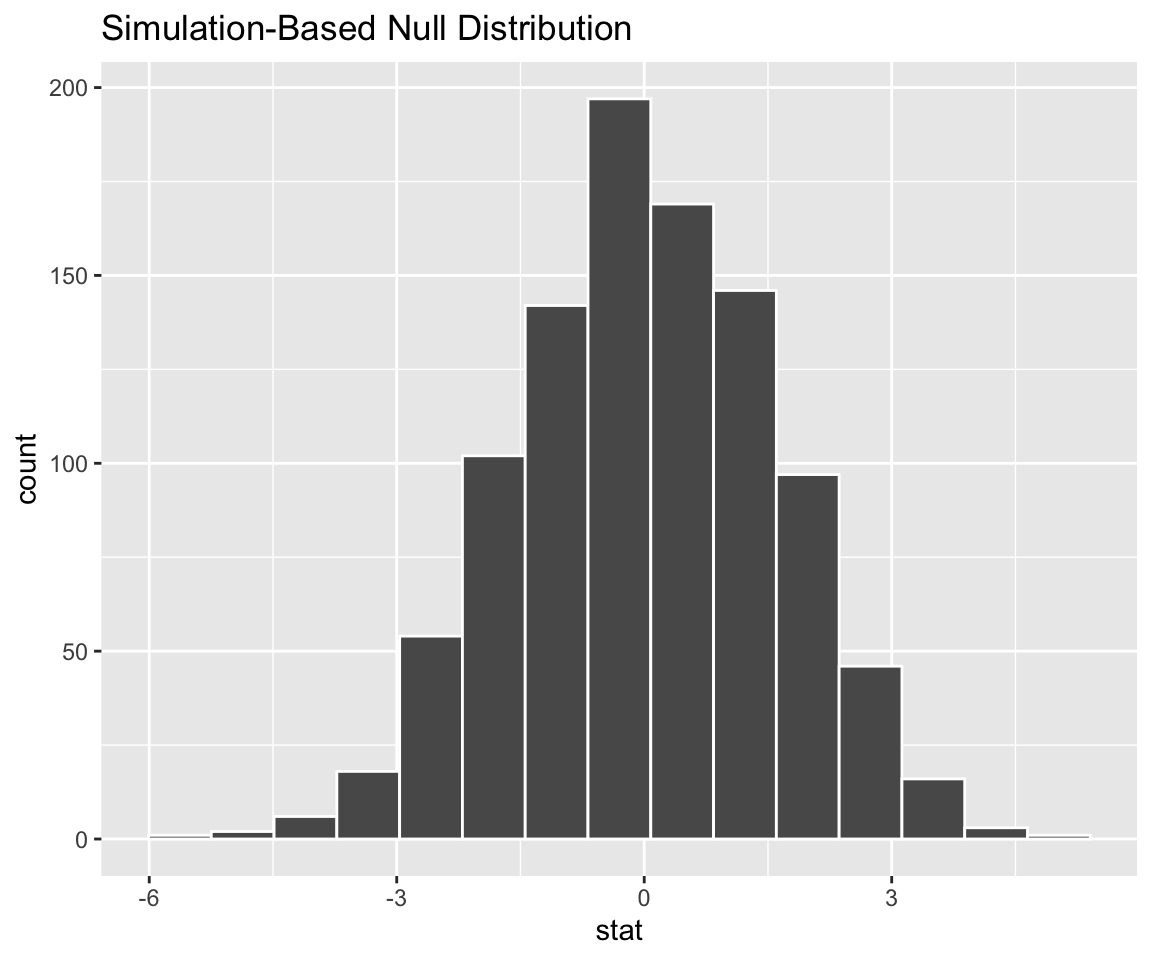

This led us to focus on sampling distributions, which we approximate using bootstrap distributions and resampling methods

We used these to accomplish two tasks:

- Generate point + interval estimates: Our best guess + range of plausible values for a parameter

- Perform hypothesis tests: A scientific method for maintaining or rejecting a null hypothesis in favor of an alternative hypothesis
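As a refresher on the first task, here is a minimal sketch of a bootstrap percentile interval for a mean, written with the Python standard library. The data are made up, and the function names, number of resamples, and seed are illustrative assumptions rather than the course's own code.

```python
import random
from statistics import mean


def bootstrap_means(sample, reps=2000, seed=141):
    """Resample with replacement and record the mean of each resample."""
    rng = random.Random(seed)
    n = len(sample)
    return [mean(rng.choices(sample, k=n)) for _ in range(reps)]


def percentile_interval(values, level=0.95):
    """Middle `level` share of the bootstrap distribution."""
    ordered = sorted(values)
    tail = (1 - level) / 2
    low_index = round(tail * (len(ordered) - 1))
    high_index = round((1 - tail) * (len(ordered) - 1))
    return ordered[low_index], ordered[high_index]


# Made-up sample of 12 measurements
sample = [4.1, 5.3, 3.8, 6.0, 5.5, 4.7, 5.1, 4.9, 6.2, 3.9, 5.0, 4.4]

point_estimate = mean(sample)
low, high = percentile_interval(bootstrap_means(sample))

print("Point estimate:", round(point_estimate, 3))
print("95% bootstrap percentile interval:", (round(low, 3), round(high, 3)))
```

The spread of the resampled means stands in for the sampling distribution we cannot observe directly.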

Inference: The Big Picture

Point + interval estimates: Our best guess + range of plausible values for a parameter

Hypothesis testing: A scientific method for maintaining or rejecting a null hypothesis in favor of an alternative hypothesis

These two approaches are two sides of the same coin!

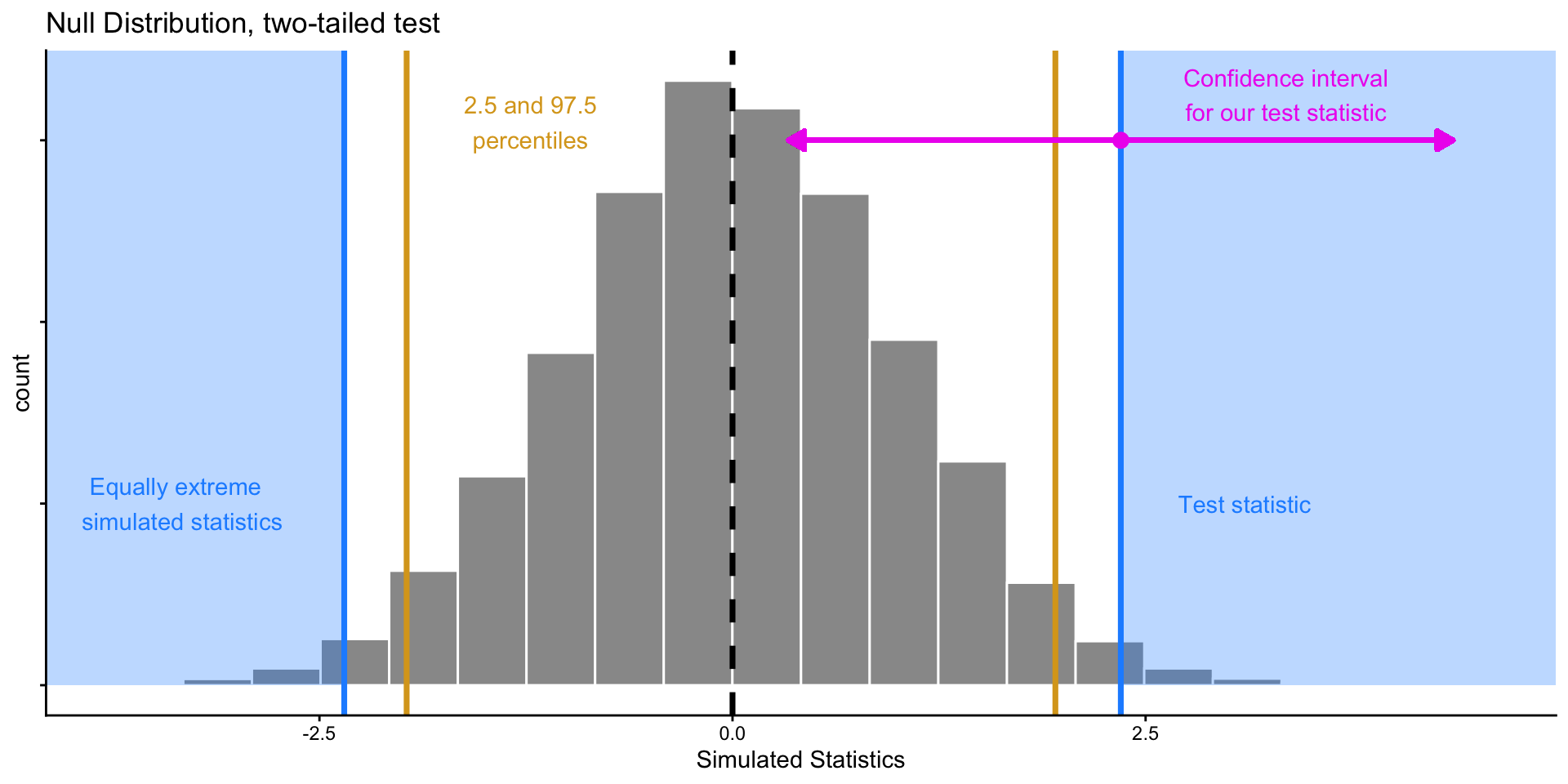

Inference: The Big Picture

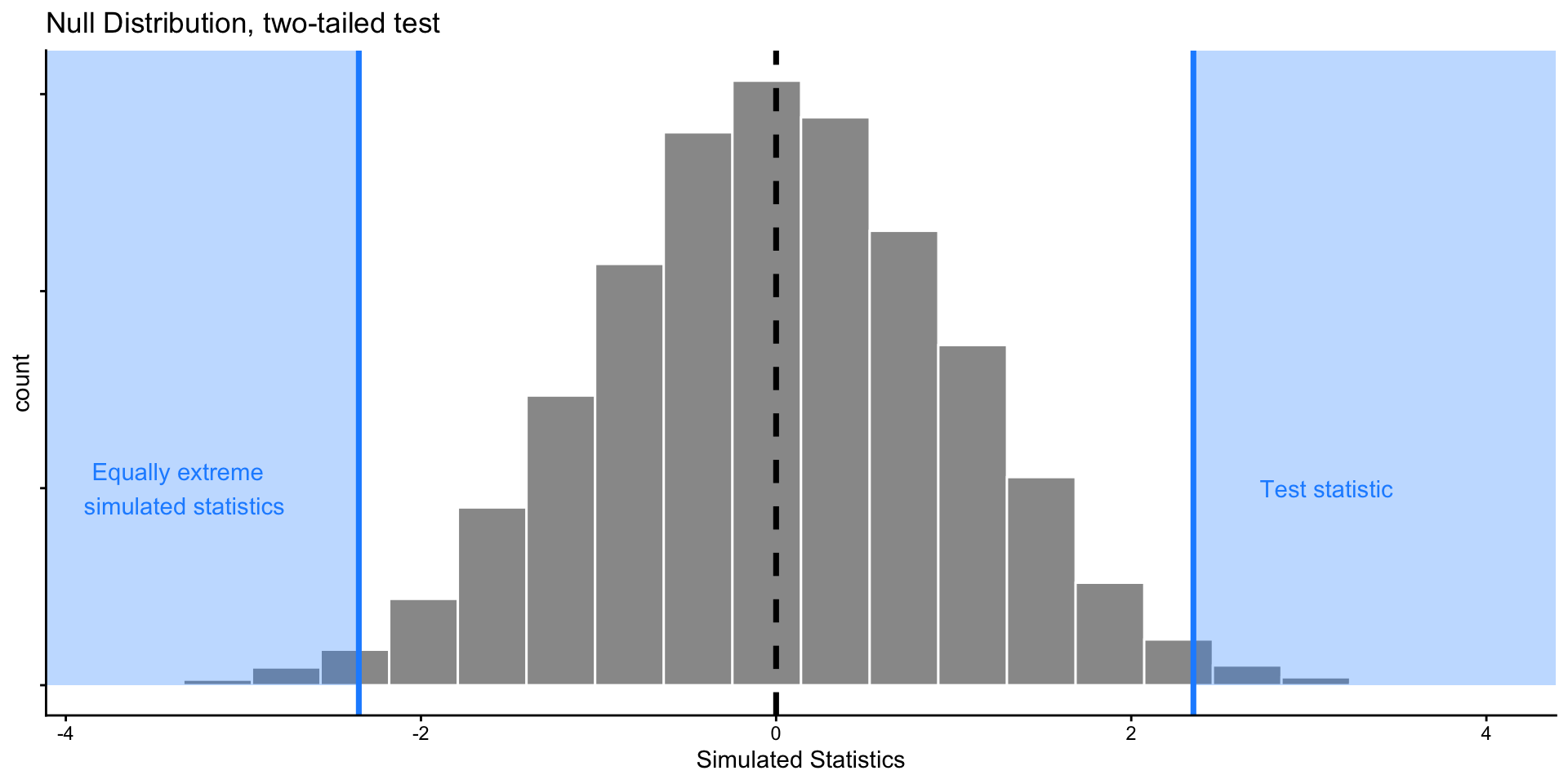

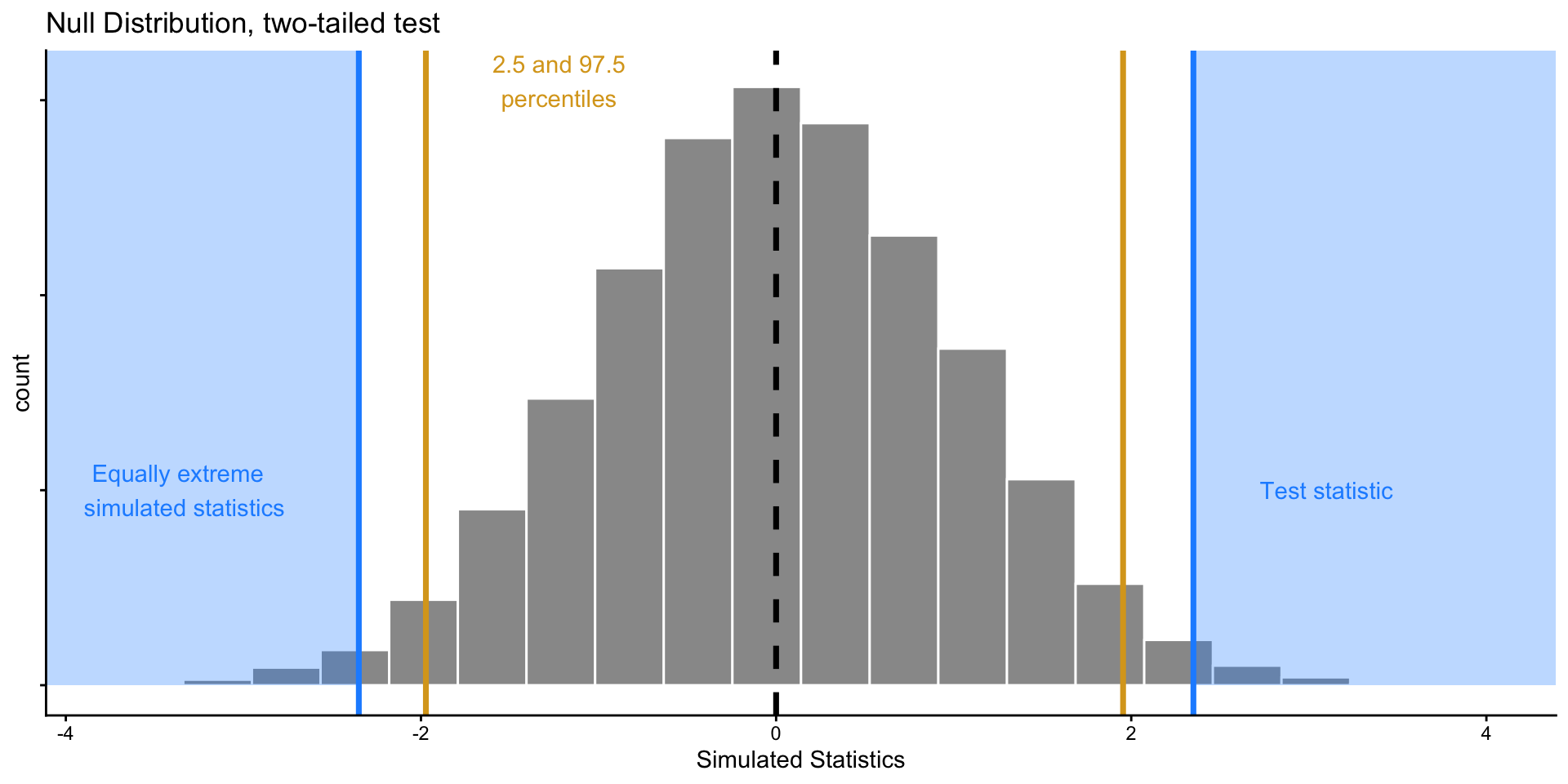

These two approaches are two sides of the same coin!

Suppose we have \(H_0: \mu = 0\), and observe a test statistic

Performing a two-sided hypothesis test with \(\alpha = 0.05\) is equivalent to computing a 95% confidence interval (CI) from our data and checking whether 0 falls inside it: we reject \(H_0\) exactly when 0 falls outside the CI.

- For any value \(x_{in}\) inside the CI, we would not reject the null hypothesis \(H_0: \mu = x_{in}\) given our data.

- For any value \(x_{out}\) outside the CI, we would reject the null hypothesis \(H_0: \mu = x_{out}\).
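The sketch below checks this equivalence numerically. As an assumption for illustration, it uses a normal-approximation (z) interval and z-test that share the same standard error, because the equivalence is exact in that setting; with the bootstrap and resampling methods used so far, the agreement is approximate. The data and function names are made up.

```python
import math
from statistics import NormalDist, mean, stdev


def z_interval(sample, level=0.95):
    """Normal-approximation confidence interval for the mean."""
    se = stdev(sample) / math.sqrt(len(sample))
    z_star = NormalDist().inv_cdf(1 - (1 - level) / 2)
    centre = mean(sample)
    return centre - z_star * se, centre + z_star * se


def z_test_p_value(sample, mu0):
    """Two-sided p-value for H0: mu = mu0, using the same standard error."""
    se = stdev(sample) / math.sqrt(len(sample))
    z = (mean(sample) - mu0) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Made-up data
sample = [0.8, -0.3, 1.9, 0.4, 1.1, 0.6, -0.2, 1.4, 0.9, 0.3]
low, high = z_interval(sample)

for mu0 in (0.0, 0.5, 1.0, 2.0):
    inside = low <= mu0 <= high
    rejected = z_test_p_value(sample, mu0) < 0.05
    print(f"mu0 = {mu0}: inside 95% CI = {inside}, rejected at 0.05 = {rejected}")
```

For every null value tried, "inside the interval" and "not rejected" line up, which is the sense in which the two approaches are two sides of the same coin.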

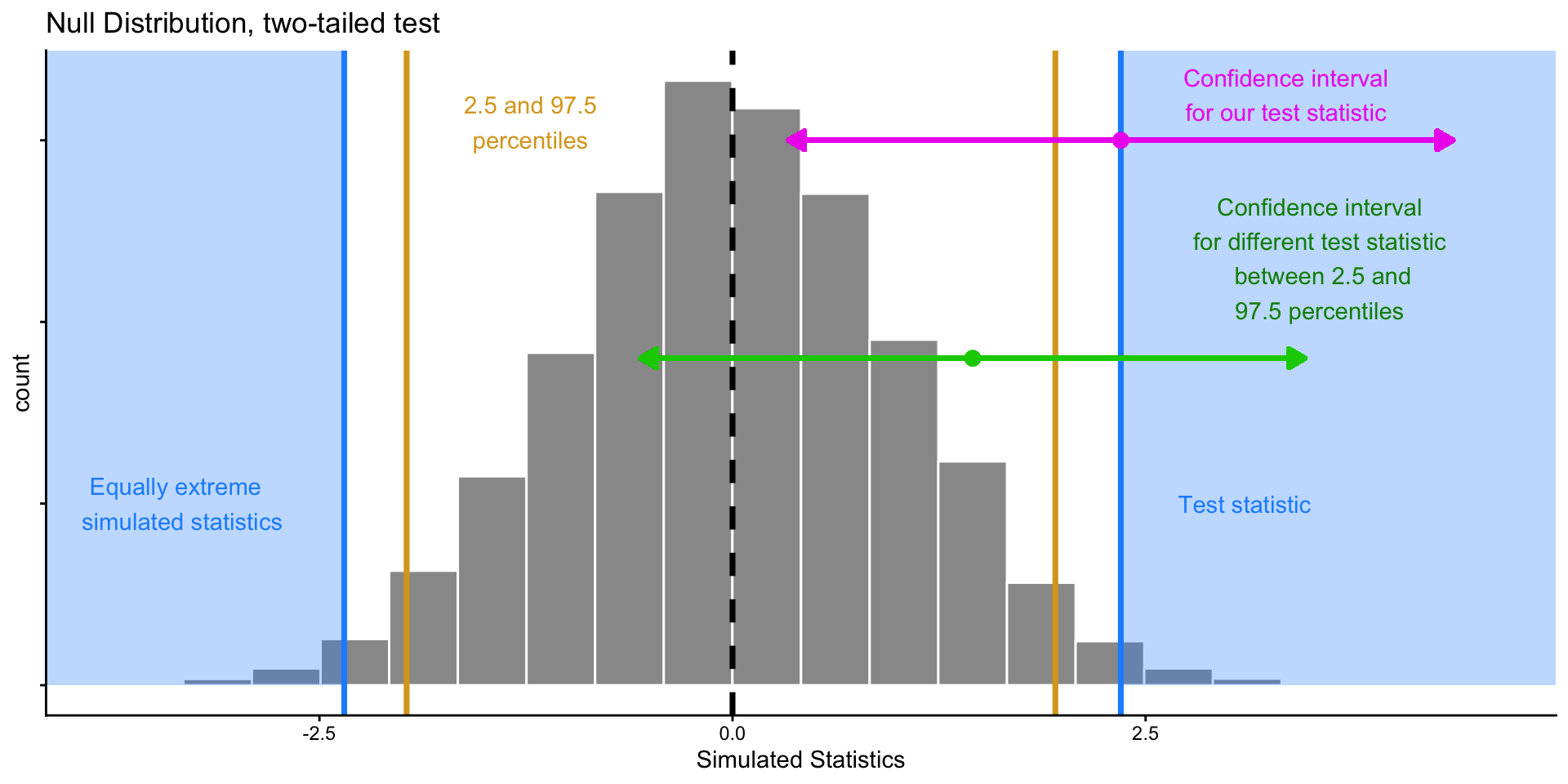

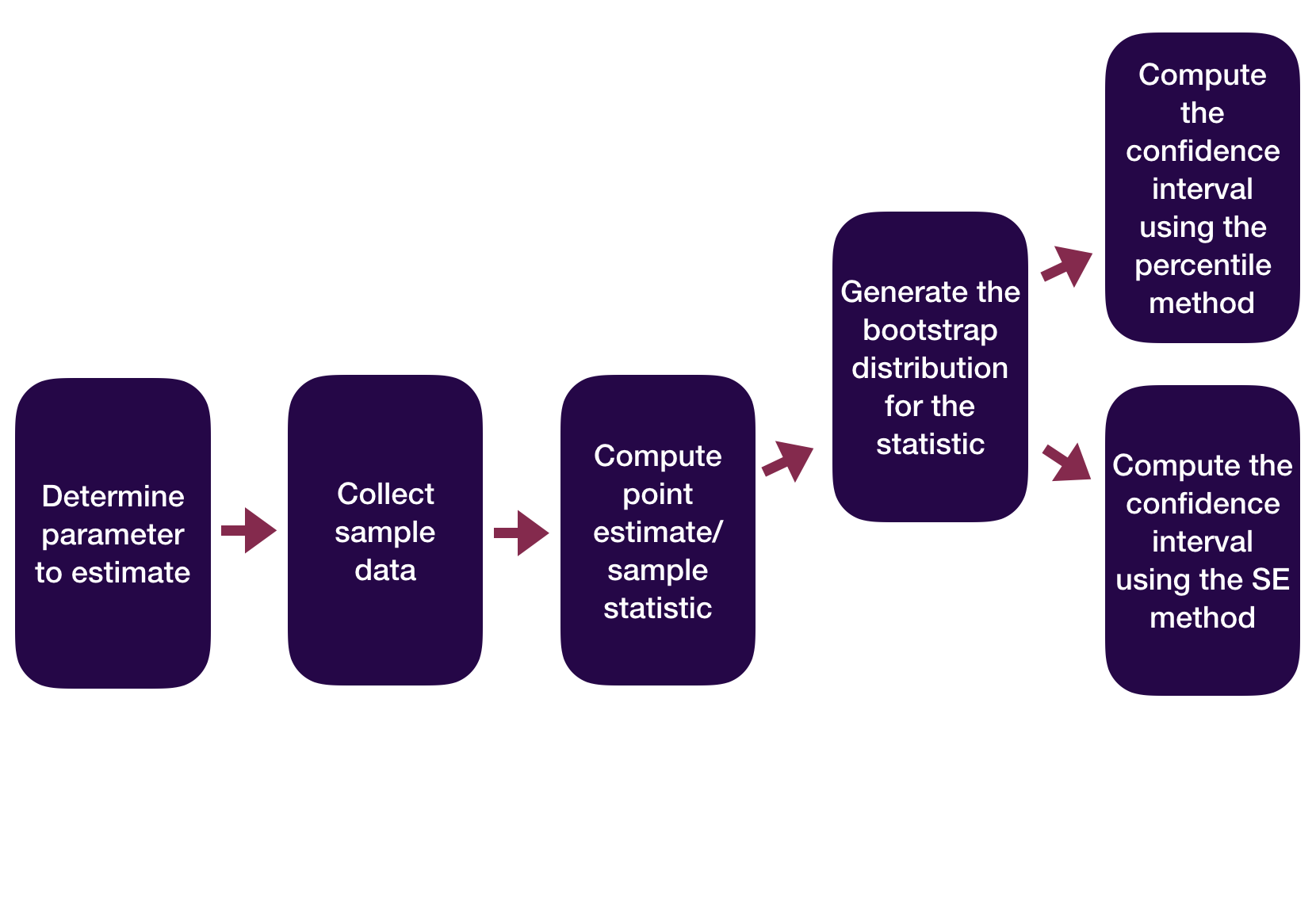

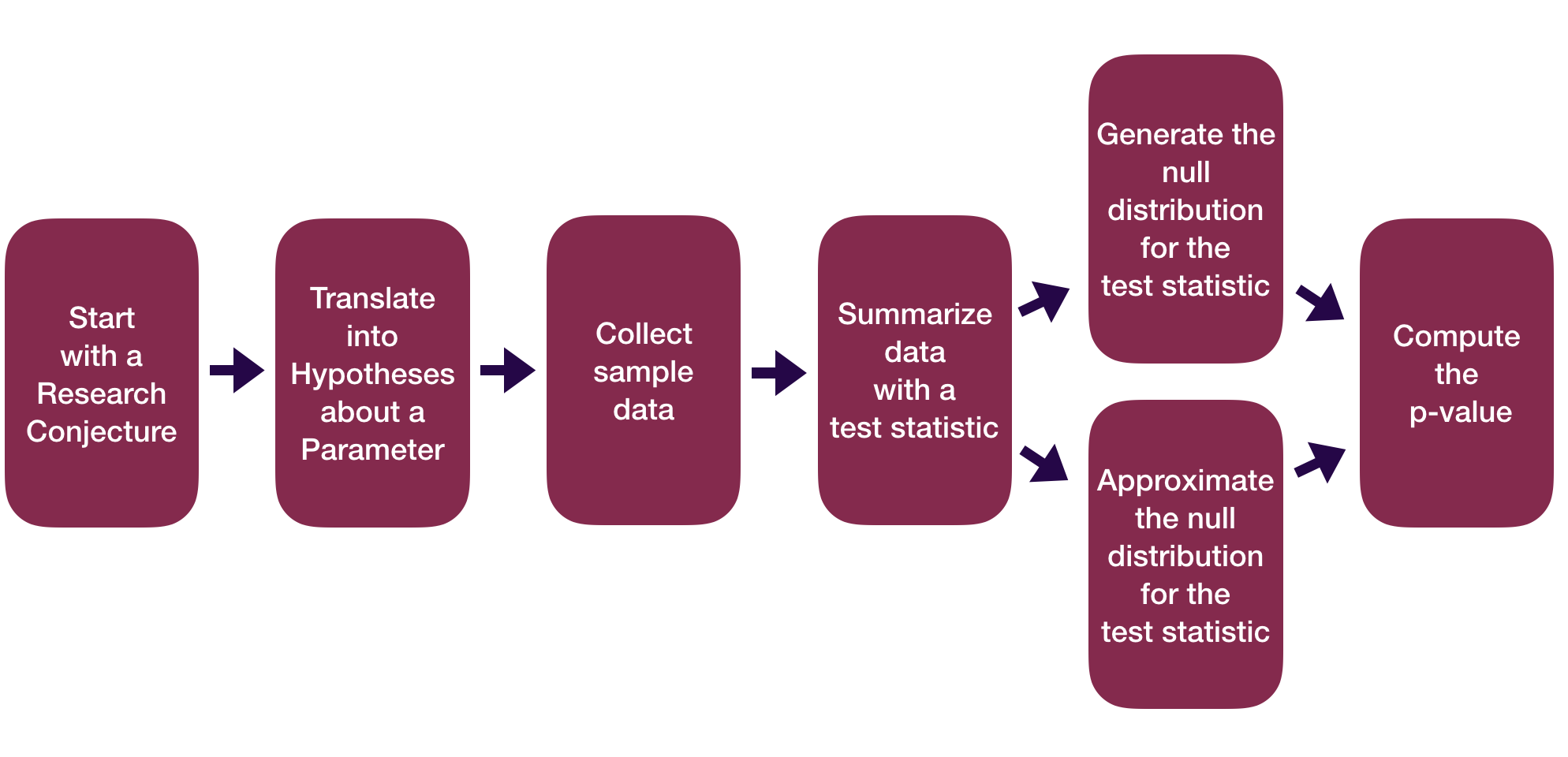

Statistical Inference Zoom Out – Estimation

Question: How did folks do inference before computers?

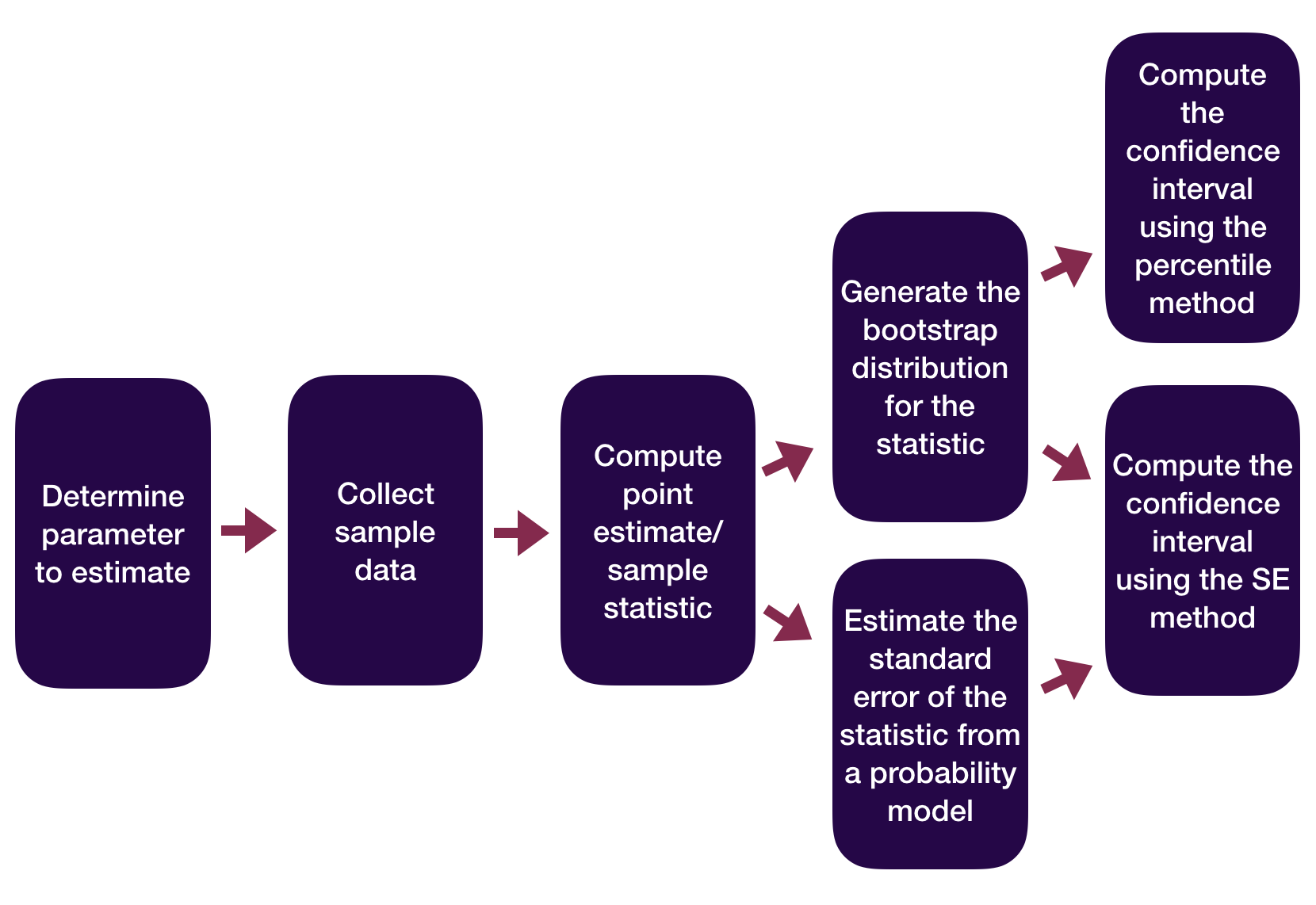

Statistical Inference Zoom Out – Testing

Question: How did folks do inference before computers?

This means we need to learn about probability models!

Probability Models

- We have computers now! Why do we need to know how to do inference without them?

- It’s important to build literacy for existing and future work that relies on probability models and theory

- Default settings for many programmed statistical analyses make assumptions based on probability theory

- Closed-form results build intuition and formalize ideas in ways that simulation methods alone do not

Probability Models

Motivating question: How can we use theoretical probability models to approximate our (sampling) distributions?

Before we can answer that question and apply the models, we need to learn about the theoretical probability models themselves. We’ll turn to this after spring break!