Hypothesis Testing II

Megan Ayers

Math 141 | Spring 2026

Monday, Week 8

Goals for today

Midterm revisions

Practice framing research questions in terms of hypotheses

Practice defining null distributions

1 vs 2 sided tests

Midterm revisions

If you scored less than 80% on the midterm you have the opportunity to get some points back. Parameters:

- You can get up to 50% of your missed points back (up to an 80% total score on the midterm).

- Example: If you got a 70% on the midterm, you can get up to an 80% with revisions, but if you got a 50% on the midterm you can get up to a 75% with revisions.

- For each question part you can get half the points you missed.

- Example: If you got a 1 / 3 on Question 1 part (a), an entirely correct solution submitted to me on the midterm makeups will increase your score to 2 / 3 on Question 1 part (a).

- The following rule changes now apply:

- You must give a brief explanation of where you went wrong for each revised problem and why your revision fixes this.

- You may consult your notes for the course and any of our internal course material. But outside resources, including friends, peers, the internet, or AI tools, are still prohibited.

- You have no time pressure.

- You can come to my (Megan’s) office hours to discuss questions you are stuck on, but no other office hours or individual tutoring.

- Revision instructions are on the course website.

- Submit paper copies of your midterm revisions to me by Wednesday 4/1 at 5:00pm. No late submissions!

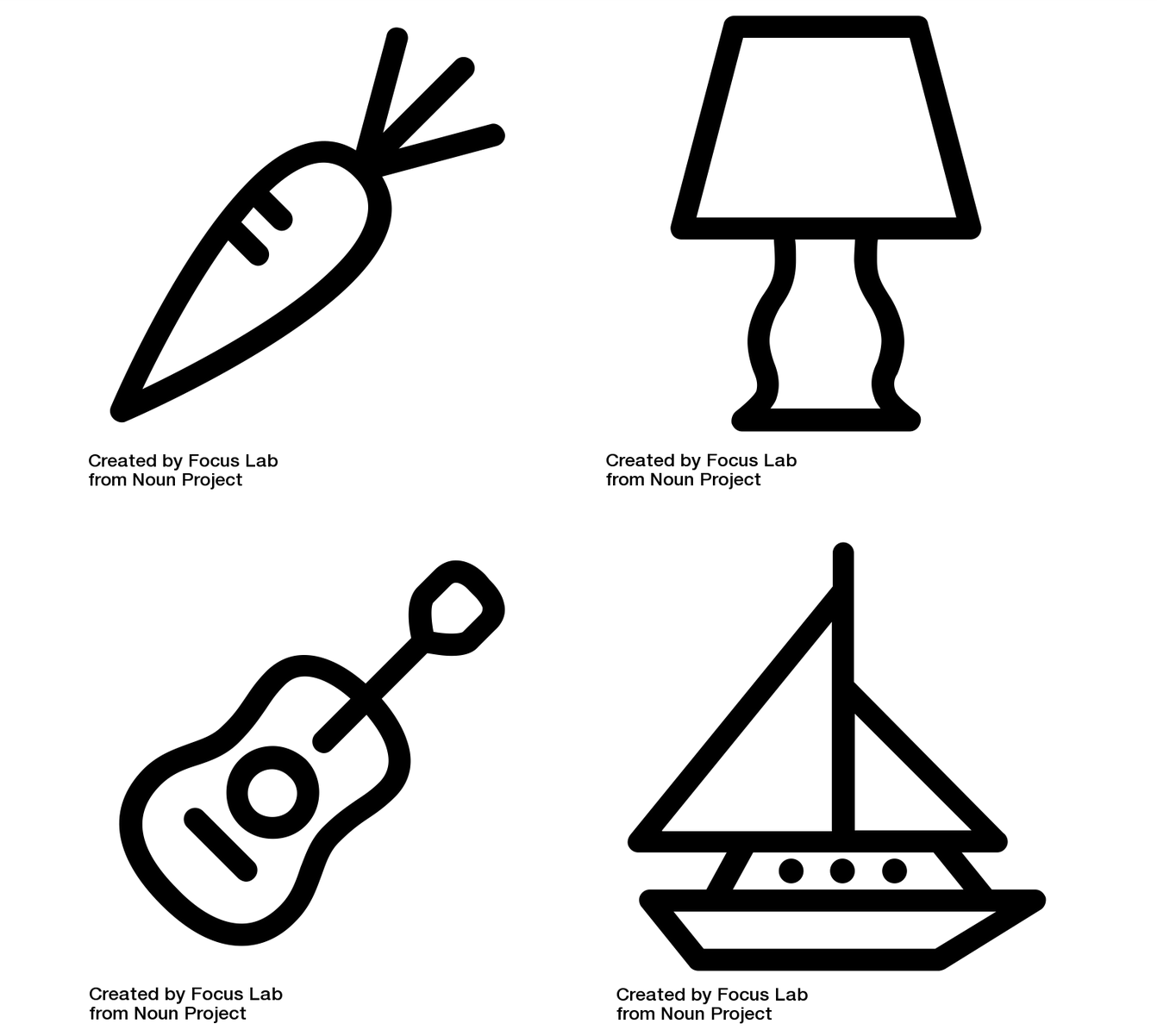

Recap: Framework for Hypothesis Testing

Present research question and identify hypotheses

- Null hypothesis (\(H_0\)): status quo, random chance, no effect…

- Alternative hypothesis (\(H_a\)): the researchers’ conjecture

Describe Null distribution

Obtain data, calculate relevant Test Statistic

Calculate the P-value

- P-value = probability of observing the Test Statistic or something more extreme, assuming the Null Hypothesis is true

Use the P-value to make a conclusion on the research question

Let’s Practice

Example: Does Extrasensory Perception (ESP) exist?

Psychologists Bem and Honorton conducted extrasensory perception studies:

- A “sender” randomly chooses an object out of 4 possible objects and sends that information to a “receiver”.

- The “receiver” is then given a set of 4 possible objects and they must decide which one most resembles the object sent to them.

Out of 329 reported trials, the “receivers” correctly identified the object 106 times.

Hypothesis Testing Steps: ESP


Discuss with neighbor(s):

1. What is the relevant null hypothesis (\(H_0\)) and alternative hypothesis (\(H_a\))?

2. Describe Null distribution. How might we simulate it? (Hint: Recall the card guessing example)

3. What is our relevant Test Statistic?

4. What does the P-value represent here? How might we calculate it, given step 2?

5. How would we use the P-value to make a conclusion on the research question?

Hypothesis Testing Steps: ESP

- What is the relevant null hypothesis (\(H_0\)) and alternative hypothesis (\(H_a\))?

- \(H_0\): Participants were guessing randomly (\(p = 0.25\))

- \(H_a\): Participants were guessing better than random (\(p > 0.25\))

Hypothesis Testing Steps: ESP

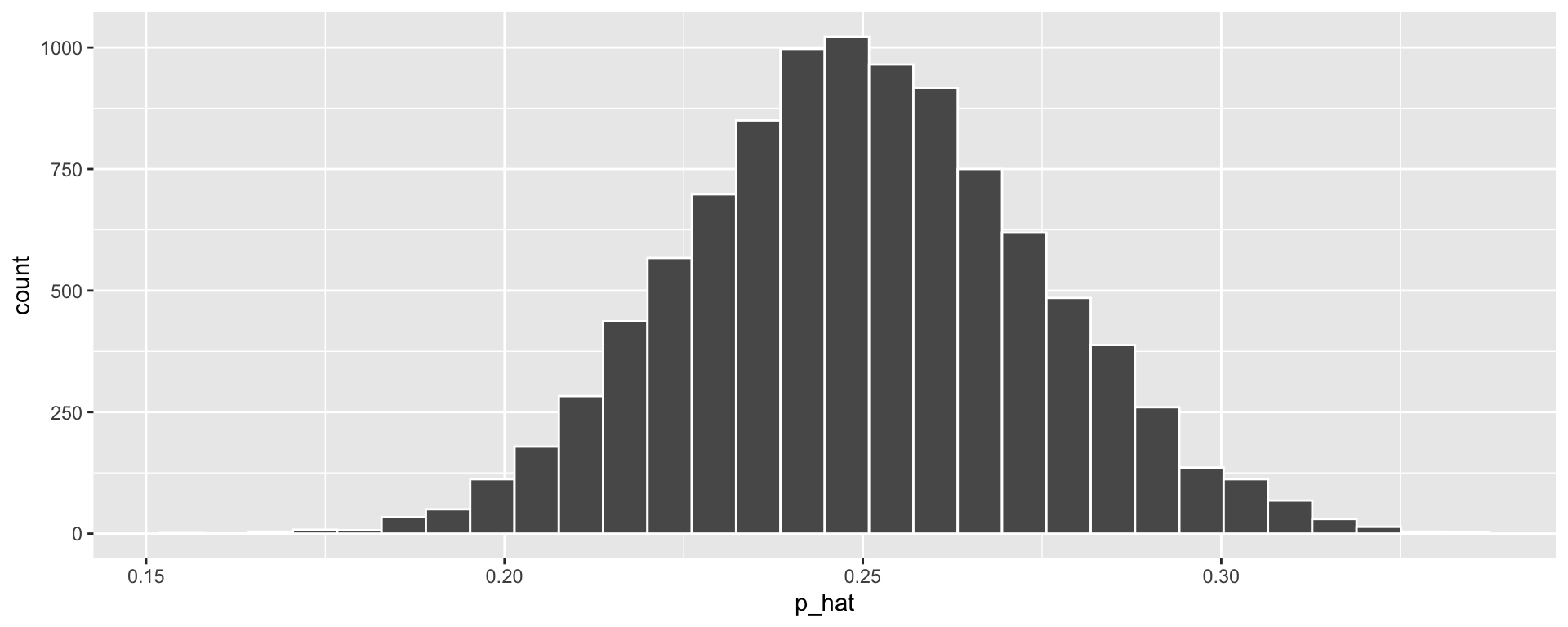

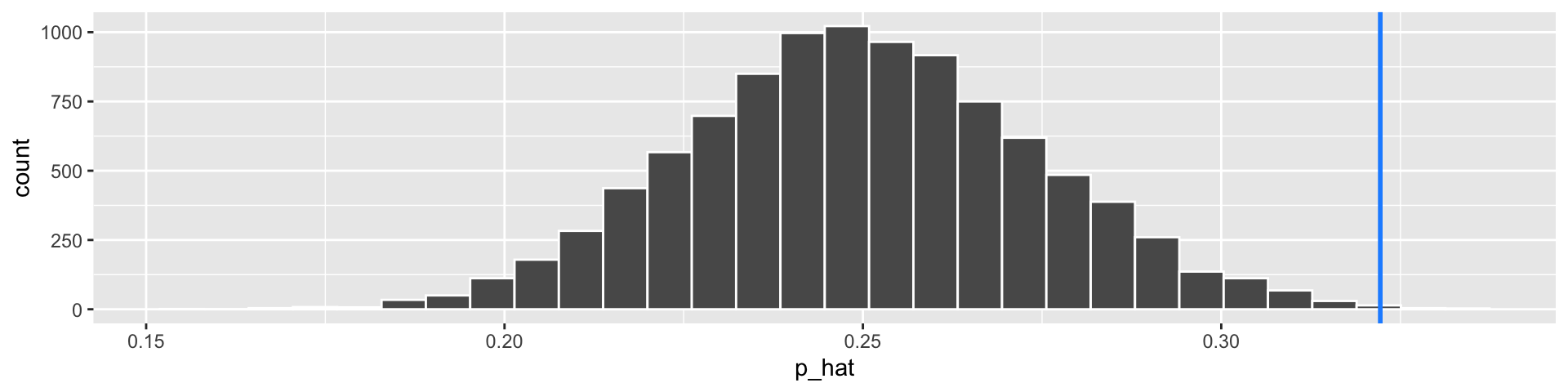

- Describe “Null” distribution. How might we simulate it?

- The distribution of \(\widehat{p}\) (the proportion of correct guesses) we would see if participants guess at random each time.

Steps to simulate this:

1. Sample with replacement from a vector of 0’s and 1’s, where we have a 25% chance of sampling 1 each time

2. Compute the proportion of 1’s sampled

3. Repeat steps 1 and 2 many times.

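```r
library(tidyverse)  # pipes, summarize(), ggplot()
library(infer)      # assumed source of rep_sample_n()

set.seed(123)

# Like a 4-sided die with sides 0, 0, 0, 1: a 25% chance of a correct guess (1)
guesses <- c(0, 0, 0, 1)

# Draw 329 guesses with replacement, 10,000 times, and
# record the proportion of correct guesses in each replicate
null_stats <- data.frame(correct = guesses) %>%
  rep_sample_n(size = 329, replace = TRUE, reps = 10000) %>%
  group_by(replicate) %>%
  summarize(p_hat = mean(correct))

ggplot(null_stats, aes(x = p_hat)) + geom_histogram(color = "white")
```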

Hypothesis Testing Steps: ESP

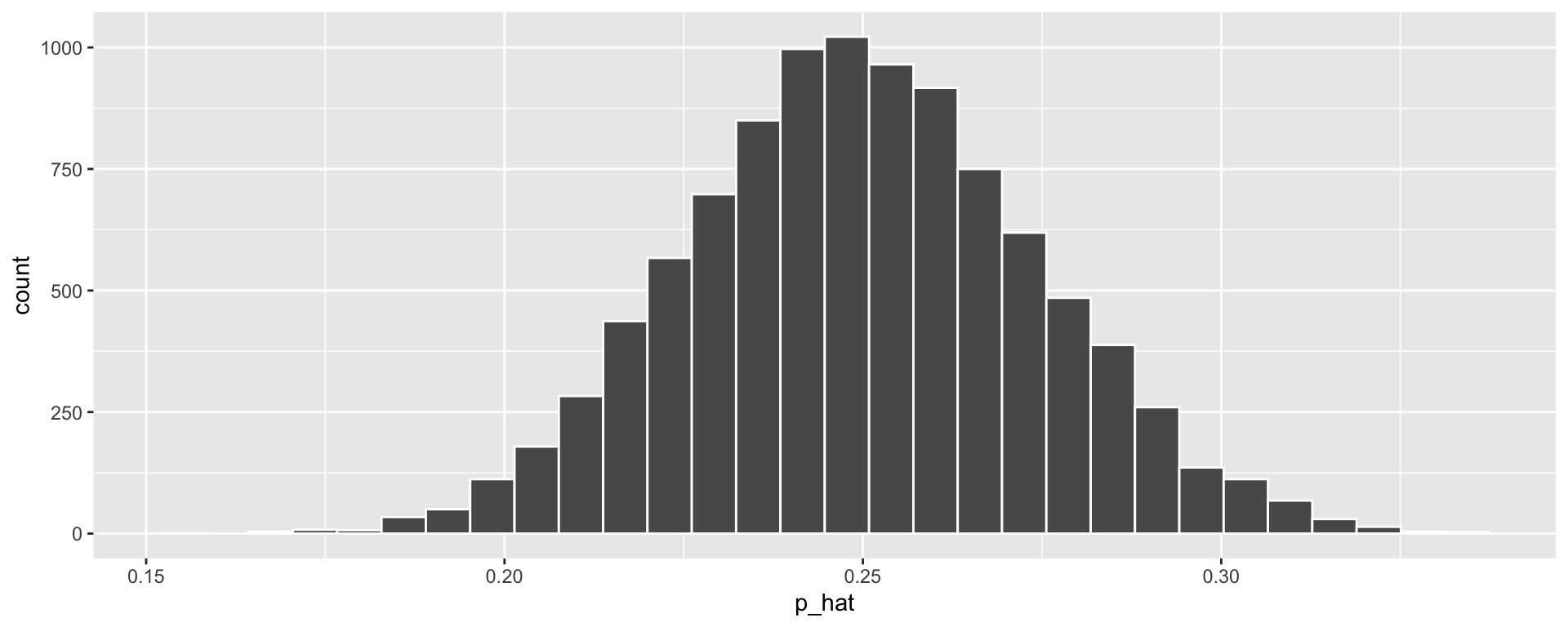


Hypothesis Testing Steps: ESP

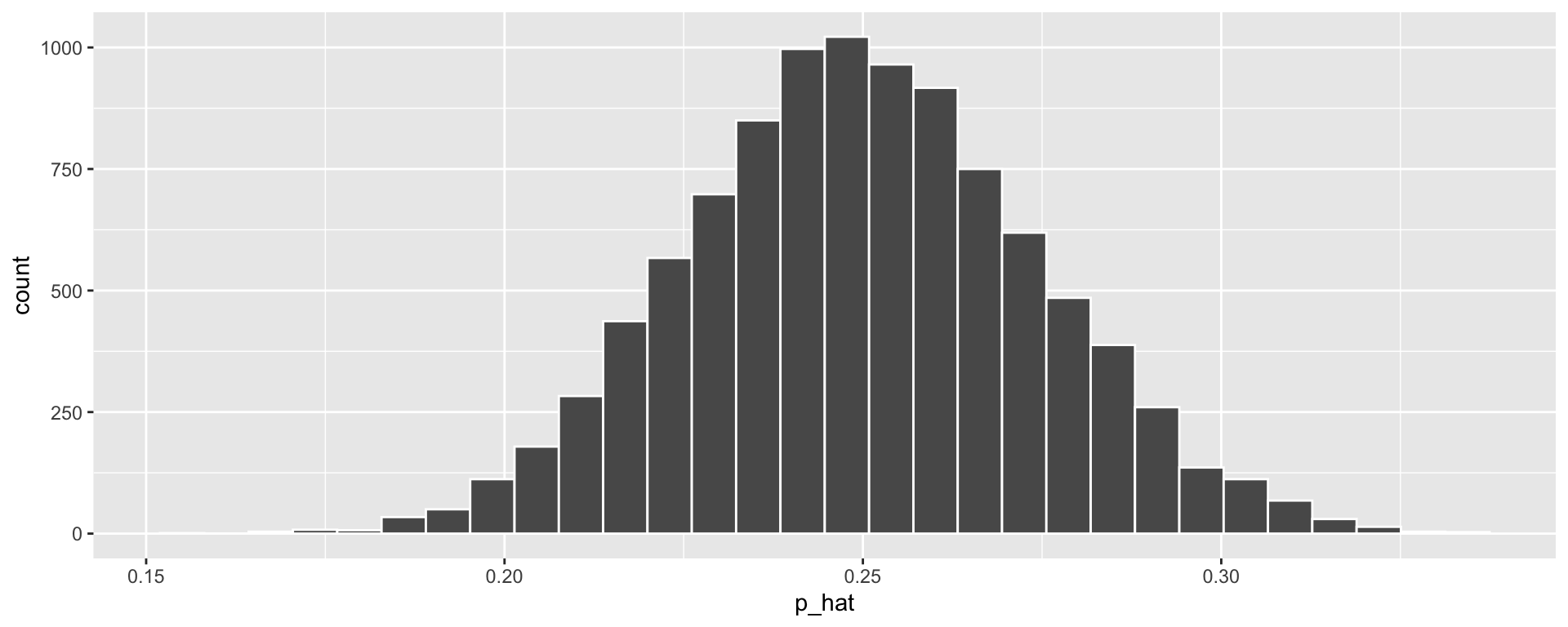


Hypothesis Testing Steps: ESP

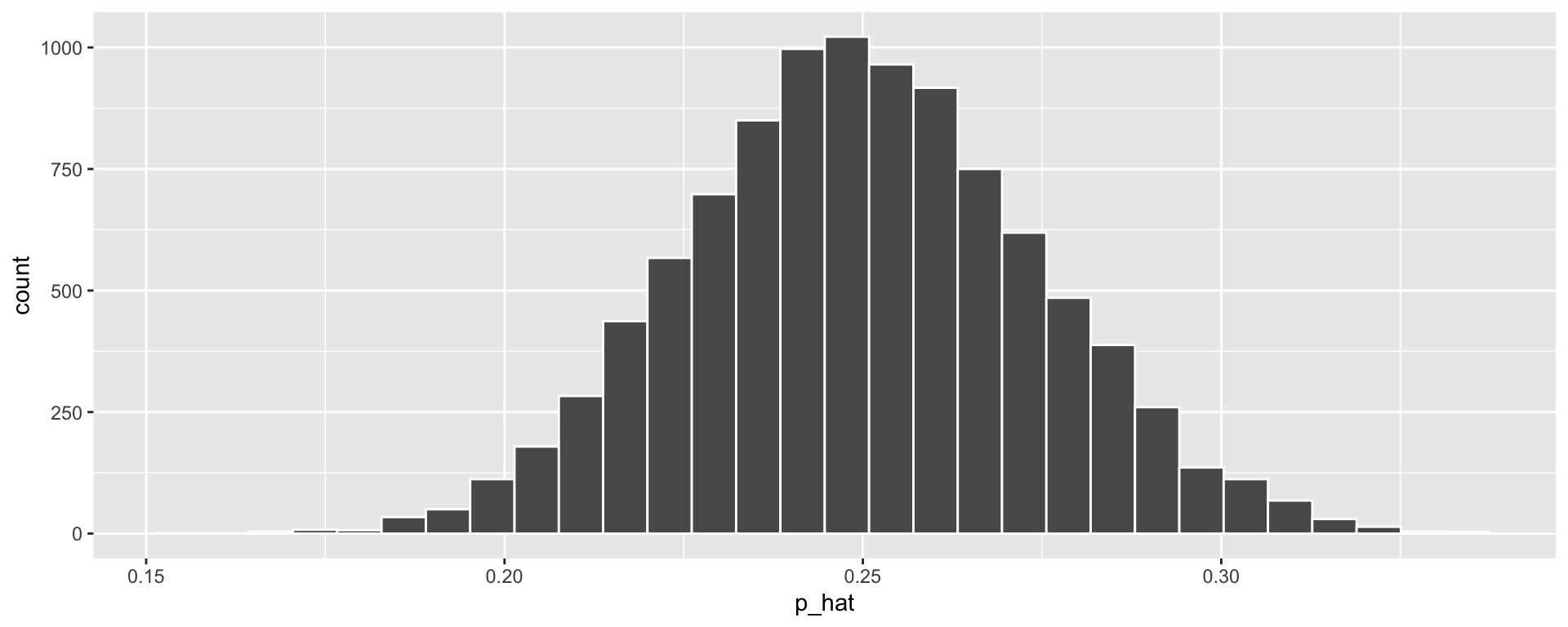


Hypothesis Testing Steps: ESP

- What is our relevant “Test Statistic”?

- \(\widehat{p} = 106 / 329 \approx 0.32\)

- How can we calculate the “P-value”?

- Can use probability theory to find \(P(\text{Correct guesses} \geq 106) = \ldots ?\)

- Or our simulated null distribution

- How would we use the P-value to make a conclusion on the research question?

- If the p-value is “small enough”, we reject the null hypothesis of random guessing.
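As a rough sketch of the two routes above, the p-value can be approximated from a simulated null distribution or computed exactly from the binomial distribution. The variable names are illustrative, and `rbinom` is used here as a base R stand-in for the `rep_sample_n` pipeline; the simulated value will vary with the seed and the number of repetitions.

```r
# Sketch: p-value for the ESP example, assuming H0: p = 0.25
set.seed(123)

n_trials  <- 329   # reported trials
n_correct <- 106   # observed correct identifications
p_null    <- 0.25  # chance of a correct guess under H0

# Route 1: simulate the null distribution of the number of correct guesses
null_counts <- rbinom(10000, size = n_trials, prob = p_null)
# Proportion of simulated results at least as extreme as what was observed
p_value_sim <- mean(null_counts >= n_correct)

# Route 2: exact binomial tail probability, P(X >= 106) under H0
p_value_exact <- pbinom(n_correct - 1, size = n_trials, prob = p_null,
                        lower.tail = FALSE)

print(c(simulated = p_value_sim, exact = p_value_exact))
```

Comparing counts rather than proportions avoids floating-point ties at the observed value.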

Hypothesis Testing: ESP

We got a pretty small p-value (\(0.0013\)). Hooray! It is reasonable to reject the null hypothesis.

But really, do we believe that ESP is real?

- Next lecture, we’ll talk more about hypothesis testing errors.

Side Note: Reproducibility and Replicability

Two important words in data analysis:

- Reproducibility

- Replicability

Reproducibility: If I give you the raw data and my write-up, you will get to the exact same final numbers that I did.

- By using Quarto documents, we are learning a reproducible workflow.

Replicability: If you follow my study design but collect new data (i.e. repeat my study on new subjects), you will come to the same conclusions that I did.

- Sadly, replication studies of Bem and Honorton’s ESP trials largely failed to find evidence of ESP.
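One small ingredient of a reproducible workflow is fixing the random seed, as the simulation code in these slides does with `set.seed`. The sketch below is illustrative only; the helper name `simulate_p_hat` is hypothetical.

```r
# Sketch: fixing the seed makes a simulation reproducible
simulate_p_hat <- function(seed) {
  set.seed(seed)
  # Proportion of 1's in 329 random guesses with a 25% success chance
  mean(sample(c(0, 0, 0, 1), size = 329, replace = TRUE))
}

first_run  <- simulate_p_hat(seed = 123)
second_run <- simulate_p_hat(seed = 123)

# Same seed and same code give the same number
print(identical(first_run, second_run))
```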

Switching gears: 1 vs 2 sided tests

Say we’re flipping a coin 20 times, and (for some reason) we are worried that it’s not a “fair” coin

- i.e., The probability of heads is not 0.5

Let \(p=\) probability of getting a heads with this coin

Q: What is the null hypothesis?

Possible alternative hypotheses:

- \(H_a: p < 0.5\)

- \(H_a: p > 0.5\)

- \(H_a: p \neq 0.5\)

We’ve been thinking about the first two cases (1 sided tests). Let’s see what changes if \(H_a: p \neq 0.5\) (2 sided test).

Recall the hypothesis testing steps again:

Present research question and identify hypotheses (\(H_0\) and \(H_a\))

- \(H_a: p < 0.5\), or \(p > 0.5\), or \(p \neq 0.5\)?

- \(H_0: p = 0.5\)

Describe “Null” distribution

- This is the same regardless of which \(H_a\) we use; it depends only on \(H_0\)

Obtain data, calculate relevant “Test Statistic”

Calculate the “P-value”

- P-value = probability of observing the Test Statistic or something more extreme, assuming the Null Hypothesis is true

Use the P-value to make a conclusion on the research question
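Because the null distribution depends only on \(H_0: p = 0.5\), it can be written down once for the coin example. A minimal sketch, assuming the 20 flips are independent so that the number of heads is binomial; the object names are illustrative.

```r
# Sketch: exact null distribution of p_hat for 20 flips, assuming H0: p = 0.5
n_flips <- 20
p_null  <- 0.5

heads      <- 0:n_flips
null_probs <- dbinom(heads, size = n_flips, prob = p_null)

# One row per possible value of p_hat, with its probability under H0
null_dist <- data.frame(p_hat = heads / n_flips, probability = null_probs)
print(null_dist)
```

The same table is used whichever \(H_a\) is chosen; only the tail(s) summed for the p-value change.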

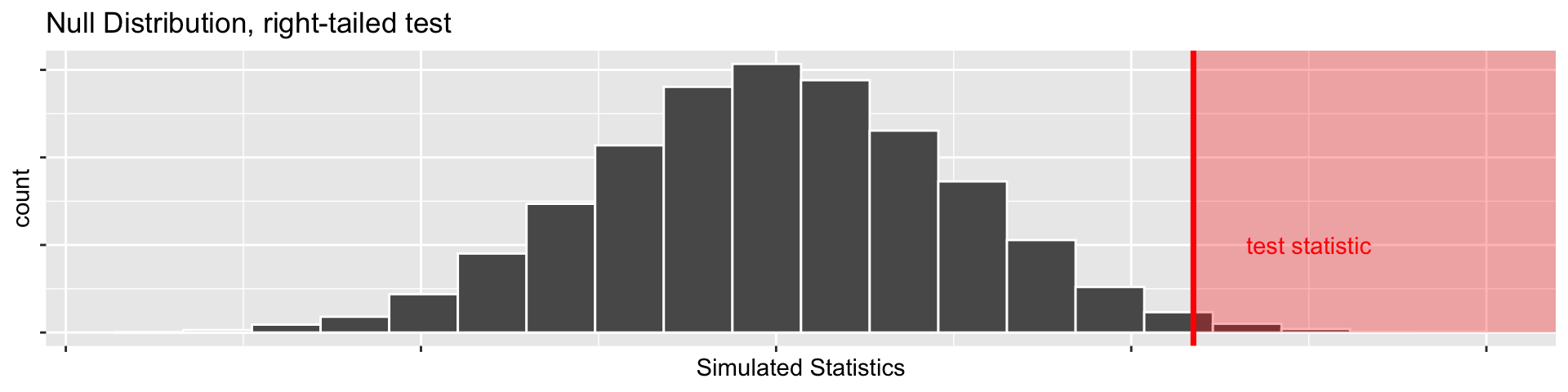

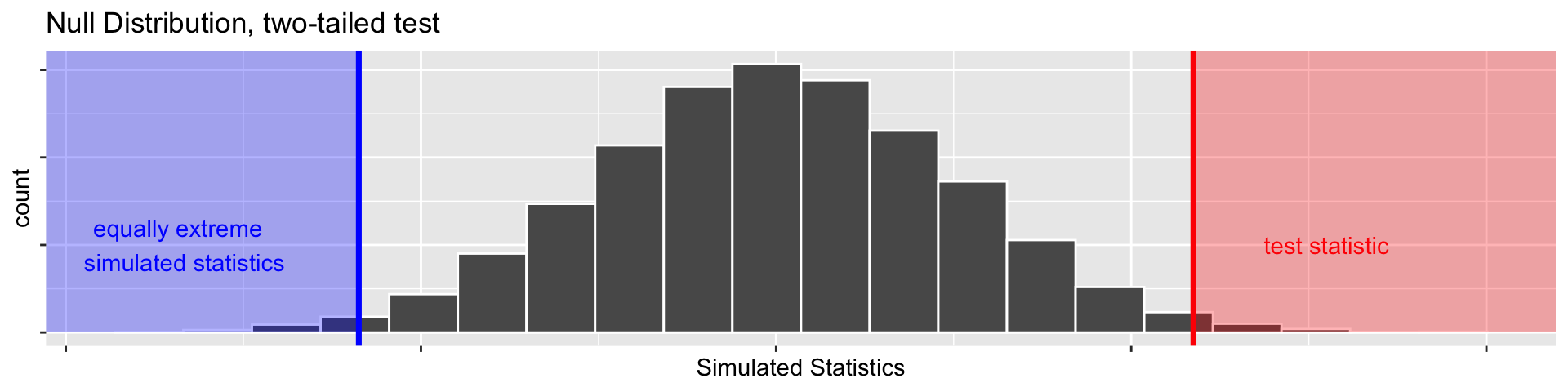

1 vs 2 sided tests: what does “more extreme” mean?

- Suppose our test statistic from 20 coin flips is \(\widehat{p}= 0.85\).

- If we have \(H_a: p > 0.5\) (right-tailed test)

- We’d want \(\mathrm{P}(\widehat{p} \geq 0.85)\) under the null hypothesis as our P-value

1 vs 2 sided tests: what does “more extreme” mean?

- Suppose our test statistic from 20 coin flips is \(\widehat{p}= 0.85\).

- Suppose we have \(H_a: p \neq 0.5\)

- \(\widehat{p}= 0.85\) is 0.35 more than \(p = 0.5\), so we want to include \(\mathrm{P}(\widehat{p} \geq 0.85)\)

- \(\widehat{p}= 0.15\) is 0.35 less than \(p = 0.5\), so we’d also want \(\mathrm{P}(\widehat{p} \leq 0.15)\) (this would be more extreme in the other direction)

- So our P-value would be the sum of those two things (both tails)!
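The two tails can be added up directly. This is a sketch using exact binomial probabilities, assuming 20 independent flips so that \(\widehat{p} = 0.85\) corresponds to 17 heads; values read from a simulated null distribution may differ.

```r
# Sketch: two-sided p-value as the sum of both tails, assuming H0: p = 0.5
n_flips   <- 20
heads_obs <- 17    # p_hat = 17 / 20 = 0.85
p_null    <- 0.5

# Upper tail: P(p_hat >= 0.85), i.e. P(X >= 17)
upper_tail <- pbinom(heads_obs - 1, size = n_flips, prob = p_null,
                     lower.tail = FALSE)

# Lower tail: P(p_hat <= 0.15), i.e. P(X <= 3), equally far below 0.5
lower_tail <- pbinom(n_flips - heads_obs, size = n_flips, prob = p_null)

p_two_sided <- upper_tail + lower_tail
print(c(upper = upper_tail, lower = lower_tail, two_sided = p_two_sided))
```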

1 vs 2 sided tests: what does “more extreme” mean?

Note the effect that our choice of \(H_a\) had on our p-values!

- Our test statistic was \(\widehat{p}= 0.85\)

- \(H_a: p < 0.5\): P-value = 0.9649

- \(H_a: p > 0.5\): P-value = 0.0351

- \(H_a: p \neq 0.5\): P-value = 0.0732
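To see how the choice of \(H_a\) changes which simulated results count as "more extreme", here is a sketch that reuses one simulated null distribution for all three alternatives. It assumes 20 independent flips with 17 observed heads; the exact numbers depend on the simulation and need not match the values quoted above.

```r
# Sketch: one simulated null distribution, three possible alternatives
set.seed(141)

n_flips   <- 20
heads_obs <- 17    # p_hat = 0.85
p_null    <- 0.5

# Number of heads in 20 fair flips, repeated many times
null_heads <- rbinom(10000, size = n_flips, prob = p_null)
expected   <- n_flips * p_null   # 10 heads expected under H0

p_left  <- mean(null_heads <= heads_obs)   # H_a: p < 0.5
p_right <- mean(null_heads >= heads_obs)   # H_a: p > 0.5
# H_a: p != 0.5, at least as far from 10 heads in either direction
p_two   <- mean(abs(null_heads - expected) >= abs(heads_obs - expected))

print(c(left = p_left, right = p_right, two_sided = p_two))
```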

Another Example

Can you tell if a mouse is in pain by looking at its facial expression? A recent study created a “mouse grimace scale” and tested to see if there was a positive correlation between scores on that scale and the degree of pain (based on injections of a weak and mildly painful solution). The study’s authors believe that if the scale applies to other mammals as well, it could help veterinarians test how well painkillers and other medications work in animals.

Q: Write out \(H_0\) and \(H_a\) qualitatively (in words).

Q: Write out \(H_0\) and \(H_a\) in terms of population parameters. (Hint: recall that we use \(r\) for correlation)

Q: Describe how we’d expect the data to behave under \(H_0\).

Another Example!

Can a simple smile have an effect on punishment assigned following an infraction? In a 1995 study, Hecht and LaFrance examined the effect of a smile on the leniency of disciplinary action for wrongdoers. Participants in the experiment took on the role of members of a college disciplinary panel judging students accused of cheating. For each suspect, along with a description of the offense, a picture was provided with either a smile or neutral facial expression. A leniency score was calculated based on the disciplinary decisions made by the participants.

Q: Write out \(H_0\) and \(H_a\) qualitatively (in words).

Q: Write out \(H_0\) and \(H_a\) in terms of population parameters (Hint, we can write the population mean leniency score for smilers as \(\mu_S\)).

Q: Describe how we’d expect the data to behave under \(H_0\).

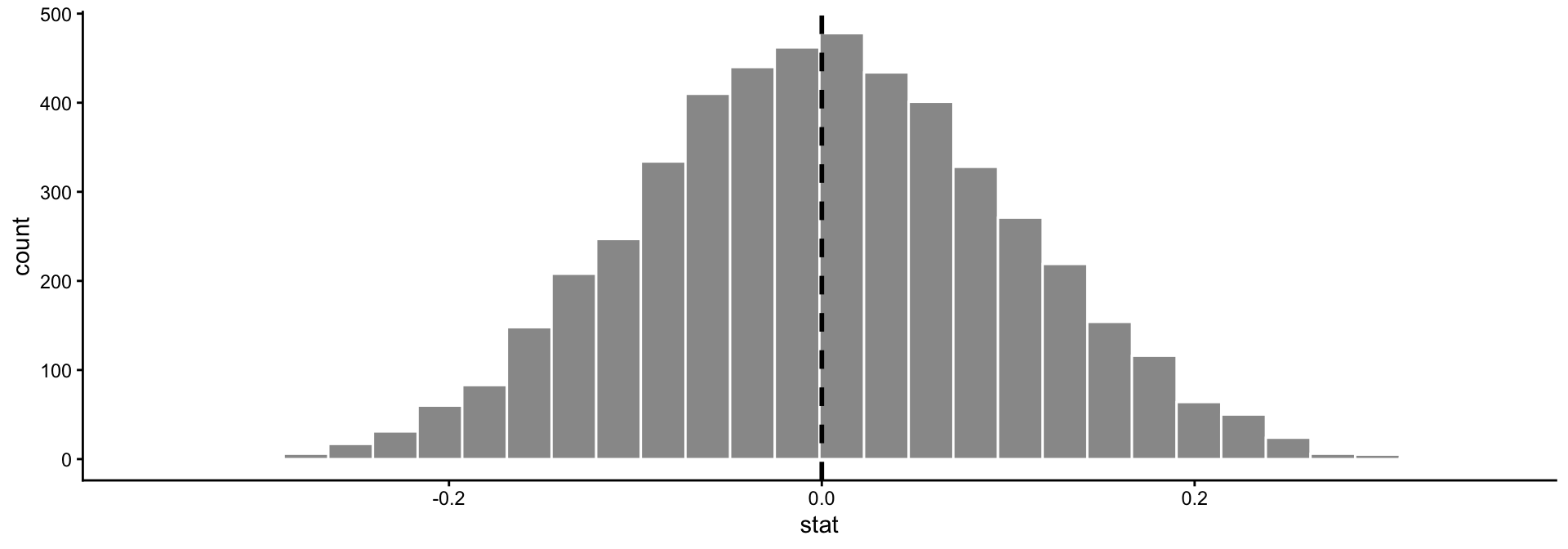

Null distributions: how to generate them for these other examples?

- Mouse pain and grimacing :(

- \(H_0\): The correlation between grimacing and the level of pain is 0

- Under the null, grimacing and level of pain are totally unrelated

- To approximate the null distribution, we could:

1. Randomly shuffle the `grimace` column of our data

2. Calculate the correlation between `grimace` and `pain` in the shuffled data

3. Repeat steps 1-2 many times.

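```r
library(tidyverse)  # select(), add_column(), summarize(), ggplot()
library(infer)      # assumed source of rep_sample_n()

set.seed(111)

# Simulated stand-in data with no built-in relationship between the columns
df <- data.frame(pain = runif(100, 0, 10),
                 grimace = rnorm(100, 10, 2))

# Shuffle grimace (sample all 100 rows without replacement) 5,000 times,
# re-attach the unshuffled pain column, and compute the correlation per shuffle
null_stats <- df %>% select(grimace) %>%
  rep_sample_n(size = 100, replace = FALSE, reps = 5000) %>%
  add_column(pain = rep(df$pain, times = 5000)) %>%
  group_by(replicate) %>%
  summarize(stat = cor(grimace, pain))

# Histogram of shuffled correlations with a dashed reference line at 0
ggplot(null_stats, aes(x = stat)) +
  geom_histogram(color = "white", fill = "gray60") +
  geom_vline(aes(xintercept = 0), lty = 2, col = "black", lwd = 1) +
  theme_classic()
```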
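The same shuffling idea can be sketched in base R, with the p-value step added. This is an illustrative alternative rather than the course's pipeline: the data are simulated placeholders (not the study's measurements), and the names `mice`, `r_obs`, and `null_r` are hypothetical.

```r
# Sketch: permutation null distribution for a correlation in base R.
# The data are simulated placeholders, not the study's measurements.
set.seed(111)
mice <- data.frame(pain    = runif(100, 0, 10),
                   grimace = rnorm(100, 10, 2))

# Observed test statistic: sample correlation in the unshuffled data
r_obs <- cor(mice$grimace, mice$pain)

# Shuffle grimace, recompute the correlation, repeat many times
null_r <- replicate(5000, cor(sample(mice$grimace), mice$pain))

# Right-tailed p-value for H_a: positive correlation
p_value <- mean(null_r >= r_obs)
print(p_value)
```

Calling `sample()` on a vector with no other arguments returns a random permutation of it, which is the "shuffle" in step 1.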

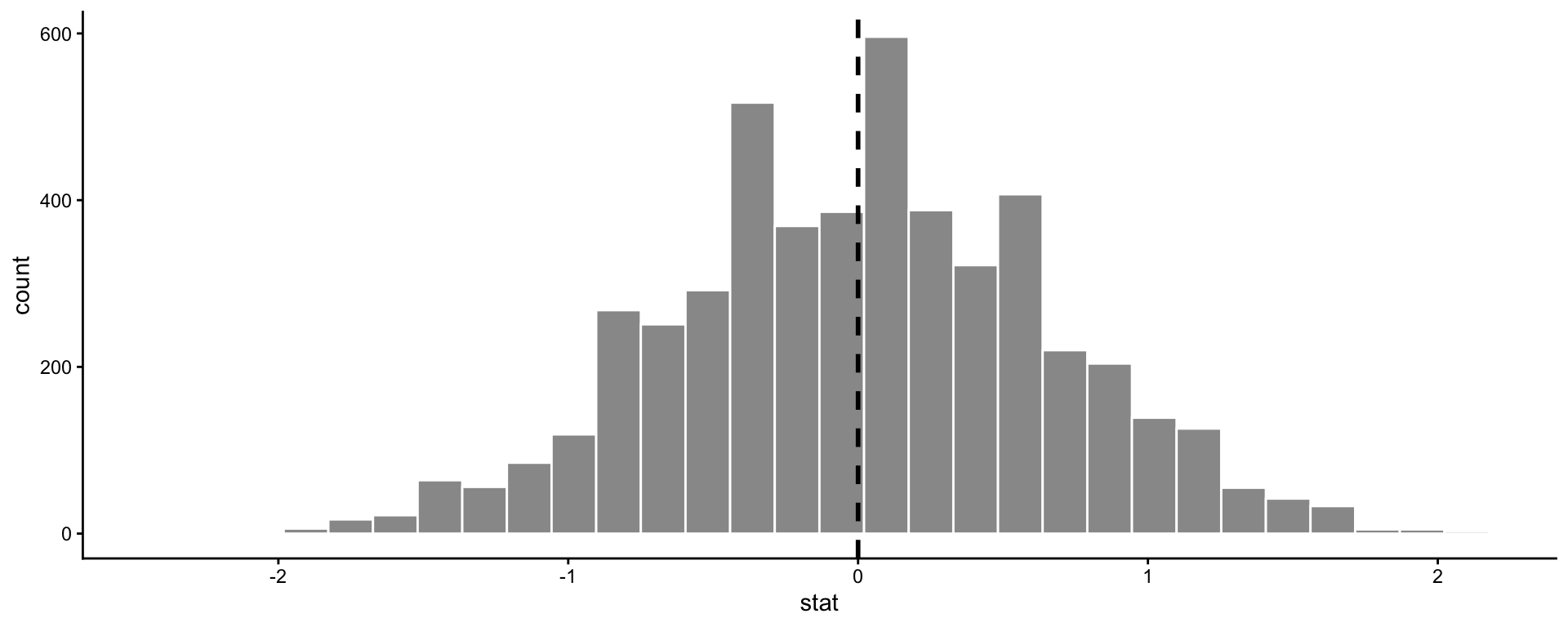

Null distributions: how to generate them for these other examples?

- Discipline for Smilers vs Non-Smilers

- \(H_0\): The difference in average leniency scores between the smiling and non-smiling groups is zero; there is no relationship between the leniency score and the treatment group.

- To approximate the null distribution, we could:

1. Randomly shuffle the `treatment` column of our data

2. Calculate the difference in means between the leniency scores in each group

3. Repeat steps 1-2 many times.

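```r
library(tidyverse)  # select(), add_column(), pivot_wider(), ggplot()
library(infer)      # assumed source of rep_sample_n()

set.seed(111)

# Simulated stand-in data: 30 "smile" and 30 "neutral" cases with random scores
df <- data.frame(treatment = rep(c("smile", "neutral"), times = 30),
                 score = round(runif(60, 0, 10)))

# Shuffle the treatment labels 5,000 times, re-attach the unshuffled scores,
# and compute the difference in group means (smile minus neutral) per shuffle
null_stats <- df %>% select(treatment) %>%
  rep_sample_n(size = 60, replace = FALSE, reps = 5000) %>%
  add_column(score = rep(df$score, times = 5000)) %>%
  group_by(replicate, treatment) %>%
  summarize(ybar = mean(score)) %>%
  pivot_wider(names_from = treatment, values_from = ybar) %>%
  mutate(stat = smile - neutral)

# Histogram of shuffled differences with a dashed reference line at 0
ggplot(null_stats, aes(x = stat)) +
  geom_histogram(color = "white", fill = "gray60") +
  geom_vline(aes(xintercept = 0), lty = 2, col = "black", lwd = 1) +
  theme_classic()
```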
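A base R sketch of the same label-shuffling procedure, with a p-value step added. The data are simulated placeholders (not the study's measurements), the names `panel` and `diff_in_means` are hypothetical, and a two-sided p-value is assumed here because the research question asks only whether a smile has an effect.

```r
# Sketch: permutation null distribution for a difference in means in base R.
# The data are simulated placeholders, not the study's measurements.
set.seed(111)
panel <- data.frame(treatment = rep(c("smile", "neutral"), times = 30),
                    score     = round(runif(60, 0, 10)))

# Difference in mean score (smile minus neutral) for a given labelling
diff_in_means <- function(score, treatment) {
  mean(score[treatment == "smile"]) - mean(score[treatment == "neutral"])
}

stat_obs <- diff_in_means(panel$score, panel$treatment)

# Shuffle the treatment labels, recompute the statistic, repeat many times
null_diffs <- replicate(5000,
                        diff_in_means(panel$score, sample(panel$treatment)))

# Two-sided p-value: shuffled differences at least as far from 0 as observed
p_value <- mean(abs(null_diffs) >= abs(stat_obs))
print(p_value)
```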

Next time

- Decisions in hypothesis testing

- Types of hypothesis testing errors

- Power