Time n

1 1 12

2 2 12

Linear Models IV: Multiple Regression

Megan Ayers

Math 141 | Spring 2026

Monday, Week 5

Announcements

- Week 4 assessments

- Office hours changes

Goals for Today

- Handling categorical and quantitative explanatory variables at the same time.

- Compare parallel slopes and interaction models for multiple regression

- Transformations

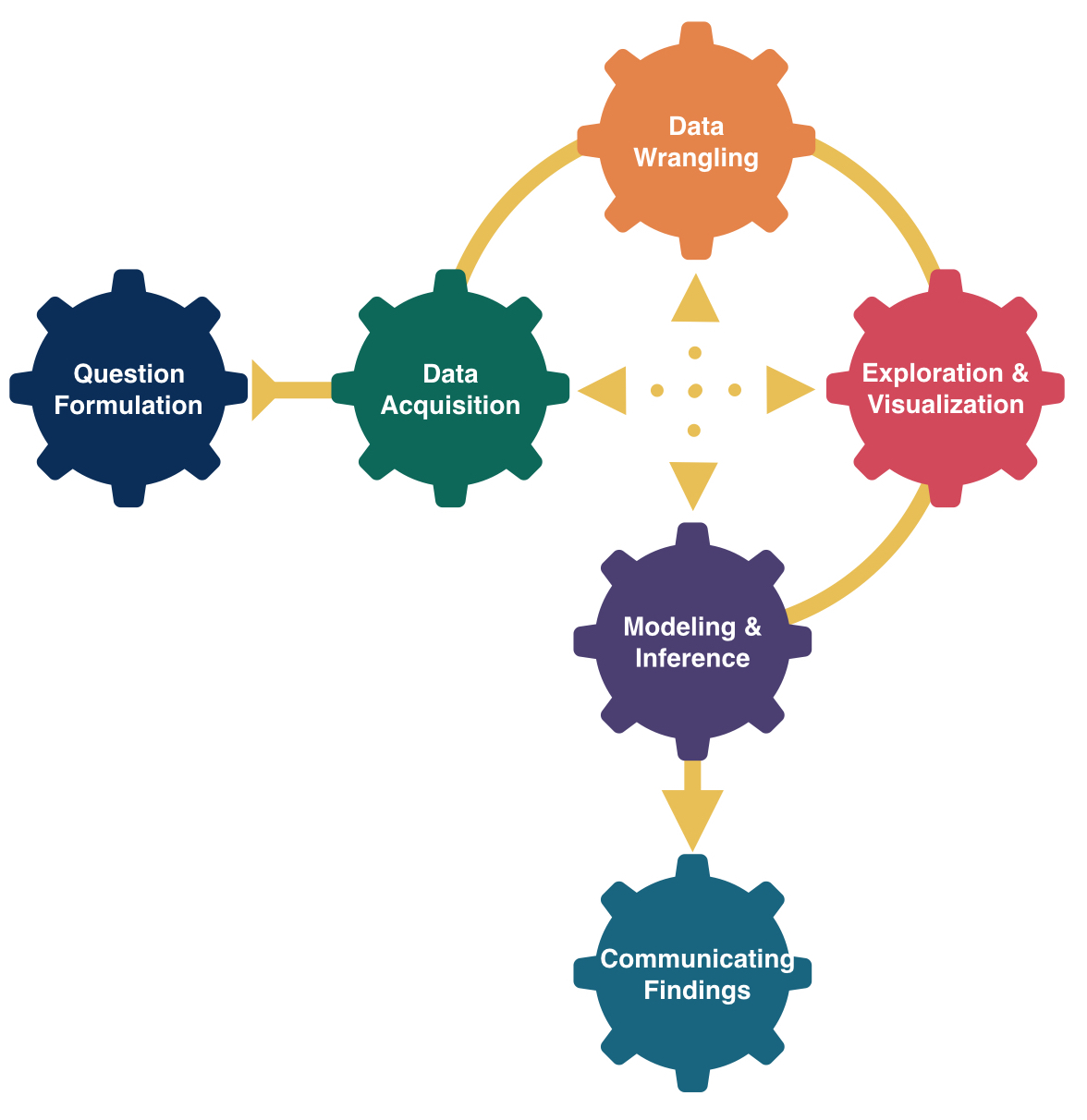

Multiple Linear Regression

Form of the Model:

\[ \begin{align} y &= \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_p x_p + \epsilon \end{align} \]

How does extending to more predictors change our process?

- What doesn’t change:

- Still use Method of Least Squares to estimate coefficients

- Still use

lm()to fit the model andpredict()for prediction

- What does change:

- Meaning of the coefficients are more complicated and depend on other variables in the model

- Need to decide which variables to include and how (linear term, squared term…)

Multiple Linear Regression

We are going to see a few examples of multiple linear regression this week.

We will need to return to modeling later in the course once we have learned about statistical inference (i.e., confidence intervals and p-values).

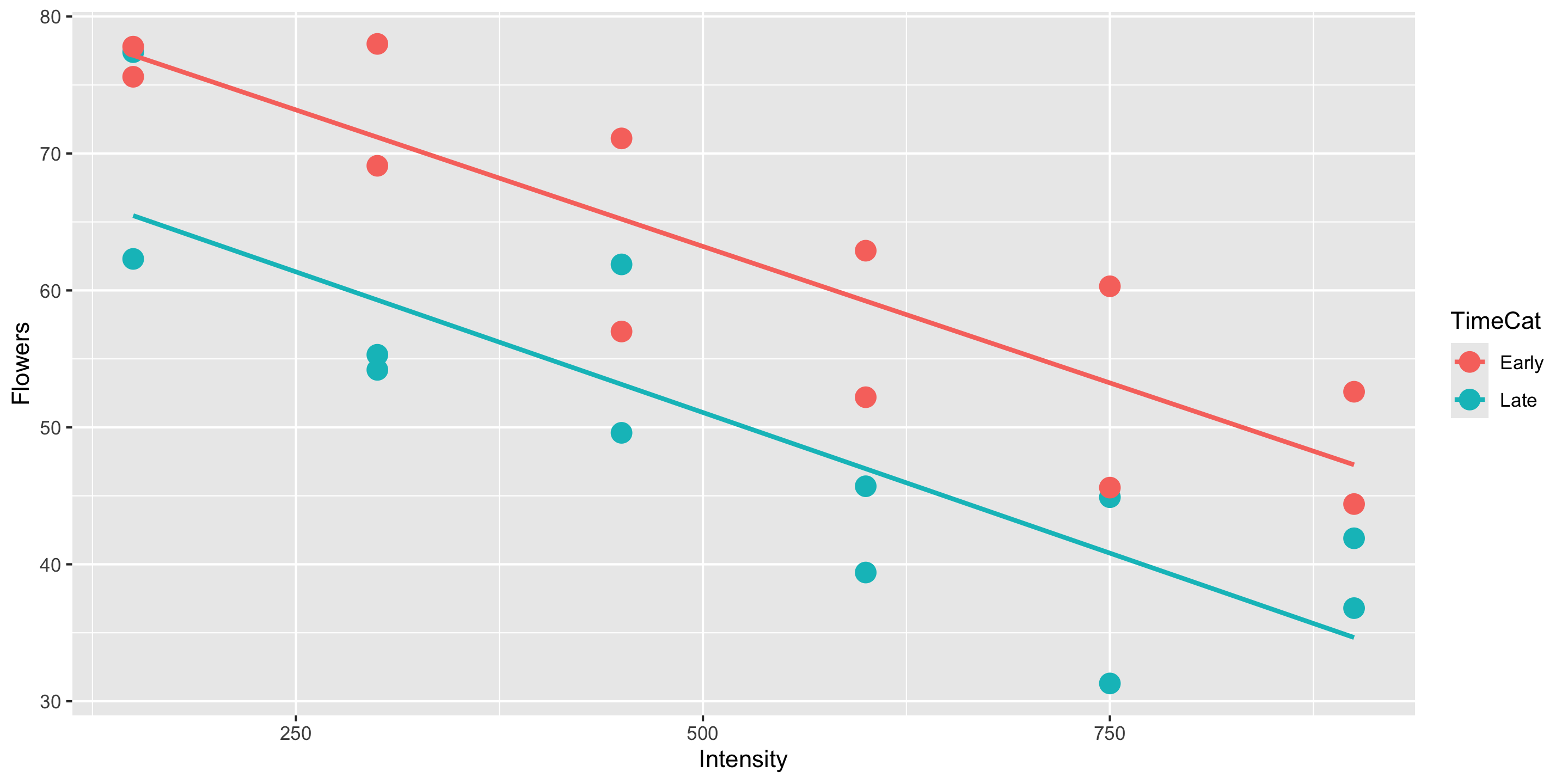

Example

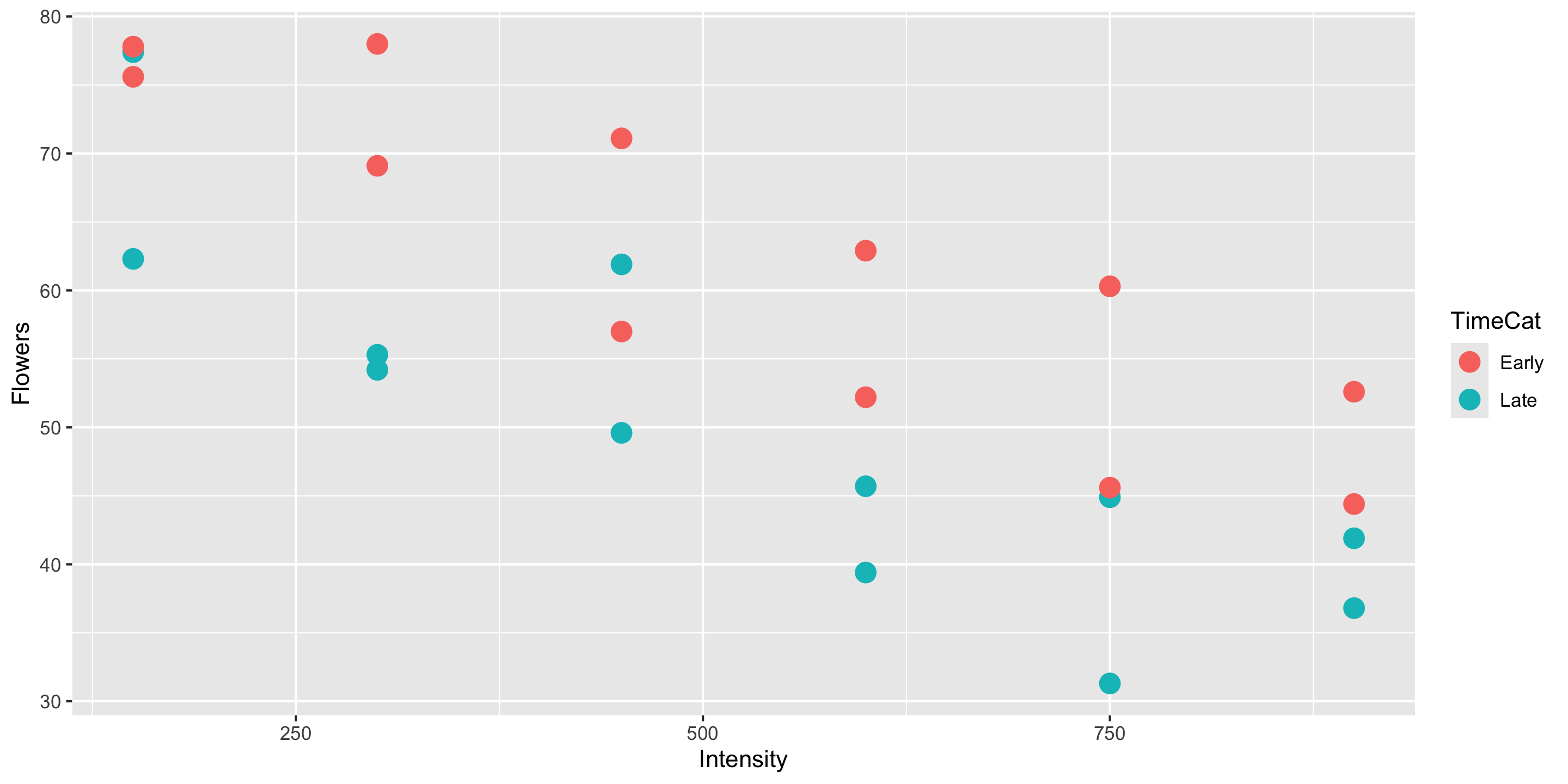

Meadowfoam is a plant that grows in the Pacific Northwest and is harvested for its seed oil. In a randomized experiment, researchers at Oregon State University looked at how two light-related factors influenced the number of flowers per meadowfoam plant, the primary measure of productivity for this plant. The two light measures were light intensity (in mmol/ \(m^2\) /sec) and the timing of onset of the light (early or late in terms of photo periodic floral induction).

Response variable?

Explanatory variables?

Model Form?

Data Loading and Wrangling

Visualizing the Data

Q: How does this plot help us intuit what \(\widehat{\beta}_0\), \(\widehat{\beta}_1\), and \(\widehat{\beta}_2\) might be?

Why don’t I have to include

data =andmapping =in myggplot()layer?

Building the Linear Regression Model

Full model form:

# A tibble: 3 × 7

term estimate std_error statistic p_value lower_ci upper_ci

<chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 intercept 83.5 3.27 25.5 0 76.7 90.3

2 Intensity -0.04 0.005 -7.89 0 -0.051 -0.03

3 TimeCat: Late -12.2 2.63 -4.62 0 -17.6 -6.69- Estimated regression line for TimeCat = “Late”

- Estimated regression line for TimeCat = “Early”:

Appropriateness of Model Form

Is the assumption of equal slopes reasonable here?

Prediction

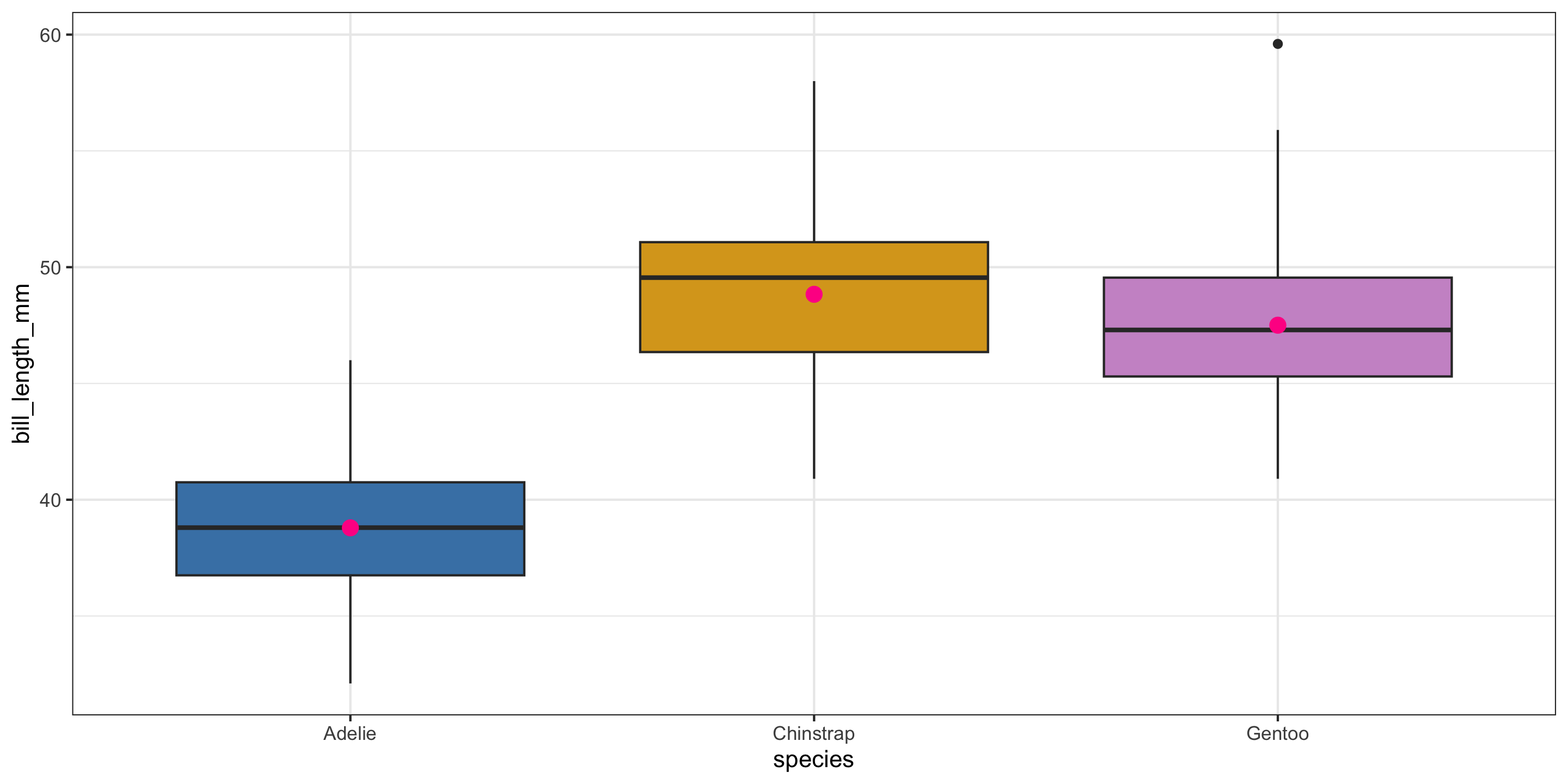

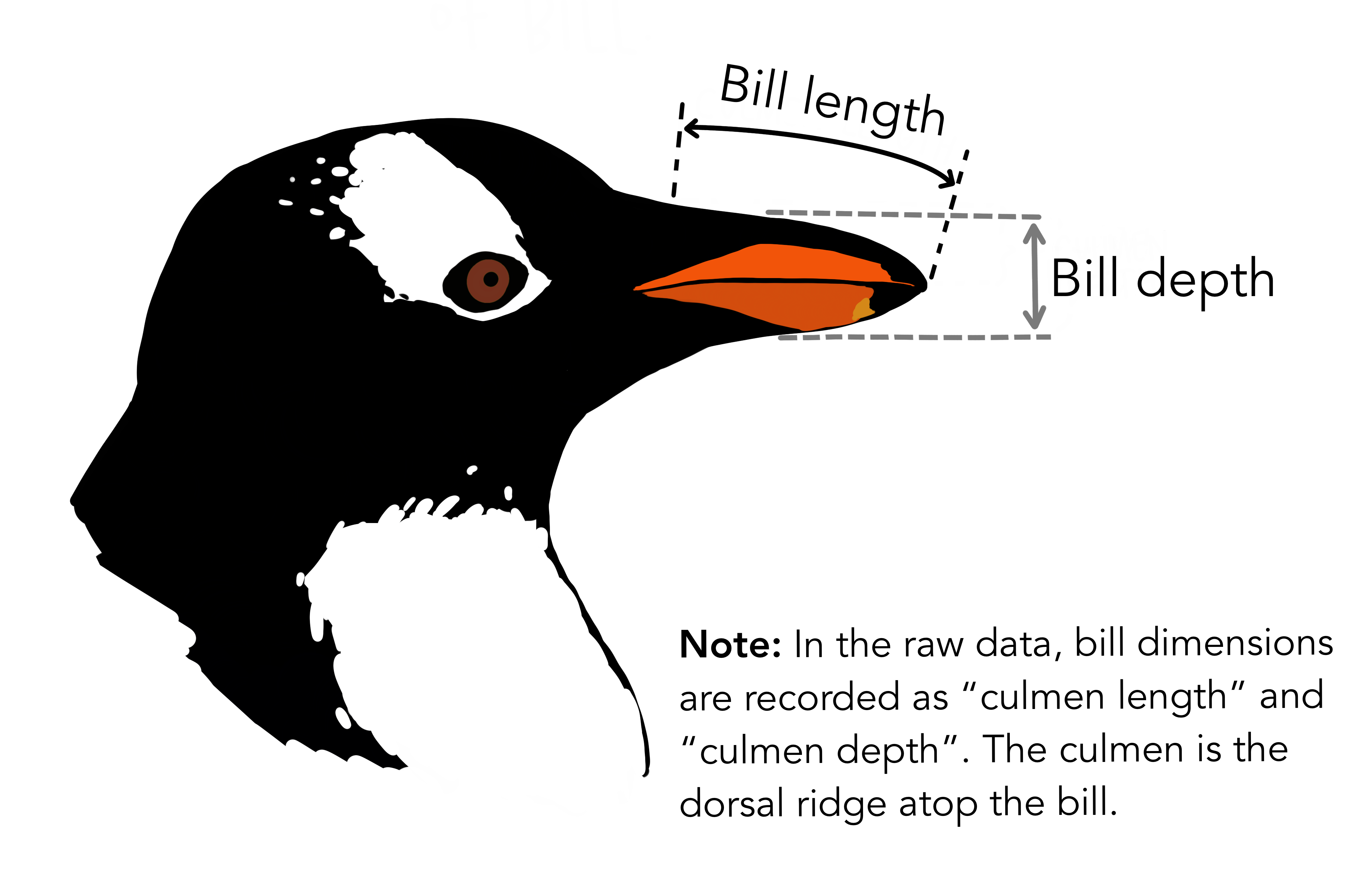

Returning to the Palmer Penguins

Last time:

We predicted a penguin’s bill length based on their species.

- Pink dots represent the mean value in each group.

- For the single categorical variable model, those pink dots are the predicted values for each group.

This time:

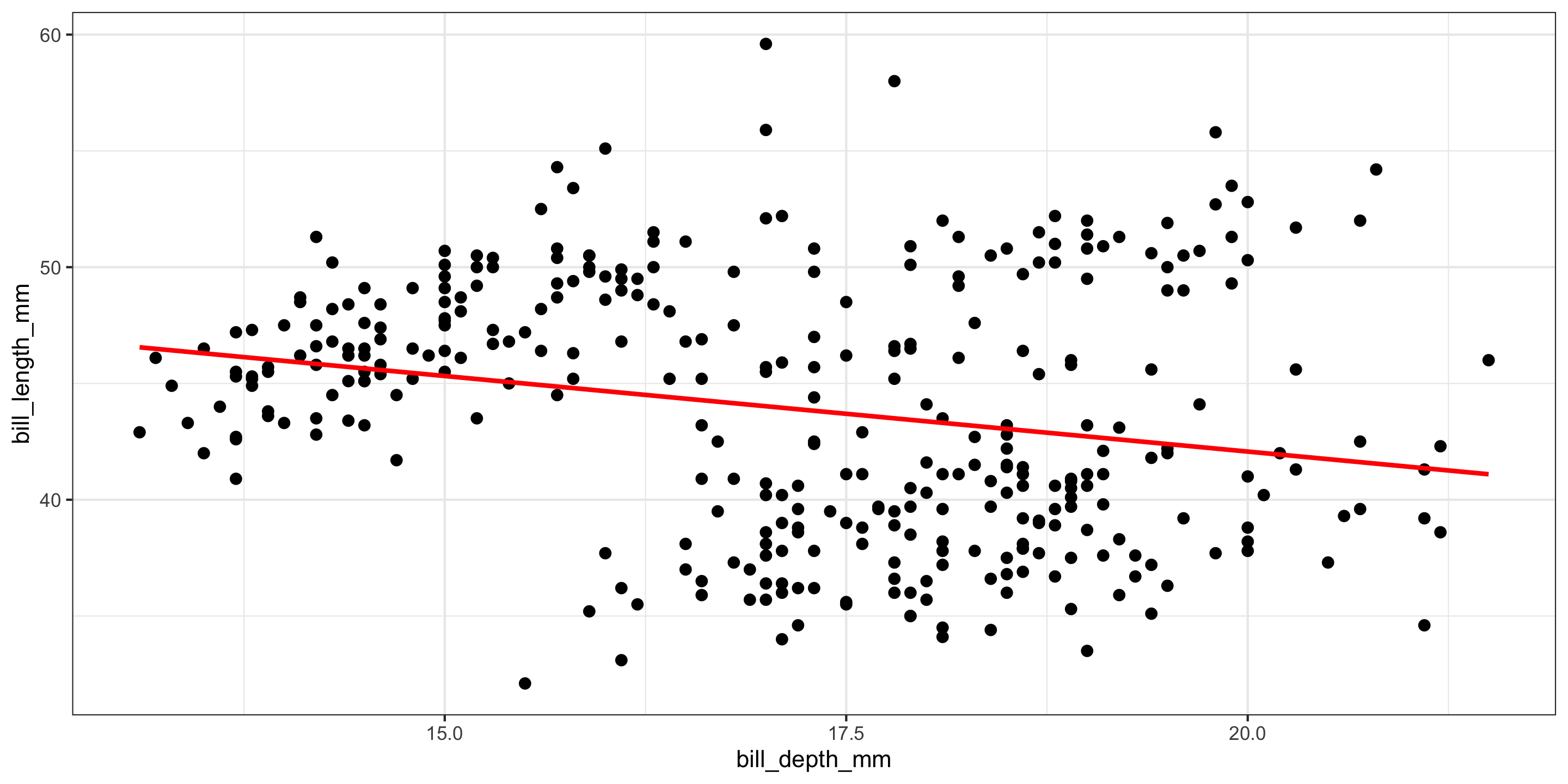

We’ll incorporate bill depth for prediction!

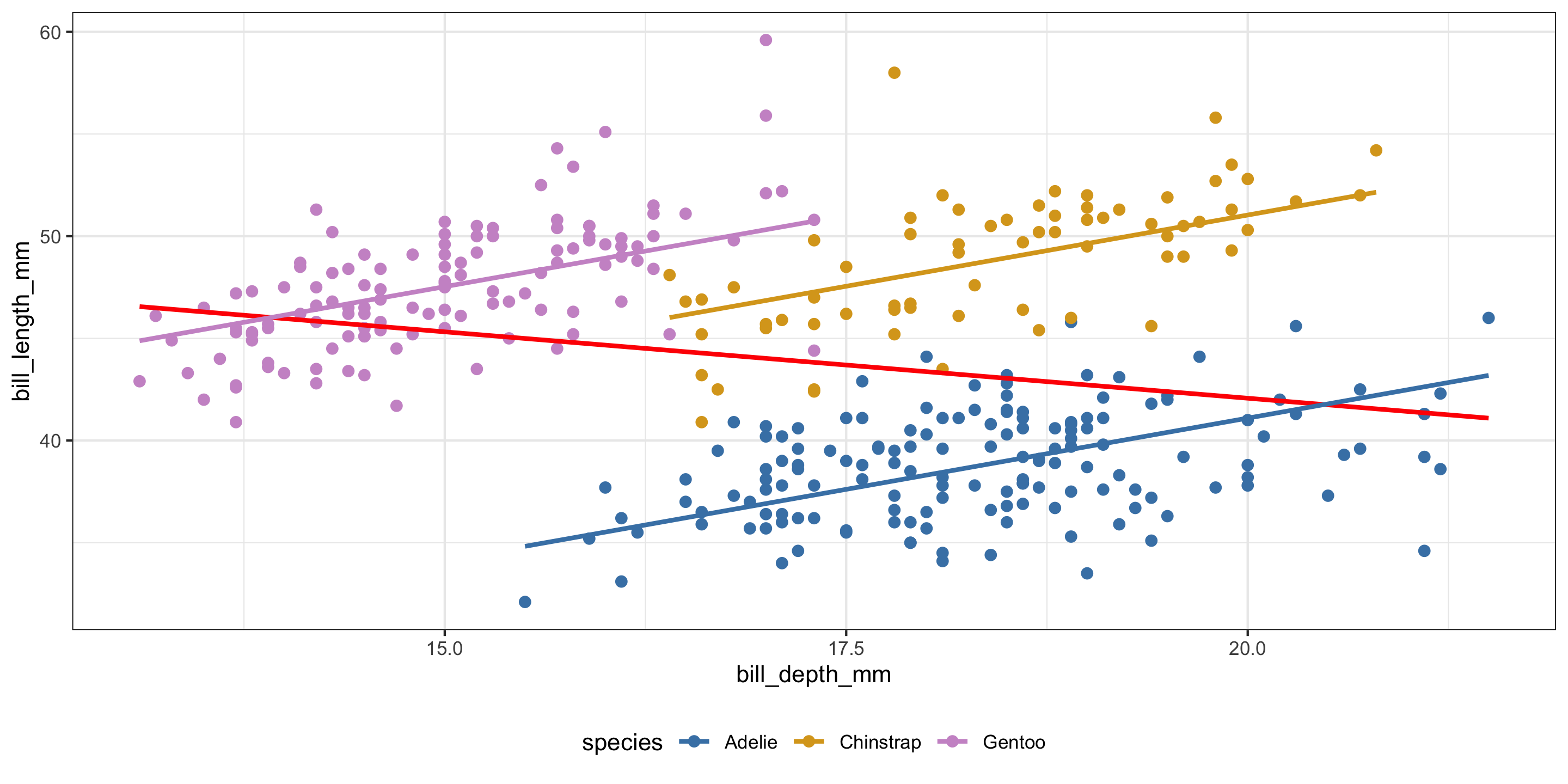

- A moderate negative relationship between bill length and bill depth!

- Does this make sense?

What if we include both explanatory variables?

ggplot(penguins, aes(x = bill_depth_mm,

y = bill_length_mm,

color = species)) +

geom_point(size = 2) +

geom_smooth(inherit.aes = FALSE,

mapping = aes(x = bill_depth_mm,

y = bill_length_mm),

method = "lm", se = FALSE,

color = "red") +

geom_parallel_slopes(se = FALSE) +

scale_color_manual(values = c("steelblue",

"goldenrod",

"plum3")) +

theme_bw() +

theme(legend.position = "bottom")

- Negative relationships between bill depth and bill length overall.

- Positive relationships between bill depth and bill length when accounting for species!

- What is going on here??

- This is a case of Simpson’s Paradox.

Three candidate models

Explanatory variable: species

# A tibble: 3 × 2

term estimate

<chr> <dbl>

1 intercept 38.8

2 species: Chinstrap 10.0

3 species: Gentoo 8.71Explanatory variables: species and bill_depth_mm (equal slope)

- Coefficient interpretations?

Interpreting the Multiple Regression Equation

# A tibble: 4 × 7

term estimate std_error statistic p_value lower_ci upper_ci

<chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 intercept 13.2 2.25 5.88 0 8.80 17.6

2 bill_depth_mm 1.39 0.122 11.4 0 1.15 1.63

3 species: Chinstrap 9.94 0.368 27.0 0 9.22 10.7

4 species: Gentoo 13.4 0.512 26.2 0 12.4 14.4 \[ \widehat{y}= 13.2 + 1.39 \cdot x_{\textrm{Bill Depth}} + 9.94 \cdot x_{\textrm{Species:Chinstrap}} + 13.4 \cdot x_{\textrm{Species:Gentoo}} \]

- Slope Coefficient on Quantitative Variable: The expected/predicted change in response variable, on average, per unit increase in the explanatory variable, holding all other variables constant.

- Example: We expect a 1.394mm increase in bill length for every 1mm increase in bill depth, on average, after holding species constant.

- Interpretation on factors from Categorical Variables still applies: the expect/predicted change in response variable, on average, when going from the reference level to the factor’s level, holding all other variables constant.

Interpreting the Multiple Regression Equation

# A tibble: 4 × 7

term estimate std_error statistic p_value lower_ci upper_ci

<chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 intercept 13.2 2.25 5.88 0 8.80 17.6

2 bill_depth_mm 1.39 0.122 11.4 0 1.15 1.63

3 species: Chinstrap 9.94 0.368 27.0 0 9.22 10.7

4 species: Gentoo 13.4 0.512 26.2 0 12.4 14.4 \[ \widehat{y}= 13.2 + 1.39 \cdot x_{\textrm{Bill Depth}} + 9.94 \cdot x_{\textrm{Species:Chinstrap}} + 13.4 \cdot x_{\textrm{Species:Gentoo}} \]

- Intercept Coefficient: The expected/predicted value of the response, on average, when all explanatory variables are set to 0.

- What does setting species to 0 mean in this context?

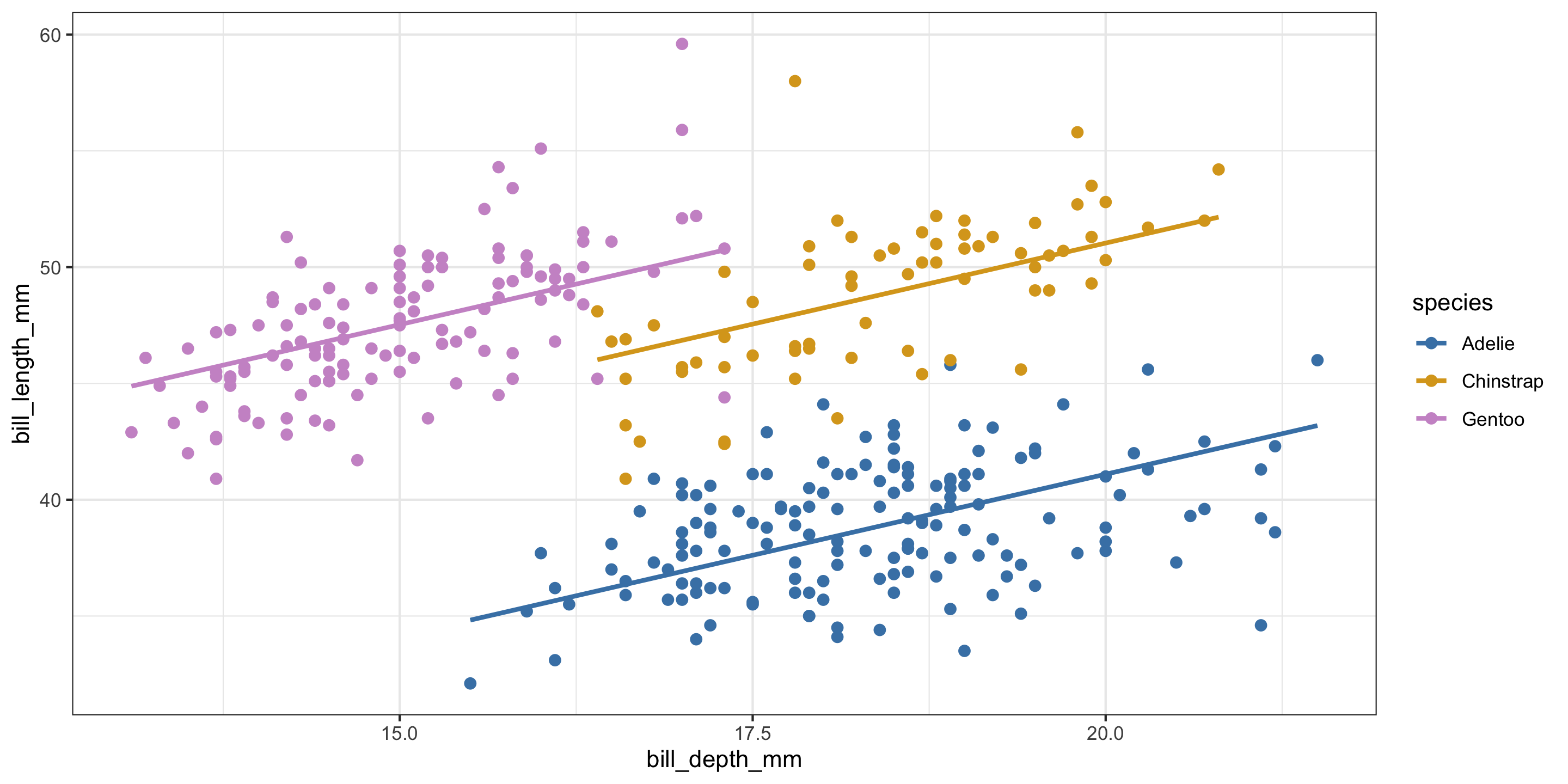

Returning to our MLR model

Are equal slopes a reasonable assumption here?

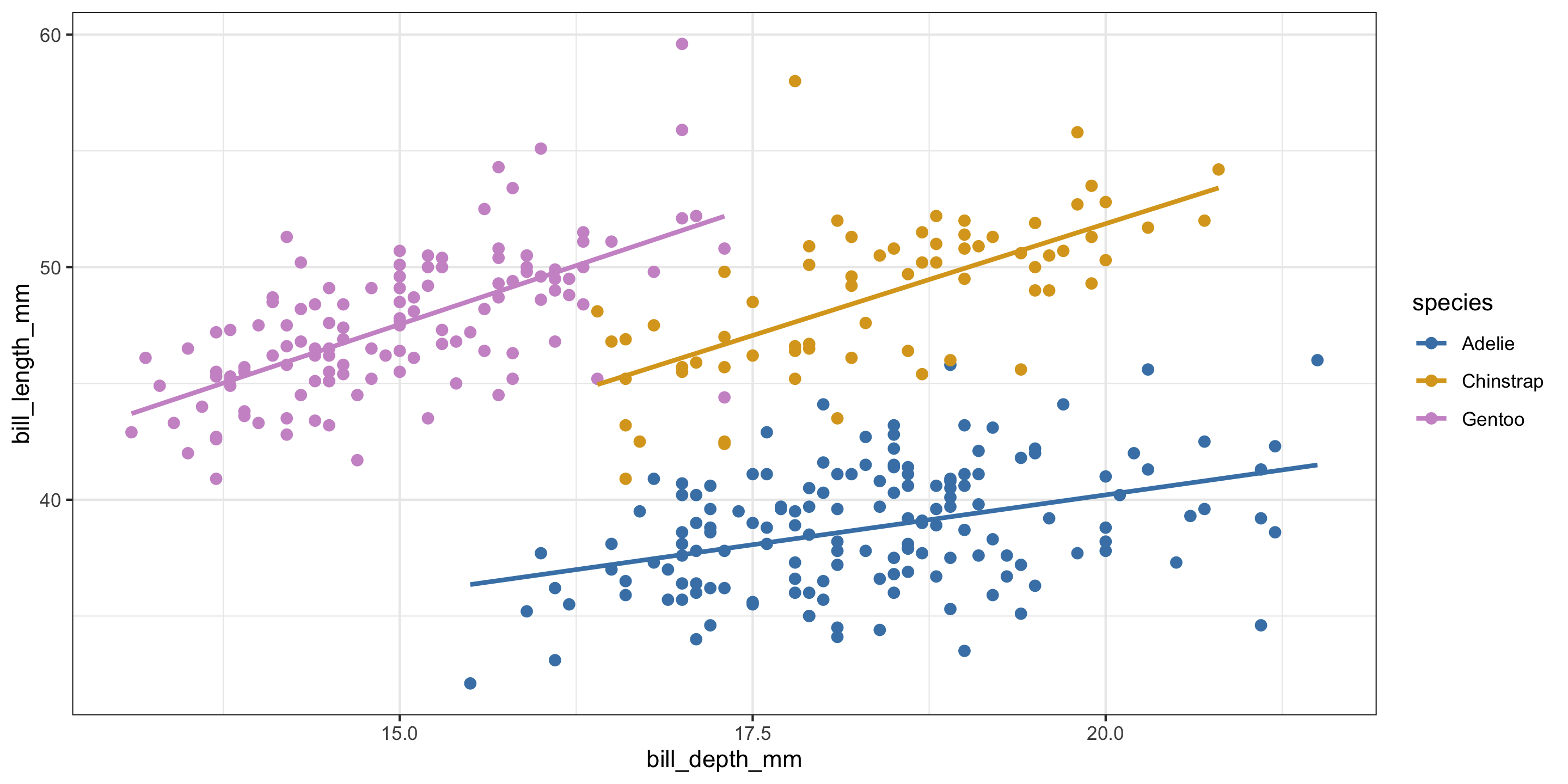

Different Slopes Model

Equal slopes models force the relationship between quantitative predictors and the response variable to be the same for each group in the model.

In contrast, different slopes models allow for different relationships between quantitative predictors and the response variable for each group in the model.

How can we allow our model to have different slopes?

Contrasting model forms

Recall the equal slopes model:

\[ y = \beta_0 + \beta_1 \cdot x_{\textrm{Bill Depth}} + \beta_2 \cdot x_{\textrm{Species:Chinstrap}} + \beta_3 \cdot x_{\textrm{Species:Gentoo}} + \epsilon \]

How can we allow the slopes to vary?

\[ y = \beta_0 + \beta_1 \cdot x_{\textrm{Bill Depth}} + \beta_2 \cdot x_{\textrm{Species:Chinstrap}} + \beta_3 \cdot x_{\textrm{Species:Gentoo}} + \\ \beta_4 \cdot x_{\textrm{Bill Depth}} \cdot x_{\textrm{Species:Chinstrap}} + \beta_5 \cdot x_{\textrm{Bill Depth}} \cdot x_{\textrm{Species:Gentoo}} + \epsilon \]

- Coefficient interpretation?

Different Slopes Model in R

# A tibble: 4 × 7

term estimate std_error statistic p_value lower_ci upper_ci

<chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 intercept 13.2 2.25 5.88 0 8.80 17.6

2 bill_depth_mm 1.39 0.122 11.4 0 1.15 1.63

3 species: Chinstrap 9.94 0.368 27.0 0 9.22 10.7

4 species: Gentoo 13.4 0.512 26.2 0 12.4 14.4 # A tibble: 6 × 7

term estimate std_error statistic p_value lower_ci upper_ci

<chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 intercept 23.1 3.02 7.65 0 17.1 29.0

2 bill_depth_mm 0.857 0.164 5.22 0 0.534 1.18

3 species: Chinstrap -9.64 5.72 -1.69 0.093 -20.9 1.60

4 species: Gentoo -5.84 4.54 -1.29 0.199 -14.8 3.08

5 bill_depth_mm:speciesC… 1.06 0.31 3.44 0.001 0.455 1.68

6 bill_depth_mm:speciesG… 1.16 0.279 4.17 0 0.615 1.71Detour: handling curved relationships

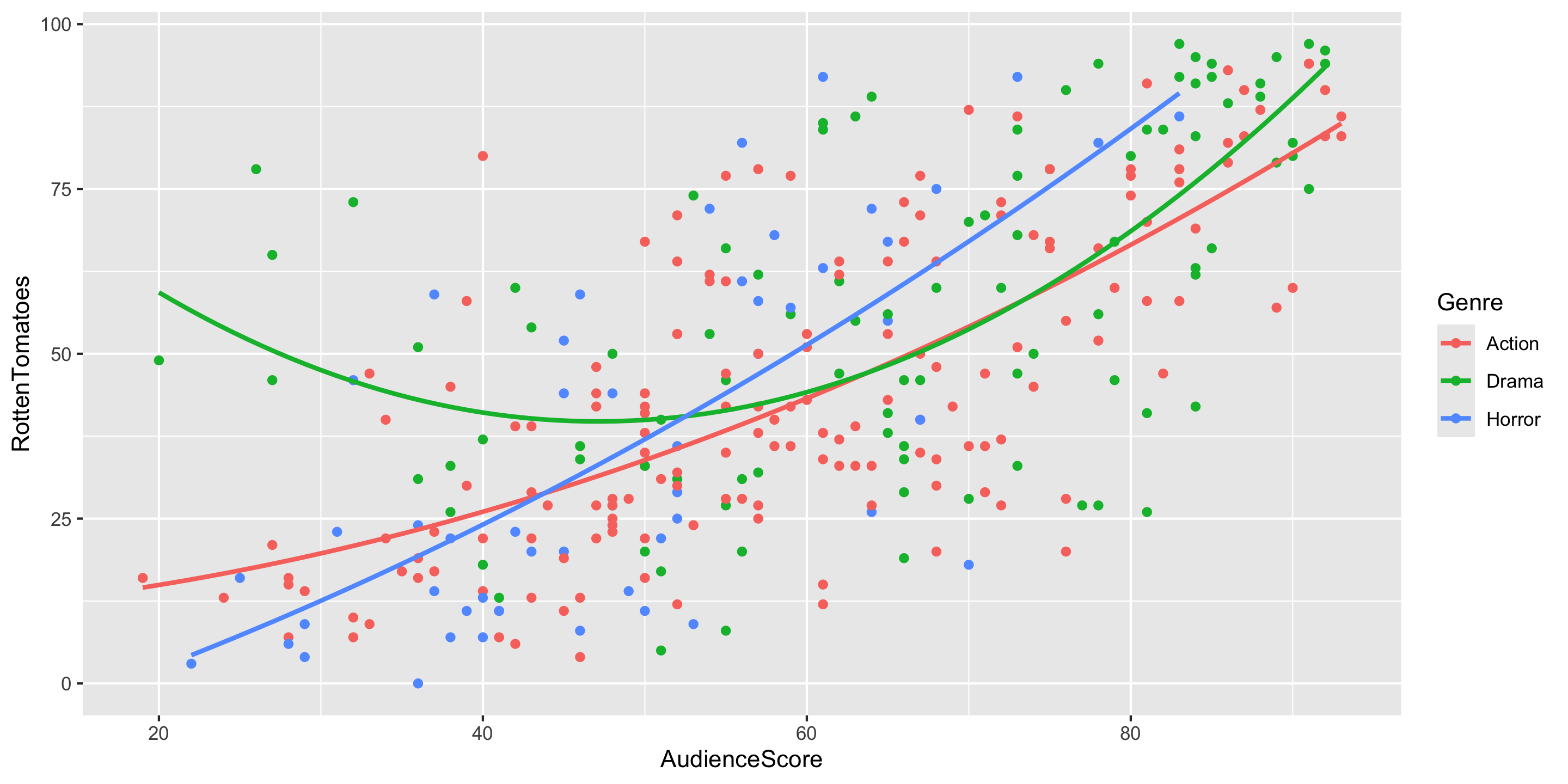

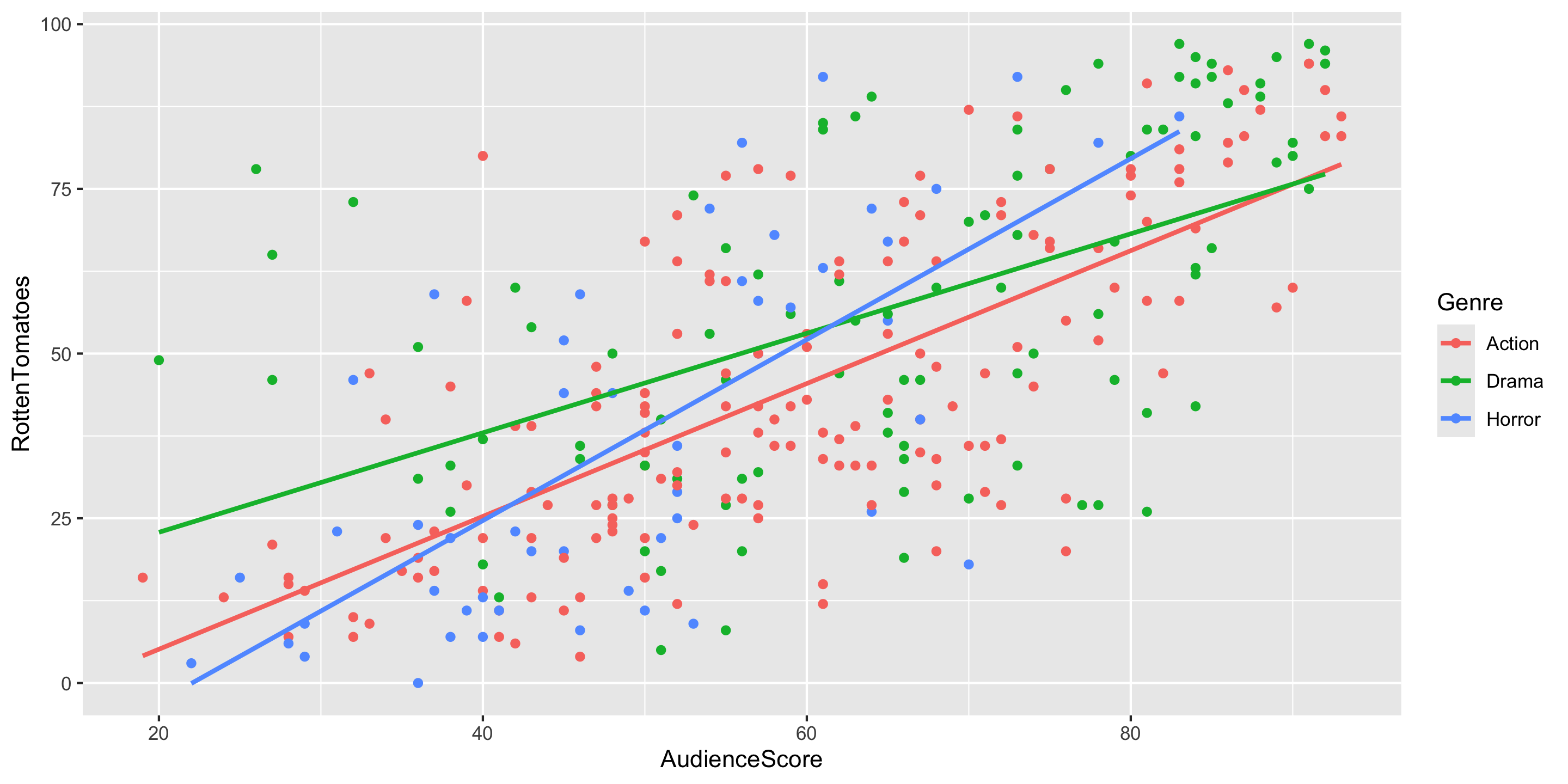

New data context: movie ratings

Rows: 313

Columns: 16

$ Movie <chr> "Spider-Man 3", "Transformers", "Pirates of the Carib…

$ LeadStudio <chr> "Sony", "Paramount", "Disney", "Warner Bros", "Warner…

$ RottenTomatoes <dbl> 61, 57, 45, 60, 20, 79, 35, 28, 41, 71, 95, 42, 18, 2…

$ AudienceScore <dbl> 54, 89, 74, 90, 68, 86, 55, 56, 81, 52, 84, 55, 70, 6…

$ Story <chr> "Metamorphosis", "Monster Force", "Rescue", "Sacrific…

$ Genre <chr> "Action", "Action", "Action", "Action", "Action", "Ac…

$ TheatersOpenWeek <dbl> 4252, 4011, 4362, 3103, 3778, 3408, 3959, 3619, 2911,…

$ OpeningWeekend <dbl> 151.1, 70.5, 114.7, 70.9, 49.1, 33.4, 58.0, 45.3, 19.…

$ BOAvgOpenWeekend <dbl> 35540, 17577, 26302, 22844, 12996, 9791, 14663, 12541…

$ DomesticGross <dbl> 336.53, 319.25, 309.42, 210.61, 140.13, 134.53, 131.9…

$ ForeignGross <dbl> 554.34, 390.46, 654.00, 245.45, 117.90, 249.00, 157.1…

$ WorldGross <dbl> 890.87, 709.71, 963.42, 456.07, 258.02, 383.53, 289.0…

$ Budget <dbl> 258.0, 150.0, 300.0, 65.0, 140.0, 110.0, 130.0, 110.0…

$ Profitability <dbl> 345.30, 473.14, 321.14, 701.64, 184.30, 348.66, 222.3…

$ OpenProfit <dbl> 58.57, 47.00, 38.23, 109.08, 35.07, 30.36, 44.62, 41.…

$ Year <dbl> 2007, 2007, 2007, 2007, 2007, 2007, 2007, 2007, 2007,…Response variable: RottenTomatoes

Explanatory variables: AudienceScore

Exploring the Data

- Q: What do the trends suggest, would it make sense to include interaction terms in the model?

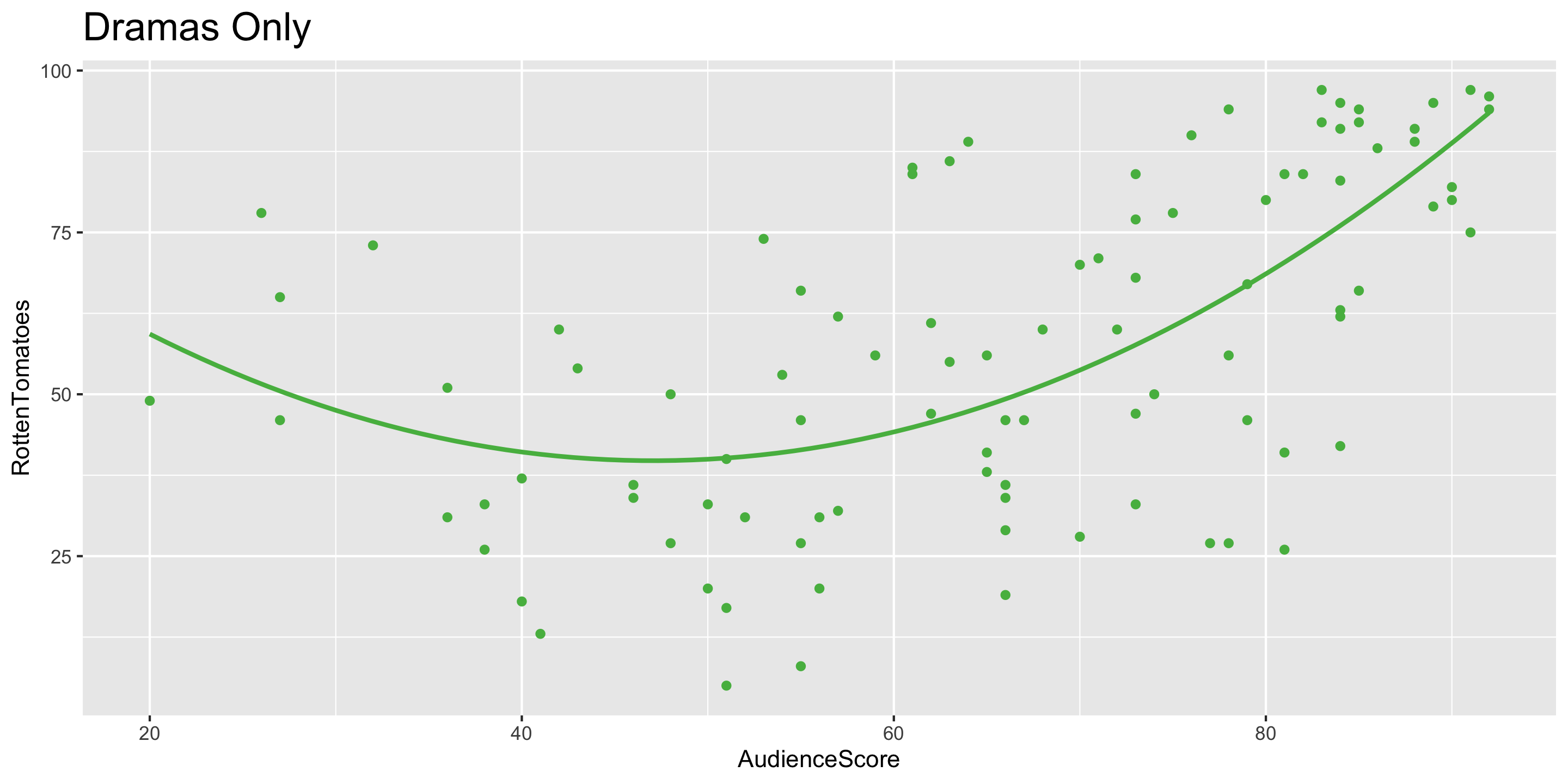

- Q: Does anyone spot a curved relationship for one of the genres?

Adding a Curve to your Scatterplot

Adding a Curve to your Scatterplot

ggplot(data = movies2 %>% filter(Genre == "Drama"),

mapping = aes(x = AudienceScore,

y = RottenTomatoes)) +

geom_point(color = "#55B84F") +

geom_smooth(method = "lm", se = FALSE, color = "#55B84F",

formula = y ~ poly(x, degree = 2)) +

ggtitle("Dramas Only") +

theme(plot.title = element_text(size = 18))

Using Transformations to Account for Non-Linear Relationships

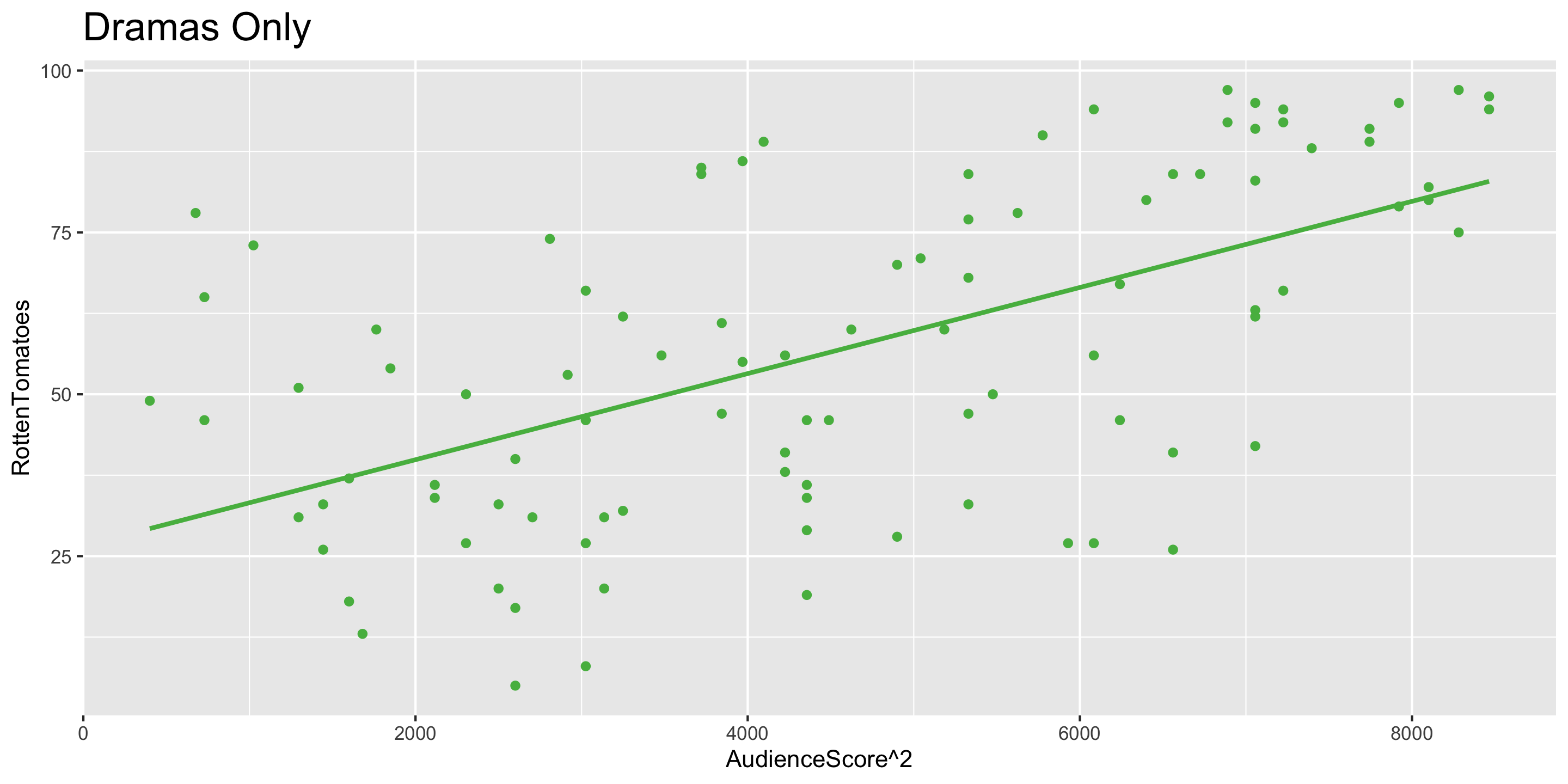

- Notice that the relationship between

AudienceScore\(^2\) andRottenTomatoesis approximately linear. AudienceScore\(^2\) is a transformation ofAudienceScore

Fitting a Model with a Transformed Variable

# A tibble: 3 × 7

term estimate std_error statistic p_value lower_ci upper_ci

<chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 intercept 98.8 21.3 4.64 0 56.6 141.

2 poly(AudienceScore, de… -2.51 0.722 -3.48 0.001 -3.94 -1.08

3 poly(AudienceScore, de… 0.027 0.006 4.58 0 0.015 0.038- We’ll practice a very common transformation in lab this week - the log transformation

- Q: Why is it still called a linear regression if the model also handles curved relationship?

- Transformations are super powerful: we can now use linear models even if the untransformed variables have non-linear relationships.

Model Building Guidance

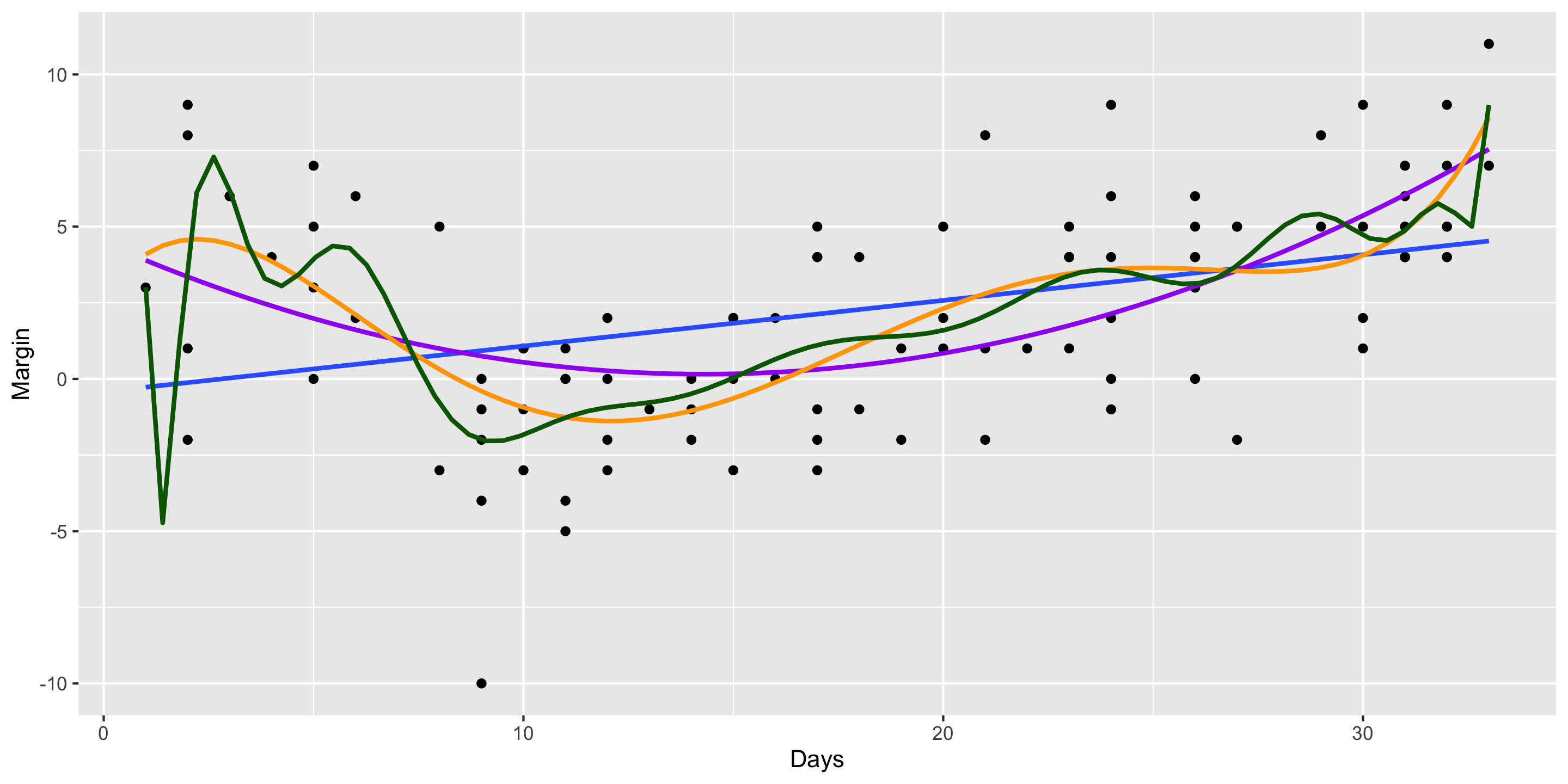

What degree of polynomial should I include in my model?

Guiding Principle: Capture the general trend, not the noise.

\[ \begin{align} y &= f(x) + \epsilon \\ y &= \mbox{TREND} + \mbox{NOISE} \end{align} \]

2008 Election Polls Example:

Next time

- Linear regression with many variables

- Multicollinearity

- Model selection